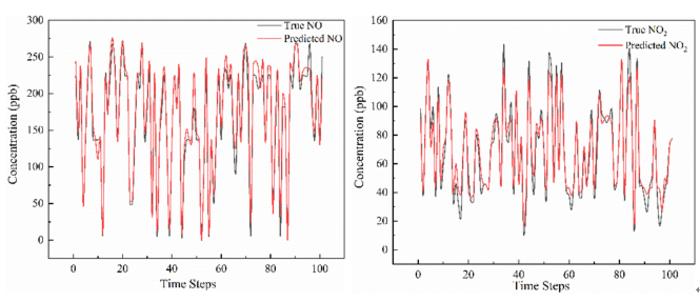

A research team led by the Hefei Institutes of Physical Science in China has unveiled a new deep-learning model that significantly improves the forecasting of roadside air pollutants. The model, called DSTMA-BLSTM (Dynamic Shared and Task-specific Multi-head Attention Bidirectional Long Short-Term Memory), achieved an R² above 0.94 on major pollutants and cut prediction errors by about 30% compared with conventional LSTM models.
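
For readers less familiar with these metrics, here is a minimal sketch of how an R² score and a relative error reduction are typically computed. The article does not say which error metric the roughly 30% figure refers to, so RMSE is an assumption here, and the concentration values are made up purely for illustration; they are not the team's data.

```python
import math


def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


def rmse(observed, predicted):
    """Root-mean-square error (assumed metric; the article does not name one)."""
    squared = [(o - p) ** 2 for o, p in zip(observed, predicted)]
    return math.sqrt(sum(squared) / len(squared))


def relative_error_reduction(baseline_error, new_error):
    """Fraction by which new_error is lower than baseline_error."""
    return (baseline_error - new_error) / baseline_error


# Hypothetical hourly NO2 readings and two sets of forecasts (illustrative only).
observed = [40.0, 55.0, 70.0, 62.0, 48.0]
baseline_forecast = [45.0, 50.0, 62.0, 68.0, 44.0]
improved_forecast = [42.0, 53.0, 67.0, 64.0, 47.0]

baseline_rmse = rmse(observed, baseline_forecast)
improved_rmse = rmse(observed, improved_forecast)

print("R² (improved):", round(r_squared(observed, improved_forecast), 3))
print("Error reduction:", round(relative_error_reduction(baseline_rmse, improved_rmse), 3))
```

An R² of 1.0 would mean the forecasts reproduce every observed value exactly, so a score above 0.94 indicates that most of the variance in the measurements is explained by the model.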

The core innovation lies in how it decomposes the intertwined effects of traffic behavior, meteorology, and emissions: a shared “attention” layer extracts common temporal patterns across pollutants, while task-specific attention heads isolate the unique dynamics of each pollutant.
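
The shared versus task-specific split can be sketched in a few lines of plain Python. This is a deliberately simplified illustration, not the team's implementation: single-head dot-product attention pooling stands in for multi-head attention, the hidden states are a hand-written toy matrix rather than BiLSTM outputs, and every name and number below (`attention_pool`, the query vectors, the pollutant keys) is hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(hidden_states, query):
    """Weight each time step by its scaled dot product with `query`,
    then return the weighted average of the hidden states."""
    scale = math.sqrt(len(query))
    scores = [sum(h * q for h, q in zip(step, query)) / scale
              for step in hidden_states]
    weights = softmax(scores)
    dim = len(hidden_states[0])
    context = [sum(w * step[j] for w, step in zip(weights, hidden_states))
               for j in range(dim)]
    return context, weights


def shared_and_task_features(hidden_states, shared_query, task_queries):
    """Concatenate one shared context with one context per pollutant."""
    shared_context, _ = attention_pool(hidden_states, shared_query)
    features = {}
    for task, query in task_queries.items():
        task_context, _ = attention_pool(hidden_states, query)
        features[task] = shared_context + task_context  # list concatenation
    return features


# Toy stand-in for recurrent hidden states: 4 time steps, 3 features each.
hidden_states = [
    [0.1, 0.4, 0.0],
    [0.9, 0.2, 0.3],
    [0.5, 0.8, 0.7],
    [0.2, 0.1, 0.9],
]
shared_query = [1.0, 1.0, 1.0]                 # hypothetical shared query
task_queries = {"NO2": [2.0, 0.0, 0.0],        # hypothetical per-pollutant queries
                "PM2.5": [0.0, 0.0, 2.0]}

features = shared_and_task_features(hidden_states, shared_query, task_queries)
for task, vector in features.items():
    print(task, [round(v, 3) for v in vector])
```

In this sketch the first half of each feature vector is identical across pollutants (the shared pattern), while the second half differs because each pollutant attends to different time steps. In a trained model the queries would be learned rather than fixed by hand.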

From a supercomputing and big-data standpoint, this matters: urban air pollution is a high-dimensional, non-linear system, subject to rapid shifts in traffic flows, weather, emission regimes, and chemical transformations. Taming this complexity requires serious computing power (for training these deep models) and real-time model inference that can integrate streaming sensor data, traffic flow telemetry, meteorological forecasts, and emissions inventories.

In other words, we are entering an era where supercomputing-class workflows (massive data, advanced AI architectures, real-time inference) are not just for cosmology or physics; they’re now essential for everyday environmental management.

Why the urgency, and why the timing matters

A high-accuracy pollutant forecasting system is not confined to the lab. In an era of accelerating climate change, urbanization, and increasing regulatory pressure, the ability to predict pollutant spikes (such as traffic-related NO₂, PM₂.₅, and ozone precursors) has direct implications for public health, energy-use strategies, and climate policy.

However, we are at a precarious point. The COP30 climate summit in Belém, Brazil (Nov 10-21, 2025), saw world leaders state clearly that the planet has already exceeded the 1.5 °C threshold above pre-industrial levels, a critical point for habitability. The summit agenda focuses not only on mitigation (reducing emissions) but also on adaptation, resilience, and science-based decision-making.

This directly relates to the Hefei team's work: one enabler of adaptation is improved forecasting of environmental hazards (including air quality), made possible by computing power and AI. If cities can anticipate problems sooner, they can respond more quickly.

But here’s the catch:

- Better forecasting is necessary, but not sufficient: You can predict pollutant spikes, but if the infrastructure, policies, or finance to act are missing, forecasting becomes an academic exercise.

- The compute-intensive nature of such models means only organizations with high-performance infrastructure or dedicated cloud investments can deploy them, raising concerns about inequality across cities and nations.

- At COP30, despite abundant promises, a significant gap persists. According to policy analysts, current national plans (NDCs) still place the world on a warming trajectory of 2.3-2.8 °C, well above the 1.5 °C target.

- Brazil’s hosting of COP30 is symbolically powerful; the Amazon region is central to global climate dynamics, yet the infrastructure demands of such a summit (and the larger transition) place additional pressure on ecosystems and resources.

What this means for cities

For firms working at the intersection of big data, real estate, and predictive systems, here’s the play:

- Integrate supercomputing-grade forecasting models into urban-scale platforms (e.g., neighborhood-level pollutant alerts, real estate risk dashboards, development-planning tools).

- Recognize that climate risk is now ambient: air-quality shocks, energy-use surges, and infrastructure strain all feed into property value, tenant demand, and regulatory exposure.

- Position real-estate intelligence tools to reflect the new era: not just “location, condition, comps” but “real-time environmental intelligence, resilience capacity, compute-enabled forecasts”.

- Advocate for compute equity: if only select cities can afford real-time supercomputing models, the climate justice gap widens. Platforms that democratize access become strategic.

Bottom line

The Hefei team’s advance is a hopeful sign: supercomputing and AI are proving to be potent levers in environmental forecasting and management. But the larger picture remains sobering: at COP30, the world was warned we are already beyond critical thresholds, and cities face accelerating hazards. The compute muscle is necessary now; it must be matched by policy, infrastructure, equity, and action.

If we don’t build the “compute infrastructure for resilience” alongside our climate infrastructure, forecasts risk becoming unused tools in a climate-stressed world. Let’s keep these three worlds (supercomputing, urban resilience, and climate policy) tightly coupled.
