A research team at the Massachusetts Institute of Technology has developed a computational framework that could help establish gravitational-wave astronomy as a viable method for probing dark matter. Using large-scale numerical simulations of binary black hole mergers in dense dark matter environments, the project introduces a waveform-modeling pipeline designed to distinguish mergers in vacuum from those shaped by surrounding dark matter.

The work represents a notable convergence of computational astrophysics, numerical relativity, and AI-assisted signal analysis. Rather than searching for dark matter through traditional collider experiments or direct-detection instrumentation, the MIT-led team approached the problem as a high-dimensional inference challenge embedded in gravitational-wave data streams from the LIGO-Virgo-KAGRA (LVK) observatories.

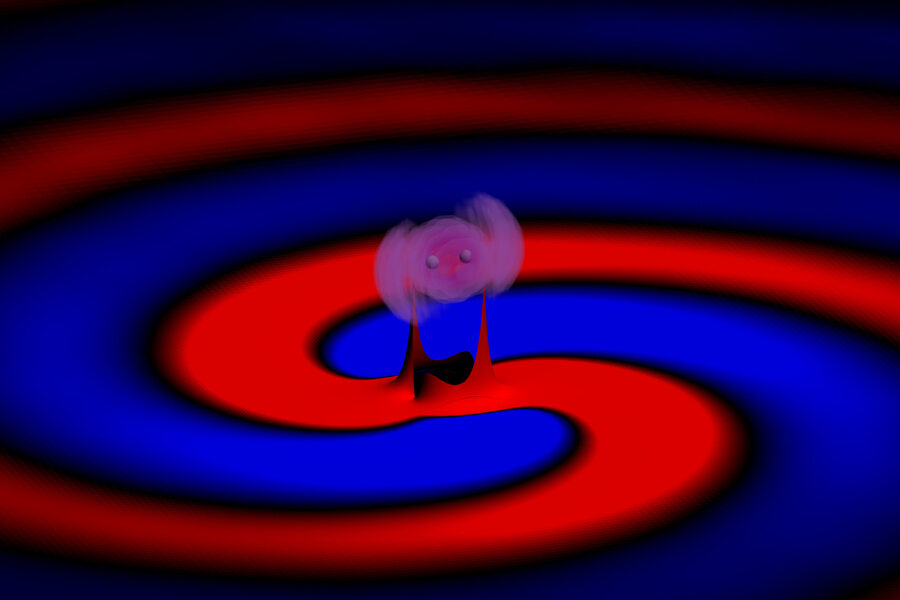

At the center of the research is a new simulation architecture designed to model how dark matter modifies the gravitational waveform emitted by colliding black holes. Specifically, the researchers investigated “light scalar” dark matter candidates, ultralight particles predicted to behave collectively as wave-like fields near rapidly spinning black holes. Under certain conditions, a black hole can transfer rotational energy into the surrounding dark matter field through a relativistic amplification process known as superradiance.
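For reference, the standard superradiance criterion for a bosonic field mode of frequency $\omega$ and azimuthal number $m$ around a Kerr black hole of mass $M$ and spin parameter $a$ is given below in geometric units ($G = c = 1$). This is textbook Kerr physics, not a formula taken from the MIT study. For a light scalar of mass $\mu$, bound modes have $\omega \approx \mu$ (with $\hbar = 1$), so the cloud grows efficiently only when the field's Compton wavelength is comparable to the size of the black hole.

```latex
\omega < m\,\Omega_H, \qquad
\Omega_H = \frac{a}{r_+^2 + a^2} = \frac{a}{2 M r_+}, \qquad
r_+ = M + \sqrt{M^2 - a^2}
```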

The resulting dark matter cloud can become dense enough to perturb the orbital dynamics of a black hole binary system. Those perturbations, in turn, alter the emitted gravitational-wave signal. The challenge for researchers was determining whether such modifications survive long enough, and remain coherent enough, to be detectable after propagating millions or billions of light-years across spacetime.

To solve that problem, the MIT group constructed detailed numerical simulations spanning multiple black hole configurations, dark matter densities, orbital geometries, and mass ratios. The simulations generated synthetic gravitational waveforms representing mergers occurring inside dark matter environments rather than empty spacetime.

From a computational-science perspective, the project resembles a large-scale inverse modeling problem. The researchers effectively built a parameterized waveform generator capable of embedding environmental physics into relativistic merger simulations. Instead of treating black hole binaries as isolated vacuum systems (the standard assumption in most gravitational-wave pipelines), the framework introduces environmental coupling terms associated with scalar-field dark matter interactions.
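To make the idea of "environmental coupling terms" concrete, here is a minimal sketch of a parameterized forward model, assuming a frequency-domain phase. The vacuum part is the standard leading-order (Newtonian) stationary-phase result for a quasi-circular inspiral. The environmental term, its amplitude `eps`, and its post-Newtonian order `pn_order` are hypothetical placeholders chosen for illustration; they are not the MIT team's dark matter model.

```python
import numpy as np

# Seconds per solar mass in geometric units (G * M_sun / c**3).
G_OVER_C3 = 4.925490947e-6

def newtonian_phase(f, mc_sec, t_c=0.0, phi_c=0.0):
    """Leading-order stationary-phase GW phase Psi(f) for a circular inspiral.

    mc_sec is the chirp mass expressed in seconds (chirp mass times G/c**3).
    """
    return (2.0 * np.pi * f * t_c - phi_c - np.pi / 4.0
            + (3.0 / 128.0) * (np.pi * mc_sec * f) ** (-5.0 / 3.0))

def environmental_dephasing(f, mc_sec, eps, pn_order=-4.0):
    """HYPOTHETICAL environmental term at a negative post-Newtonian order.

    Relative to the Newtonian phase it scales as v**(2 * pn_order), with
    v = (pi * mc_sec * f)**(1/3), so it grows at low frequency (wide orbits).
    """
    v = (np.pi * mc_sec * f) ** (1.0 / 3.0)
    return eps * (3.0 / 128.0) * v ** -5.0 * v ** (2.0 * pn_order)

def waveform_phase(f, mc_sec, eps=0.0, pn_order=-4.0):
    """Vacuum phase plus the optional environmental term; eps = 0 is vacuum."""
    return newtonian_phase(f, mc_sec) + environmental_dephasing(f, mc_sec, eps, pn_order)

# Usage: a 25 solar-mass chirp mass between 20 and 200 Hz.
f = np.linspace(20.0, 200.0, 10)
mc = 25.0 * G_OVER_C3
dpsi = waveform_phase(f, mc, eps=1e-8) - waveform_phase(f, mc)
```

Setting `eps = 0` recovers the vacuum model exactly, which is the property that lets one template family nest the other. In this toy model the phase difference `dpsi` is largest at the low-frequency end of the band.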

This matters because modern gravitational-wave observatories generate massive volumes of noisy data that must be filtered through large template banks generated by simulation. Detecting subtle dark matter signatures therefore becomes, at its core, a computational pattern-recognition problem in a high-dimensional parameter space.
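The template-bank idea can be sketched with a toy matched filter: correlate the data against every template and keep the best normalized overlap. This is a simplified illustration under stated assumptions. The linear-chirp templates (`toy_chirp`) are hypothetical stand-ins for physical waveforms, the noise is white, and the arrival time is assumed known; production pipelines weight the inner product by the detector noise spectrum and maximize over arrival time and phase.

```python
import numpy as np

def toy_chirp(t, f0, k):
    # HYPOTHETICAL toy template: a Hann-tapered linear chirp, not a physical GW waveform.
    return np.hanning(t.size) * np.sin(2.0 * np.pi * (f0 * t + 0.5 * k * t**2))

def normalized_overlap(data, template):
    # White-noise inner product <d, h> / sqrt(<h, h>); with unit-variance noise
    # this is the matched-filter signal-to-noise ratio at zero lag.
    return np.dot(data, template) / np.sqrt(np.dot(template, template))

def search_bank(data, bank):
    # Score every template and return the index and score of the best match.
    scores = np.array([normalized_overlap(data, h) for h in bank])
    best = int(np.argmax(scores))
    return best, scores[best]

rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 4096, endpoint=False)
rates = np.linspace(20.0, 80.0, 13)            # chirp-rate grid in Hz/s
bank = [toy_chirp(t, 30.0, k) for k in rates]
true_index = 7
data = 0.5 * bank[true_index] + rng.normal(0.0, 1.0, t.size)
best, score = search_bank(data, bank)
```

Even in this toy, the cost grows linearly with the number of templates, and a denser grid in every added parameter (such as an environmental one) multiplies the bank size.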

The MIT team applied its simulation framework to publicly available LVK datasets covering the observatories’ first three observing runs. Out of 28 high-confidence merger events examined, 27 aligned closely with standard vacuum-based merger predictions. However, one event, GW190728, exhibited statistical agreement with the new dark matter-enhanced waveform model.

The researchers stress that this is not evidence of dark matter detection. The statistical significance remains insufficient for a discovery claim, and independent validation will be required. Yet the computational importance of the work lies elsewhere: the simulations suggest that environmental dark matter effects need no longer be computationally invisible inside gravitational-wave archives.
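One reason a single match out of 28 events falls short of a discovery claim is the trials factor: the more events are tested, the more likely at least one crosses a threshold by chance. The sketch below assumes the events are independent and uses a hypothetical per-event false-preference probability of 5%; the source reports no such number.

```python
def prob_at_least_one(p_single, n_events):
    # Probability that at least one of n independent events crosses a threshold
    # that each event crosses by chance with probability p_single.
    return 1.0 - (1.0 - p_single) ** n_events

# HYPOTHETICAL per-event false-preference rate (not from the study).
p = 0.05
print(round(prob_at_least_one(p, 28), 3))  # 0.762
```

Under these assumptions, a chance preference in at least one of 28 events would be more likely than not, which is why per-event significance must be corrected for the number of events searched.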

For computer scientists, the project highlights a broader transformation underway in computational physics. Increasingly, frontier discoveries are emerging not purely from experimental hardware, but from the interaction between simulation systems, probabilistic inference engines, and large-scale observational datasets.

In practical terms, the waveform generation framework plays a role loosely analogous to a scientific foundation model for relativistic astrophysics. The simulation pipeline maps physical parameters (black hole mass, spin, orbital evolution, scalar field density, and propagation distance) into predicted waveforms that can then be compared against detector observations.
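The final step, comparing predicted waveforms against observations, is typically Bayesian. As a minimal sketch under stated assumptions, the code below evaluates a Gaussian white-noise likelihood on a grid of one environmental parameter with a flat prior. The forward model `toy_waveform`, its parameter `eps`, and the synthetic data are hypothetical; real analyses sample many parameters at once with stochastic samplers rather than grids.

```python
import numpy as np

def toy_waveform(t, eps, f0=40.0):
    # HYPOTHETICAL forward model: a sinusoid whose phase drifts by eps * t**2 rad,
    # standing in for an environment-induced dephasing; eps = 0 is "vacuum".
    return np.sin(2.0 * np.pi * f0 * t + eps * t**2)

def log_likelihood(data, model, sigma):
    # Gaussian white-noise log-likelihood, up to an additive constant.
    r = data - model
    return -0.5 * np.dot(r, r) / sigma**2

def grid_posterior(data, t, eps_grid, sigma):
    # With a flat prior on the grid, the posterior is proportional to the likelihood.
    logl = np.array([log_likelihood(data, toy_waveform(t, e), sigma) for e in eps_grid])
    w = np.exp(logl - logl.max())   # subtract the maximum for numerical stability
    return w / w.sum()

rng = np.random.default_rng(1)
t = np.linspace(0.0, 2.0, 2000, endpoint=False)
sigma = 1.0
eps_true = 3.0
data = toy_waveform(t, eps_true) + rng.normal(0.0, sigma, t.size)
eps_grid = np.linspace(0.0, 6.0, 601)
post = grid_posterior(data, t, eps_grid, sigma)
eps_mean = float(np.dot(eps_grid, post))
```

Because the vacuum model is the `eps = 0` slice of the same family, the posterior weight near zero directly indicates how strongly the data disfavor the vacuum hypothesis in this toy setting.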

The computational cost of such simulations is substantial. Numerical relativity calculations involving black hole binaries already require high-performance computing infrastructure due to the complexity of solving Einstein's field equations across discretized spacetime grids. Introducing coupled dark matter fields adds evolved variables and further tightens numerical stability requirements. The work therefore reflects the growing dependence of astrophysics on HPC-scale numerical modeling and large distributed data-analysis pipelines.
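A back-of-the-envelope estimate shows why resolution is expensive. On a uniform 3D grid with explicit time stepping, refining the spacing by a factor r multiplies the number of cells by r**3, and the Courant (CFL) stability condition forces r times as many time steps, so cost scales roughly as r**4. This is a simplifying assumption: production numerical relativity codes use adaptive mesh refinement, which changes the scaling, and the `extra_work` multiplier for additional evolved fields is a hypothetical knob.

```python
def relative_cost(refinement, dims=3, extra_work=1.0):
    # Uniform grid, explicit stepping: cells ~ r**dims, CFL-limited steps ~ r,
    # so cost ~ r**(dims + 1). extra_work (HYPOTHETICAL) scales for added fields.
    return extra_work * refinement ** (dims + 1)

print(relative_cost(2))  # 16.0
```

Doubling the resolution therefore costs about 16 times more under these assumptions, before any extra fields are added.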

The project also underscores an emerging trend in scientific computing: environmental context modeling. Historically, many simulation frameworks simplified astrophysical systems into isolated, idealized conditions. But next-generation simulations increasingly attempt to incorporate surrounding matter fields, turbulence, plasma interactions, magnetic structures, and now dark matter environments directly into the numerical stack.

That shift parallels developments in climate science, fusion research, and molecular dynamics, where researchers are moving from simplified equilibrium approximations toward fully coupled multiphysics simulations.

The MIT work may ultimately prove important not because it definitively identified dark matter, but because it expanded the computational search space through which dark matter can be explored. Instead of requiring entirely new detectors, the framework effectively upgrades existing gravitational-wave observatories into indirect dark matter sensors through simulation-driven inference.

As future observatories such as the Einstein Telescope and Cosmic Explorer come online, the resolution and sensitivity of gravitational-wave measurements are expected to increase dramatically. That will place even greater emphasis on scalable waveform simulation, uncertainty quantification, Bayesian inference, and AI-assisted signal classification.

For the supercomputing community, the message is becoming increasingly clear: modern astrophysics is evolving into a computational discipline where simulations are no longer auxiliary tools for interpreting observations. They are becoming the instruments of discovery themselves.
