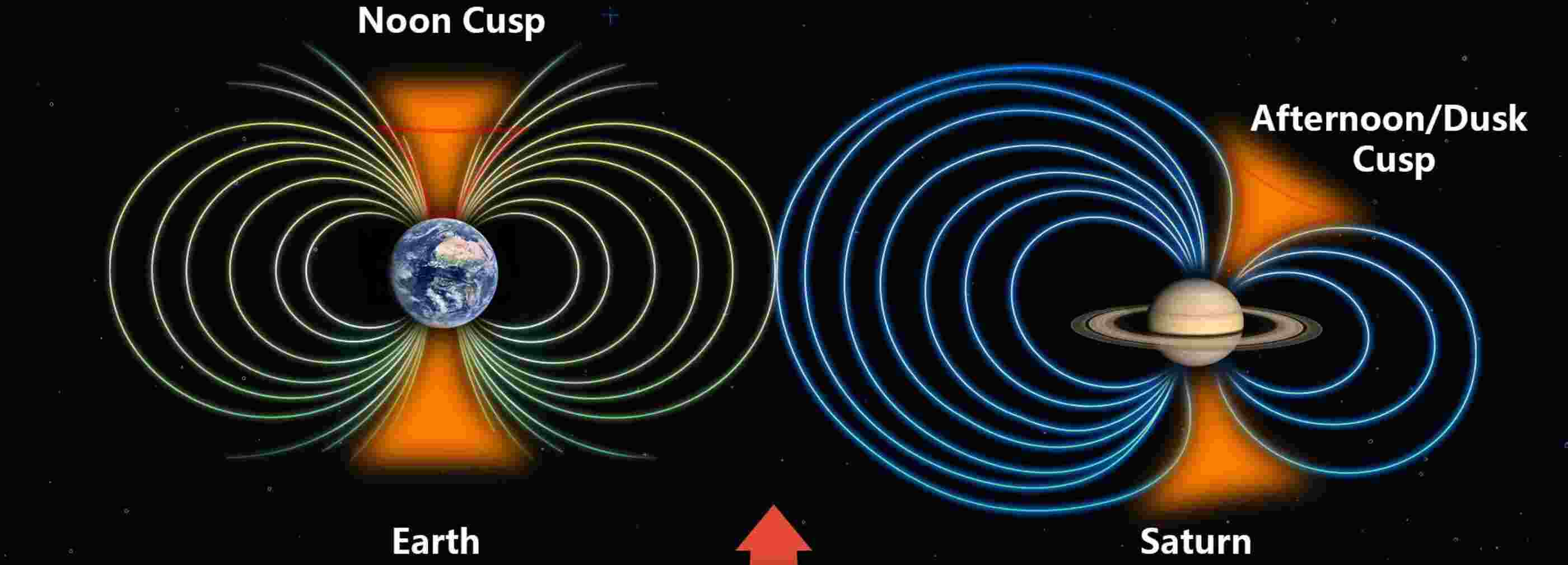

Humanity has sent astronauts back into deep space through NASA’s Artemis II mission, the greatest threat isn’t distance, isolation, or mechanical failure. Instead, it’s something far more elusive: solar radiation, an invisible force with the potential to damage DNA, disrupt electronics, and endanger lives within minutes.

Now, in a powerful convergence of computational science and space exploration, researchers at the University of Michigan are deploying a new generation of solar radiation forecasting tools, driven by high-performance computing (HPC), to help safeguard astronauts venturing beyond Earth’s protective magnetic field.

The Supercomputing Shield

At the heart of this effort lies a dual-model system: a machine-learning predictor and a physics-based simulation engine. Together, they represent a new paradigm in space weather forecasting, one that depends fundamentally on supercomputing.

The machine-learning model continuously analyzes vast streams of solar imagery captured by spacecraft such as NASA’s Solar Dynamics Observatory and the Solar and Heliospheric Observatory.

Trained on decades of data, it identifies subtle precursors to solar particle events, delivering probabilistic forecasts up to 24 hours in advance, an extraordinary leap in preparedness.

But prediction alone is not enough. Understanding severity, timing, and duration requires something more computationally demanding: physics.

This is where HPC systems come into play.

NASA has committed significant supercomputing resources to run a sophisticated physics-based model that simulates how solar energetic particles accelerate in the sun’s corona and propagate through space. These simulations are not trivial, they must resolve complex plasma interactions, magnetic field dynamics, and near–light–speed particle transport in near-real time.

Without supercomputing, such modeling would take too long to be actionable. With it, astronauts gain a critical advantage: time.

From Minutes to Meaningful Warnings

Solar energetic particles can reach spacecraft in minutes after a solar eruption, leaving little room for reaction. But by combining machine learning with physics-based HPC simulations, scientists are transforming raw solar data into actionable intelligence.

The result is a system that doesn’t just say something might happen; it helps answer how bad it will be, when it will arrive, and how long it will last.

This distinction is crucial.

With early warnings, Artemis astronauts can reconfigure their spacecraft, strategically repositioning equipment to create temporary radiation shelters. These operational decisions, guided by supercomputing-powered forecasts, could mean the difference between routine exposure and dangerous dose levels.

Computing at the Edge of Human Exploration

The Artemis II mission represents the first crewed journey beyond low-Earth orbit in over 50 years. Unlike astronauts aboard the International Space Station, Artemis crews will operate largely outside Earth’s magnetic shield, where radiation risks intensify dramatically.

Compounding the challenge, the mission coincides with the peak of the sun’s 11-year activity cycle, a period marked by more frequent and intense solar eruptions.

In this environment, supercomputing becomes more than a research tool; it becomes mission-critical infrastructure.

These systems ingest real-time observational data, execute computationally intensive models, and deliver forecasts that must be both fast and accurate. Every second matters. Every calculation counts.

A New Era of Predictive Spaceflight

What makes this moment transformative is not just the technology itself, but what it represents: a shift from reactive to predictive space exploration.

For decades, astronauts have relied on monitoring and mitigation. Now, thanks to advances in HPC and artificial intelligence, they can anticipate and prepare.

This capability is being developed under initiatives like the CLEAR Center (Center for All-Clear Solar Energetic Particle Forecasts), which aims to integrate machine learning, physics, and empirical models into a unified forecasting framework.

It is a vision where supercomputers act as early warning systems for humanity’s expansion into space, where algorithms scan the sun continuously, and simulations map invisible dangers before they arrive.

Inspiration at Scale

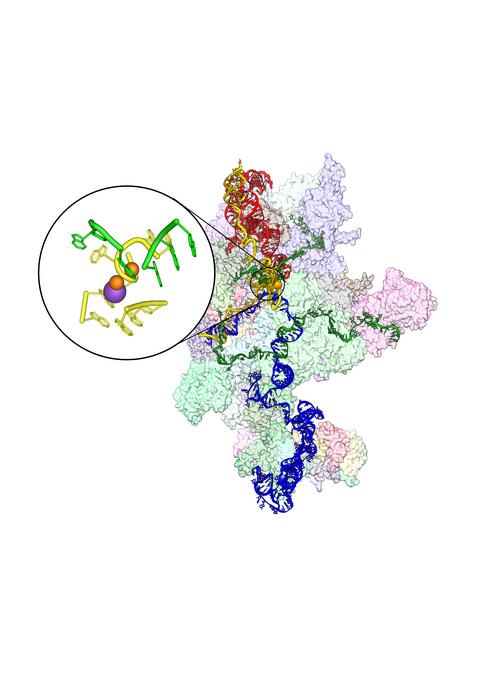

There is something profoundly inspiring about this intersection of disciplines. The same computational power used to model climate systems, design advanced materials, and decode biological complexity is now being harnessed to protect human life millions of miles from Earth.

Supercomputing is no longer confined to laboratories and data centers; it is extending its reach into orbit, into deep space, and into the future of human exploration.

As Artemis II arcs around the Moon, it will carry more than astronauts. It will carry the culmination of decades of computational innovation, a silent, invisible shield built from data, algorithms, and the relentless pursuit of understanding.

And in that sense, every core, every node, every simulation is part of the mission.

Because before we can safely explore the cosmos, we must first compute it.

How to resolve AdBlock issue?

How to resolve AdBlock issue?