Introduction

Scientists from the University of Birmingham in the UK and Goethe University in Frankfurt have collaborated to study the impact of environmental changes on freshwater lakes over the past century. Using a DNA "time machine" to analyze sediment cores, the researchers discovered that pollution, extreme weather events, and rising temperatures have contributed to the irreversible loss of biodiversity. Their findings, supported by AI analysis, highlight the importance of protecting and restoring biodiversity for the health and sustainability of ecosystems.

The "Biodiversity Time Machine"

The team used sediment samples collected from the bottom of a lake in Denmark to reconstruct a 100-year history of biodiversity, chemical pollution, and climate change. This particular lake was an ideal natural experiment due to its well-documented shifts in water quality over time. By analyzing the biological and environmental signals contained in the sediment, the researchers were able to build a detailed picture of yearly biodiversity changes, providing unprecedented insights into the impacts of pollution and climate change on freshwater ecosystems.

Pollution and Climate Change Impacts

The researchers used environmental DNA analysis to identify the key factors responsible for the loss of species in the lake. They found that pollutants such as insecticides and fungicides, along with rising minimum temperatures, had the most detrimental effects on biodiversity levels. The DNA analysis also showed that while the lake had started to recover over the past two decades, the overall biodiversity was still significantly altered compared to its pristine state.

Importance of Protecting Biodiversity

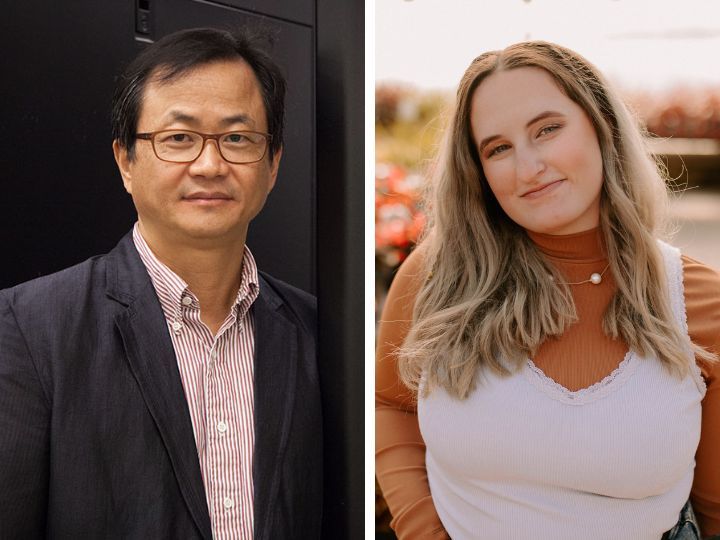

Lead author Niamh Eastwood, a PhD student at the University of Birmingham, emphasized that the biodiversity loss caused by pollution and warming temperatures is irreversible. The disappearance of species observed in the lake's historical record indicates that not all lost biodiversity can be restored. Eastwood stressed the critical need to protect biodiversity to prevent further losses and preserve the essential ecosystem services it provides.

Role of AI and Future Research

The researchers used AI algorithms to analyze the DNA and environmental datasets, enabling them to identify patterns and drivers of biodiversity loss over time. They highlighted the value of AI-based approaches for understanding the impacts of historic changes and for predicting future biodiversity loss under various pollution scenarios. The team plans to expand the research to additional lakes in England and Wales so that the findings can be generalized to freshwater ecosystems more broadly.

Preserving the Future of Freshwater Ecosystems

The study by researchers at the University of Birmingham and Goethe University underscores the urgent need for action to protect and restore the biodiversity of freshwater lakes. By understanding the interconnected effects of pollution, climate change, and human activities, policymakers and regulators can develop targeted strategies to mitigate further damage. Preserving the health and diversity of natural ecosystems is crucial for sustaining essential ecosystem services and ensuring the well-being of both wildlife and human populations.

Conclusion

The research by the University of Birmingham and Goethe University sheds light on a century of biodiversity loss in a freshwater lake driven by pollution and climate change. By applying AI to DNA data from sediment cores, the researchers provided a detailed understanding of the lasting impacts of human activities on ecosystems. This research serves as a call to action, urging stakeholders to prioritize the protection and restoration of biodiversity to safeguard the health and resilience of freshwater ecosystems for generations to come.
