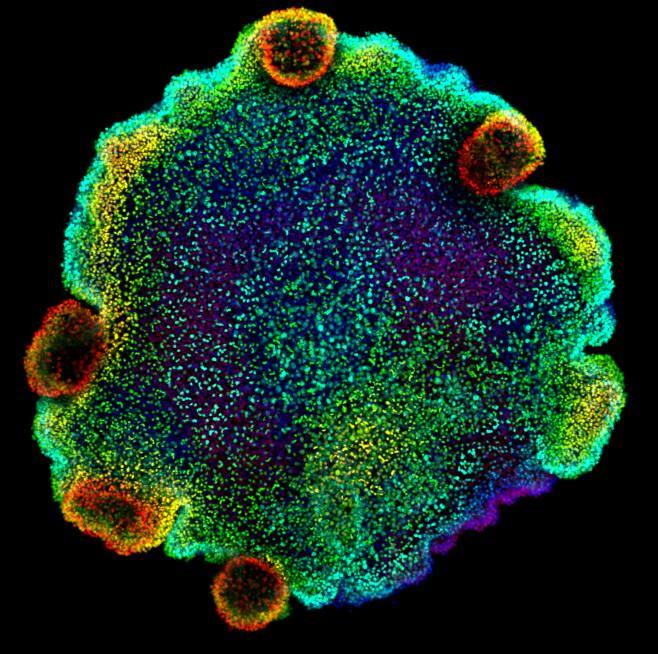

Researchers at Dana-Farber have discovered a potential new way to evaluate certain features of clear cell renal cell carcinoma (ccRCC) using deep learning and image processing. Their AI-based assessment tool analyzes two-dimensional images of a tumor sample taken from a pathology slide and identifies previously unknown features, such as tumor microheterogeneity, that could help predict whether the tumor will respond to immunotherapy. This discovery suggests that pathology slides may contain valuable biological information about ccRCC tumors and other types of tumors, which could be useful in better understanding the biology of cancer.

The work, which is described in Cell Reports Medicine, is part of a broader effort at Dana-Farber to use AI in biologically grounded ways to transform cancer care and cancer discovery.

“This is an example of the growing convergence of AI and cancer biology,” says co-senior author Eliezer Van Allen, MD, Chief of the Division of Population Sciences at Dana-Farber. “It represents a major opportunity to measure key features of the tumor and its immune microenvironment at the same time. These measures could help drive not only biological discovery but also potentially guide cancer care.”

Renal cell carcinoma is a prevalent cancer type, ranking among the top 10 most common cancers globally. The clear cell subtype, specifically, accounts for about 75-80% of metastatic cases. While some tumors respond well to immune checkpoint inhibitors (ICIs), there is currently no reliable method to predict whether a ccRCC tumor will respond to immunotherapy with an ICI.

“We wanted to know what a tumor that responds to immunotherapy looks like,” says first author Jackson Nyman, Ph.D., who was a graduate student in Van Allen’s lab and is now at PathAI. “Is there anything in the pathology slide that might give us clues about what is different about the tumors?”

Pathologists analyze pathology slides of tumor samples that have been stained to reveal the structures of cells as part of the diagnosis process. A crucial measure that is taken into account is the nuclear grade, which indicates how much the tumor cells deviate from normal cells.

As part of a collaborative project, Nyman, Sabina Signoretti, MD, a pathologist at Dana-Farber, and Toni Choueiri, MD, Director of the Lank Center for Genitourinary Oncology at Dana-Farber, trained an AI model to assess a tumor's nuclear grade. The AI model not only successfully assessed the nuclear grade, but was also able to identify variations in grade across a tumor sample.

Inspired by this finding, the team expanded their deep learning model to quantify tumor microheterogeneity and immune properties, such as immune infiltration, across the slide. Tumor microheterogeneity measures the degree to which the nuclear grade varies across the slide. Immune infiltration measures how deeply lymphocytes, the warriors of the immune system, have penetrated the tumor. Although pathologists can make these measurements by hand, doing so is too time-consuming for routine practice.
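To make the idea of grade variation across a slide concrete, here is a minimal Python sketch. It is not the method from the study, whose exact metric is not described here. It assumes, purely for illustration, that a slide has already been split into a grid of tiles and that each tumor tile carries a predicted nuclear grade (with `None` for non-tumor tiles). The function names `grade_entropy` and `neighbor_disagreement` are hypothetical, and Shannon entropy and neighbor disagreement are stand-in measures chosen for simplicity.

```python
import math
from collections import Counter


def grade_entropy(grades):
    """Shannon entropy (in bits) of the tile-level grade distribution.

    `grades` is a list of rows; each entry is a predicted nuclear grade
    or None for a tile with no tumor (an assumed, illustrative format).
    """
    counts = Counter(g for row in grades for g in row if g is not None)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    # p * log2(1/p) summed over grades; 0 when every tile has the same grade
    return sum((c / total) * math.log2(total / c) for c in counts.values())


def neighbor_disagreement(grades):
    """Fraction of adjacent tumor-tile pairs whose grades differ.

    Only right and down neighbors are checked, so each pair counts once.
    """
    pairs = 0
    differing = 0
    for r, row in enumerate(grades):
        for c, grade in enumerate(row):
            if grade is None:
                continue
            for dr, dc in ((0, 1), (1, 0)):
                rr, cc = r + dr, c + dc
                if rr < len(grades) and cc < len(grades[rr]):
                    neighbor = grades[rr][cc]
                    if neighbor is not None:
                        pairs += 1
                        differing += grade != neighbor
    return differing / pairs if pairs else 0.0


# Toy grids: one uniform tumor, one with two grades interleaved
uniform = [[2, 2], [2, 2]]
mixed = [[2, 4], [4, 2]]
print(grade_entropy(uniform), neighbor_disagreement(uniform))
print(grade_entropy(mixed), neighbor_disagreement(mixed))
```

The two functions capture different things: entropy reflects how many grades are present and in what proportions, while neighbor disagreement reflects how finely those grades are interleaved in space. A real pipeline would also need the tiling and grade-prediction steps, which are outside the scope of this sketch.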

When the team assessed a set of ccRCC pathology slides with their AI model, they found that some tumors were notably homogeneous while others had many different nuclear grades in various patterns. They also observed that lymphocytes were present in some tumors, while others lacked substantial infiltration.

“There was a visual difference in some patient images versus others that had not been obvious before,” says Nyman. “We wondered if certain patterns might be predictive of a response to immunotherapy.”

To answer that question, the team used their AI-based tool to examine pathology slides of tumors from patients who participated in the CheckMate 025 randomized phase 3 clinical trial. The trial compared monotherapy with an ICI against an mTOR inhibitor in patients with ccRCC who had previously undergone standard therapy.

They discovered that certain features, such as tumor microheterogeneity and immune infiltration, were linked to improved overall survival among patients who were taking immune checkpoint inhibitors. The tumors that responded to ICIs had higher levels of tumor microheterogeneity and denser infiltration of lymphocytes in high-grade regions.

“These signals are hiding in plain sight,” says Van Allen. “They are just hard for pathologists to practically measure on individual slides. With AI, we have a scalable way to potentially squeeze a lot more information out of these slides.”

Although the tool is not yet suitable for clinical use, the team is in the process of testing it in an ongoing clinical trial. The trial involves the use of combination immunotherapy as a first-line treatment for patients with ccRCC. Additionally, the team plans to investigate whether these visual clues found in pathology slides are linked to specific molecular features of the tumor, such as gene alterations.

“The use of deep learning strategies to identify tumor and microenvironmental features from histopathology slides and determine their relationship to molecular and clinical states may have value across tumor types and therapeutic modalities,” says Van Allen.

The research conducted by the Dana-Farber Cancer Institute has shown that deep learning can help reveal valuable insights about kidney cancer from pathology slides. This finding has the potential to enhance the accuracy of diagnosis and treatment of kidney cancer, which may lead to better outcomes for patients.
