Moore, who set the course for the future of the semiconductor industry, devoted his later years to philanthropy. Intel and the Gordon and Betty Moore Foundation have announced that company co-founder Gordon Moore has passed away at the age of 94. The foundation reported he died peacefully on Friday, March 24, 2023, surrounded by family at his home in Hawaii.

Moore and his longtime colleague Robert Noyce founded Intel in July 1968. Moore initially served as executive vice president until 1975, when he became president. In 1979, Moore was named chairman of the board and chief executive officer, posts he held until 1987, when he gave up the CEO position and continued as chairman. In 1997, Moore became chairman emeritus, stepping down in 2006.

During his lifetime, Moore also dedicated his focus and energy to philanthropy, particularly environmental conservation, science, and patient care improvements. Along with his wife of 72 years, he established the Gordon and Betty Moore Foundation, which has donated more than $5.1 billion to charitable causes since its founding in 2000.

“Those of us who have met and worked with Gordon will forever be inspired by his wisdom, humility, and generosity,” reflected foundation president Harvey Fineberg. “Though he never aspired to be a household name, Gordon’s vision and his life’s work enabled the phenomenal innovation and technological developments that shape our everyday lives. Yet those historic achievements are only part of his legacy. His and Betty’s generosity as philanthropists will shape the world for generations to come.”

Pat Gelsinger, Intel CEO, said, “Gordon Moore defined the technology industry through his insight and vision. He was instrumental in revealing the power of transistors and inspired technologists and entrepreneurs across the decades. We at Intel remain inspired by Moore’s Law and intend to pursue it until the periodic table is exhausted. Gordon’s vision lives on as our true north as we use the power of technology to improve the lives of every person on Earth. My career and much of my life took shape within the possibilities fueled by Gordon’s leadership at the helm of Intel, and I am humbled by the honor and responsibility to carry his legacy forward.”

Frank D. Yeary, chairman of Intel’s board of directors, said, “Gordon was a brilliant scientist and one of America’s leading entrepreneurs and business leaders. It is impossible to imagine the world we live in today, with computing so essential to our lives, without the contributions of Gordon Moore. He will always be an inspiration to our Intel family and his thinking is at the core of our innovation culture.”

Andy Bryant, former chairman of Intel’s board of directors, said, “I will remember Gordon as a brilliant scientist, a straight-talker, and an astute businessperson who sought to make the world better and always do the right thing. It was a privilege to know him, and I am grateful that his legacy lives on in the culture of the company he helped to create.”

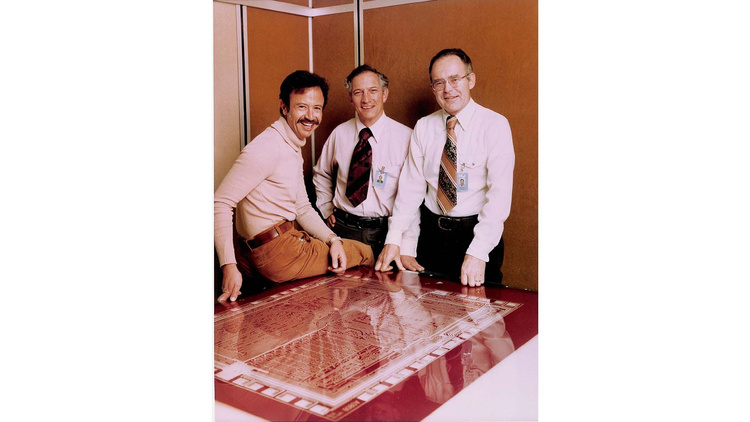

Before establishing Intel, Moore and Noyce participated in the founding of Fairchild Semiconductor, where they played central roles in the first commercial production of diffused silicon transistors and later the world’s first commercially viable integrated circuits. The two had previously worked together under William Shockley, the co-inventor of the transistor and founder of Shockley Semiconductor, which was the first semiconductor company established in what would become Silicon Valley. Upon striking out on their own, Moore and Noyce hired future Intel CEO Andy Grove as the third employee, and the three of them built Intel into one of the world’s great companies. Together they became known as the “Intel Trinity,” and their legacy continues today.

In addition to Moore’s seminal role in founding two of the world’s pioneering technology companies, he famously forecast in 1965 that the number of transistors on an integrated circuit would double every year – a prediction that came to be known as Moore’s Law.

“All I was trying to do was get that message across, that by putting more and more stuff on a chip we were going to make all electronics cheaper,” Moore said in a 2008 interview.

After his 1965 prediction proved correct, Moore revised his estimate in 1975 to a doubling of transistors on an integrated circuit every two years for the next 10 years. Whatever the exact pace, the idea of chip technology growing at an exponential rate, continually making electronics faster, smaller, and cheaper, became the driving force behind the semiconductor industry and paved the way for the ubiquitous use of chips in millions of everyday products.

In 2022, Gelsinger announced that the Ronler Acres campus in Oregon, where Intel teams develop future process technologies, had been renamed Gordon Moore Park at Ronler Acres. The RA4 building, home to much of Intel's Technology Development Group, was also renamed The Moore Center, and its café was named The Gordon.

“I can think of no better way to honor Gordon and the profound impact he’s had on this company than by bestowing his name on this campus,” Gelsinger said at the event. “I hope we did you proud today, Gordon. And the world thanks you.”

Gordon Earle Moore was born in San Francisco on Jan. 3, 1929, to Walter Harold and Florence Almira “Mira” (Williamson) Moore. Moore was educated at San Jose State University, the University of California at Berkeley, and the California Institute of Technology, where he was awarded a Ph.D. in chemistry in 1954.

He started his research career at the Johns Hopkins Applied Physics Laboratory in Maryland. He returned to California in 1956 to join Shockley Semiconductor. In 1957, Moore co-founded Fairchild Semiconductor, a division of Fairchild Camera and Instrument, along with Robert Noyce and six other colleagues from Shockley Semiconductor. Eleven years later, Moore and Noyce co-founded Intel.

With Fairchild and Intel came financial success. Beginning with individual gifts, many of them anonymous, then forming the Moore Family Foundation, and eventually, in 2000, creating the Gordon and Betty Moore Foundation, Moore and his wife turned to philanthropy to make the world a better place for future generations. His passion for impact and measurement was a hallmark of his philanthropic work and aspirations.

He received the National Medal of Technology from President George H.W. Bush in 1990, and the Presidential Medal of Freedom, the nation’s highest civilian honor, from President George W. Bush in 2002.

After retiring from Intel in 2006, Moore divided his time between California and Hawaii, serving as chairman of the board for the Gordon and Betty Moore Foundation until transitioning to chairman emeritus in 2018. Moore also served as a member of the board of directors of Conservation International and Gilead Sciences, Inc. He was a member of the National Academy of Engineering, a Fellow of the Royal Society of Engineers, and a Fellow of the Institute of Electrical and Electronics Engineers. He served as chairman of the board of trustees of the California Institute of Technology from 1995 until the beginning of 2001 and continued as a Life Trustee.

In 1950, Moore married Betty Irene Whitaker, who survives him. Moore is also survived by sons Kenneth and Steven and four grandchildren.

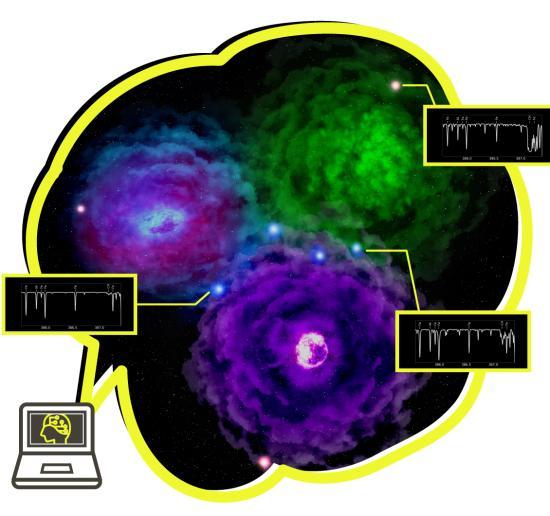

![Figure 2. Carbon vs. iron abundance of extremely metal-poor (EMP) stars. The colour bar shows the probability for mono-enrichment from our machine learning algorithm. Stars above the dashed lines (at [C/Fe] = 0.7) are called carbon-enhanced metal-poor (CEMP) stars and most of them are mono-enriched. (Credit: Hartwig et al.) Figure 2. Carbon vs. iron abundance of extremely metal-poor (EMP) stars. The colour bar shows the probability for mono-enrichment from our machine learning algorithm. Stars above the dashed lines (at [C/Fe] = 0.7) are called carbon-enhanced metal-poor (CEMP) stars and most of them are mono-enriched. (Credit: Hartwig et al.)](/images/図3_67128.jpg)