An array of 350 radio telescopes in the Karoo desert of South Africa is getting closer to detecting “cosmic dawn” — the era after the Big Bang when stars first ignited and galaxies began to bloom.

The Hydrogen Epoch of Reionization Array (HERA) team says that it has doubled the sensitivity of the array, which was already the most sensitive radio telescope in the world dedicated to exploring this unique period in the history of the universe.

While they have yet to detect radio emissions from the end of the cosmic dark ages, their results do provide clues to the composition of stars and galaxies in the early universe. In particular, their data suggest that early galaxies contained very few elements besides hydrogen and helium, unlike galaxies today.

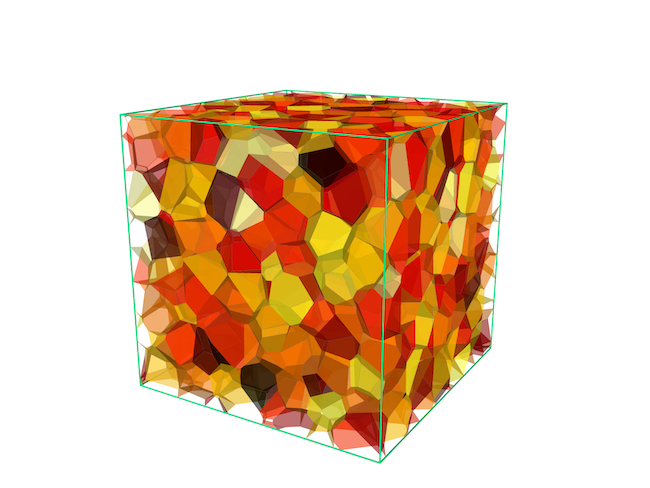

When the radio dishes are fully online and calibrated, ideally this fall, the team hopes to construct a 3D map of the bubbles of ionized and neutral hydrogen as they evolved from about 200 million years to around 1 billion years after the Big Bang. The map could tell us how early stars and galaxies differed from those we see around us today, and how the universe as a whole looked in its adolescence.

“This is moving toward a potentially revolutionary technique in cosmology. Once you can get down to the sensitivity you need, there’s so much information in the data,” said Joshua Dillon, a research scientist in the University of California, Berkeley’s Department of Astronomy. “A 3D map of most of the luminous matter in the universe is the goal for the next 50 years or more.”

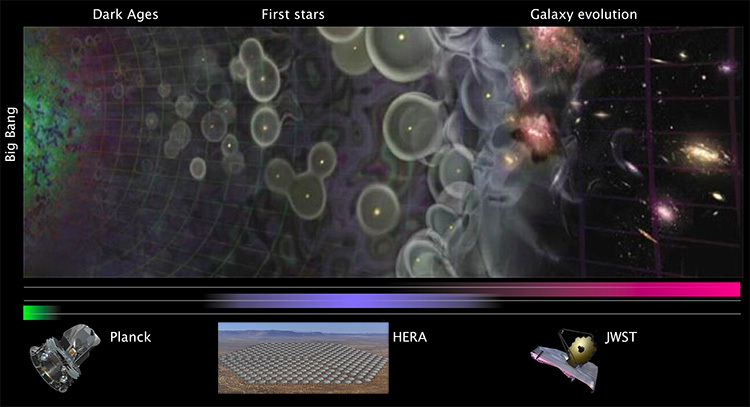

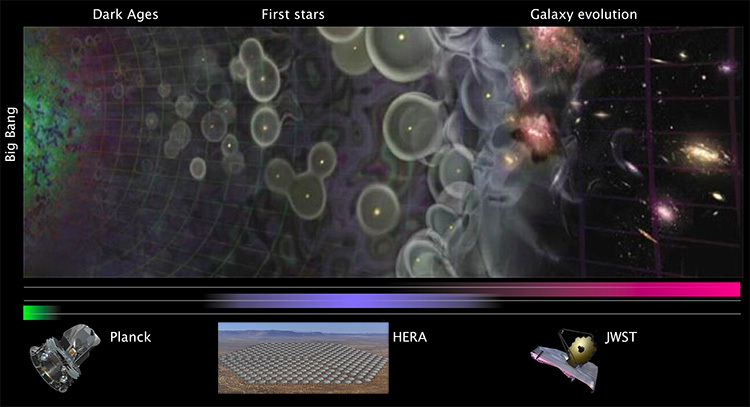

Other telescopes also are peering into the early universe. The new James Webb Space Telescope (JWST) has now imaged a galaxy that existed about 325 million years after the birth of the universe in the Big Bang. But the JWST can see only the brightest of the galaxies that formed during the Epoch of Reionization, not the smaller but far more numerous dwarf galaxies whose stars heated the intergalactic medium and ionized most of the hydrogen gas.

HERA seeks to detect radiation from the neutral hydrogen that filled the space between those early stars and galaxies and, in particular, determine when that hydrogen stopped emitting or absorbing radio waves because it became ionized.

The fact that the HERA team has not yet detected these bubbles of ionized hydrogen within the cold hydrogen of the cosmic dark age rules out some theories of how stars evolved in the early universe.

Specifically, the data show that the earliest stars, which may have formed around 200 million years after the Big Bang, contained few elements other than hydrogen and helium. This is different from the composition of today’s stars, which have a variety of so-called metals, the astronomical term for elements, ranging from lithium to uranium, that are heavier than helium. The finding is consistent with the current model for how stars and stellar explosions produced most of the other elements.

“Early galaxies have to have been significantly different than the galaxies that we observe today in order for us not to have seen a signal,” said Aaron Parsons, principal investigator for HERA and a UC Berkeley associate professor of astronomy. “In particular, their X-ray characteristics have to have changed. Otherwise, we would have detected the signal we’re looking for.”

The atomic composition of stars in the early universe determined how long it took to heat the intergalactic medium once stars began to form. Key to this is the high-energy radiation, primarily X-rays, produced by binary stars where one of them has collapsed into a black hole or neutron star and is gradually eating its companion. With few heavy elements, a lot of the companion’s mass is blown away instead of falling onto the black hole, meaning fewer X-rays and less heating of the surrounding region.

The new data fit the most popular theories of how stars and galaxies first formed after the Big Bang, but not others. Preliminary results from the first analysis of HERA data, reported a year ago, hinted that those alternatives, specifically cold reionization, were unlikely.

“Our results require that even before reionization and by as late as 450 million years after the Big Bang, the gas between galaxies must have been heated by X-rays. These likely came from binary systems where one star is losing mass to a companion black hole,” Dillon said. “Our results show that if that’s the case, those stars must have been very low ‘metallicity,’ that is, very few elements other than hydrogen and helium in comparison to our sun, which makes sense because we’re talking about a period in time in the universe before most of the other elements were formed.”

The Epoch of Reionization

The origin of the universe in the Big Bang 13.8 billion years ago produced a hot cauldron of energy and elementary particles that cooled for hundreds of thousands of years before protons and electrons combined to form atoms — primarily hydrogen and helium. Looking at the sky with sensitive telescopes, astronomers have mapped in detail the faint variations in temperature from this moment — what’s known as the cosmic microwave background — a mere 380,000 years after the Big Bang.

Aside from this relict heat radiation, however, the early universe was dark. As the universe expanded, the clumpiness of matter seeded galaxies and stars, which in turn produced radiation — ultraviolet and X-rays — that heated the gas between stars. At some point, hydrogen began to ionize — it lost its electron — and formed bubbles within the neutral hydrogen, marking the beginning of the Epoch of Reionization.

To map these bubbles, HERA and several other experiments are focused on a wavelength of light that neutral hydrogen absorbs and emits, but ionized hydrogen does not. Called the 21-centimeter line (a frequency of 1,420 megahertz), it is produced by the hyperfine transition, during which the spins of the electron and proton flip from parallel to antiparallel. Ionized hydrogen, which has lost its only electron, doesn’t absorb or emit this radio frequency.

Since the Epoch of Reionization, the 21-centimeter line has been red-shifted by the expansion of the universe to a wavelength 10 times as long — about 2 meters, or 6 feet. HERA’s rather simple antennas, a construct of chicken wire, PVC pipe, and telephone poles, are 14 meters across to collect and focus this radiation onto detectors.
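The figures quoted above follow from the relation wavelength = speed of light / frequency. As a rough check, the Python sketch below converts the hydrogen line's rest frequency into a wavelength and then applies the tenfold stretch mentioned in the text. The constant and function names are illustrative only, and the factor of 10 is the article's round number rather than a measured value.

```python
# Speed of light in vacuum, in meters per second (exact by definition).
C_M_PER_S = 299_792_458.0

# Rest frequency of the neutral-hydrogen hyperfine line, about 1,420 MHz.
HI_REST_FREQ_HZ = 1.420405751768e9

# Round stretch factor quoted in the article (assumption: exactly 10).
STRETCH_FACTOR = 10.0


def wavelength_m(frequency_hz: float) -> float:
    """Convert a frequency in hertz to a vacuum wavelength in meters."""
    return C_M_PER_S / frequency_hz


# Wavelength at emission: roughly 0.21 m, hence the "21-centimeter line".
rest_wavelength_m = wavelength_m(HI_REST_FREQ_HZ)

# Expansion stretches the wavelength and lowers the frequency by the same factor.
observed_wavelength_m = rest_wavelength_m * STRETCH_FACTOR
observed_freq_mhz = HI_REST_FREQ_HZ / STRETCH_FACTOR / 1e6

if __name__ == "__main__":
    print(f"rest wavelength:     {rest_wavelength_m * 100:.1f} cm")
    print(f"observed wavelength: {observed_wavelength_m:.2f} m")
    print(f"observed frequency:  {observed_freq_mhz:.0f} MHz")
```

A tenfold stretch puts the signal near 142 MHz, a band crowded with terrestrial radio sources, including FM broadcasts below about 108 MHz.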

“At two meters wavelength, a chicken wire mesh is a mirror,” Dillon said. “And all the sophisticated stuff, so to speak, is in the supercomputer backend and all of the data analysis that comes after that.”

The new analysis is based on 94 nights of observing in 2017 and 2018 with about 40 antennas — phase 1 of the array. Last year’s preliminary analysis was based on 18 nights of phase 1 observations.

The new paper’s main result is that the HERA team has improved the sensitivity of the array by a factor of 2.1 for light emitted about 650 million years after the Big Bang (a redshift of 7.9, meaning its wavelength has since been stretched by a factor of 8.9), and 2.6 for radiation emitted about 450 million years after the Big Bang (a redshift of 10.4).
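The pairing of redshifts with cosmic ages can be checked with a short calculation. The Python sketch below is not the HERA team's analysis. It uses the standard closed-form age of a flat universe containing only matter and a cosmological constant, with an assumed Hubble constant of 67.7 km/s/Mpc and a matter fraction of 0.31. Those two parameters are assumptions not stated in the article, and the formula neglects radiation, so the ages are approximate. A redshift z stretches wavelengths by a factor of 1 + z, which also gives the frequency at which the 21-centimeter line arrives.

```python
import math

HI_REST_FREQ_MHZ = 1420.405751768   # rest frequency of the hydrogen line

# Assumed cosmological parameters (not given in the article).
H0_KM_S_MPC = 67.7                  # Hubble constant
OMEGA_M = 0.31                      # matter fraction
OMEGA_L = 1.0 - OMEGA_M             # cosmological constant, flat universe

KM_PER_MPC = 3.0856775814913673e19  # kilometers in one megaparsec
SEC_PER_GYR = 3.15576e16            # seconds in a billion Julian years


def observed_freq_mhz(z: float) -> float:
    """Frequency at which the 21-cm line arrives after redshift z."""
    return HI_REST_FREQ_MHZ / (1.0 + z)


def age_gyr(z: float) -> float:
    """Approximate age of the universe at redshift z, in billions of years.

    Closed-form result for flat matter-plus-Lambda cosmology, radiation ignored.
    """
    hubble_time_gyr = KM_PER_MPC / H0_KM_S_MPC / SEC_PER_GYR
    x = math.sqrt(OMEGA_L / OMEGA_M) * (1.0 + z) ** -1.5
    return 2.0 / (3.0 * math.sqrt(OMEGA_L)) * hubble_time_gyr * math.asinh(x)


if __name__ == "__main__":
    for z in (7.9, 10.4):
        print(f"z = {z}: about {age_gyr(z) * 1000:.0f} million years after "
              f"the Big Bang, observed near {observed_freq_mhz(z):.0f} MHz")
```

Under these assumptions the two redshifts come out close to the 650 million and 450 million years quoted above, and setting z to 0 returns a present-day age of about 13.8 billion years.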

The HERA team continues to improve the telescope’s calibration and data analysis in hopes of seeing those bubbles in the early universe, which are about 1 millionth the intensity of the radio noise in the neighborhood of Earth. Filtering out the local radio noise to see the radiation from the early universe has not been easy.

“If it’s Swiss cheese, the galaxies make the holes, and we’re looking for the cheese,” said David DeBoer, a research astronomer in UC Berkeley’s Radio Astronomy Laboratory, of a search that has so far been unsuccessful.

Extending that analogy, however, Dillon noted, “What we’ve done is we’ve said the cheese must be warmer than if nothing had happened. If the cheese were really cold, it turns out it would be easier to observe that patchiness than if the cheese were warm.”

That mostly rules out cold reionization theory, which posited a colder starting point. The HERA researchers suspect, instead, that the X-rays from X-ray binary stars heated up the intergalactic medium first.

“The X-rays will effectively heat up the whole block of cheese before the holes will form,” Dillon said. “And those holes are the ionized bits.”

“HERA is continuing to improve and set better and better limits,” Parsons said. “The fact that we’re able to keep pushing through, and we have new techniques that are continuing to bear fruit for our telescope, is great.”

The HERA collaboration is led by UC Berkeley and includes scientists from across North America, Europe, and South Africa. The construction of the array is funded by the National Science Foundation and the Gordon and Betty Moore Foundation, with key support from the government of South Africa and the South African Radio Astronomy Observatory (SARAO).
