- Hurricanes that make landfall typically decay but sometimes transition into extratropical cyclones and re-intensify, causing widespread damage to inland communities

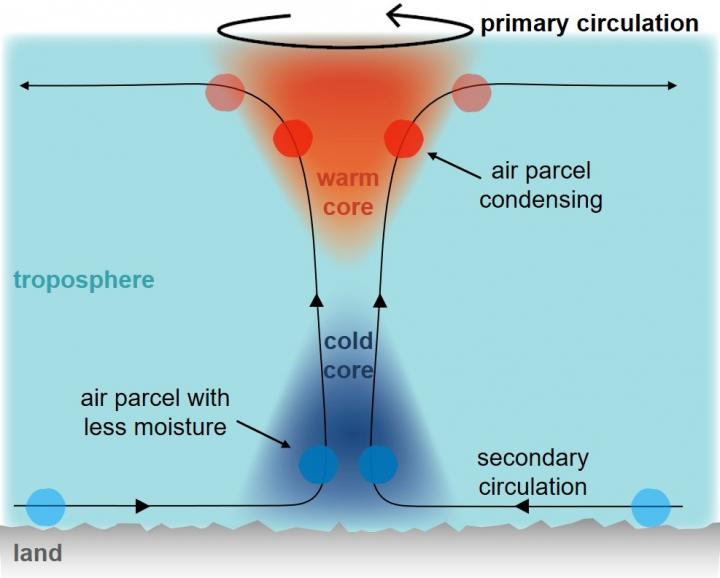

- The presence of a cold core is currently used to identify this transition, but a new study has found that a cold core forms naturally in all landfalling hurricanes

- The cold core was detected when scientists ran simulations of landfalling hurricanes that accounted for moisture stored within the cyclone

- Over time, the scientists saw a cold core growing from the bottom of the hurricane, replacing the warm core

- The research could help forecasters predict more accurately whether communities farther inland will be affected by these extreme weather events

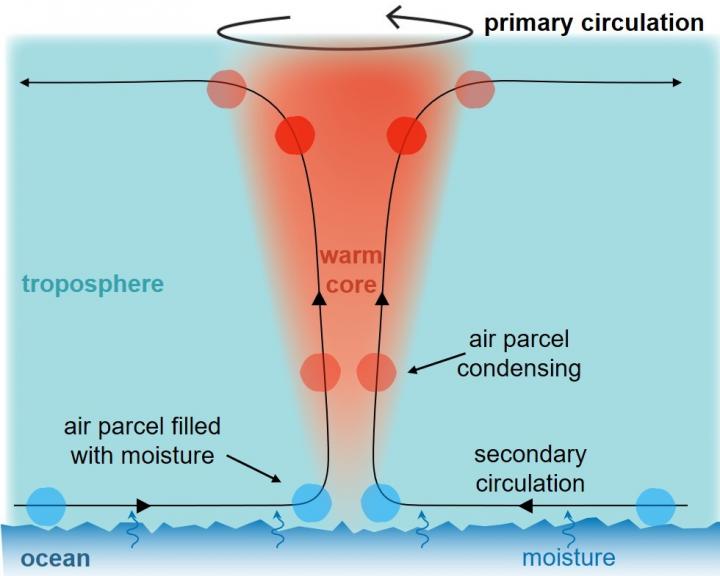

Hurricanes are powerful weather events born in the open sea. Fueled by moisture from the warm ocean, hurricanes can intensify in strength, move vast distances across the water, and ultimately unleash their destruction upon the land. But what happens to hurricanes after they've made landfall remains an open question.

Now, a recent study in Physical Review Fluids has used simulations to explore the fate of landfalling hurricanes. The scientists found that after landfall, the warm, dynamic heart of a hurricane is replaced by a growing cold core - an unexpected finding that could help forecasters predict the level of extreme weather that communities farther inland may face.

"Generally, if a hurricane hits land, it weakens and dies," said Professor Pinaki Chakraborty, senior author and head of the Fluid Mechanics Unit at the Okinawa Institute of Science and Technology Graduate University (OIST). "But sometimes, a hurricane can intensify again deep inland, creating a lot of destruction, like flooding, in communities far away from the coast. So, predicting the course that a hurricane will take is crucial."

These re-intensification events occur when hurricanes, which are tropical cyclones and are called typhoons in some other regions of the world, transition into extratropical cyclones: storms that occur outside the Earth's tropics. Unlike tropical cyclones, which draw their strength from ocean moisture, extratropical cyclones draw their energy from unstable conditions in the surrounding atmosphere. This instability comes in the form of weather fronts - boundaries that separate warmer, lighter air from colder, denser air.

"Weather fronts are always unstable, but the release of energy is typically very slow. When a hurricane comes, it can disturb the front and trigger a faster release of energy that allows the storm to intensify again," said first author Dr. Lin Li, a former Ph.D. student in Prof. Chakraborty's unit.

However, predicting whether this transition will occur is challenging for weather forecasters, as hurricanes must interact with the front in a specific and complex way. Currently, forecasters use one key characteristic to objectively identify this transition: the presence of a cold core within a landfalling hurricane, caused by an inward rush of cold air from the weather front.

But when Prof. Chakraborty and Dr. Li simulated what happens to hurricanes after they hit land, they found that a cold core was present in all landfalling hurricanes, growing upwards from the bottom of the hurricanes as they decayed, even in a stable atmosphere with no weather fronts.

"This appears to be a natural consequence of when a hurricane makes landfall and starts to decay," said Dr. Li.

Previous theoretical models of landfalling hurricanes missed the growing cold core because they did not account for the moisture stored within landfalling hurricanes, the researchers explained.

Prof. Chakraborty said, "Once hurricanes move over land and lose their moisture supply, models typically viewed them as just a spinning, dry vortex of air, which, like swirling tea in a cup, rubs over the surface of the land and slows down due to friction."

However, the store of moisture within landfalling hurricanes means that thermodynamics still plays a critical role in how they decay.

In hurricanes over a warm ocean, the air that enters the hurricane is laden with moisture. As this air rises, it expands and cools, which lowers the amount of water vapor each "parcel" of air can hold. The water vapor within each air parcel therefore condenses, releasing heat. As a result, these air parcels cool more slowly than the surrounding air outside the hurricane, generating a warm core.
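The effect of condensation on how fast a rising parcel cools can be illustrated with standard textbook formulas. The sketch below is not the model used in the study; it compares the dry adiabatic lapse rate with the saturated (moist) adiabatic lapse rate, using a Magnus-type approximation for saturation vapor pressure. The function names, the constants chosen, and the sample surface conditions (300 K, 1000 hPa) are all assumptions made for illustration.

```python
import math

# Approximate physical constants (illustrative textbook values)
G = 9.81          # gravitational acceleration, m/s^2
CP_DRY = 1004.0   # specific heat of dry air at constant pressure, J/(kg K)
R_DRY = 287.0     # gas constant for dry air, J/(kg K)
R_VAPOR = 461.5   # gas constant for water vapor, J/(kg K)
L_VAPOR = 2.5e6   # latent heat of vaporization, J/kg
EPSILON = R_DRY / R_VAPOR


def saturation_vapor_pressure_hpa(temp_k):
    """Magnus-type approximation for saturation vapor pressure over water (hPa)."""
    temp_c = temp_k - 273.15
    return 6.112 * math.exp(17.67 * temp_c / (temp_c + 243.5))


def dry_lapse_rate():
    """Cooling rate of a rising unsaturated parcel, in K per metre."""
    return G / CP_DRY


def moist_lapse_rate(temp_k, pressure_hpa):
    """Cooling rate of a rising saturated parcel, in K per metre.

    Condensation releases latent heat, so this is smaller than the dry rate.
    """
    e_s = saturation_vapor_pressure_hpa(temp_k)
    r_s = EPSILON * e_s / (pressure_hpa - e_s)  # saturation mixing ratio, kg/kg
    numerator = 1.0 + L_VAPOR * r_s / (R_DRY * temp_k)
    denominator = CP_DRY + L_VAPOR ** 2 * r_s / (R_VAPOR * temp_k ** 2)
    return G * numerator / denominator


if __name__ == "__main__":
    # Hypothetical warm, humid near-surface conditions
    dry = dry_lapse_rate() * 1000.0
    moist = moist_lapse_rate(300.0, 1000.0) * 1000.0
    print(f"dry parcel cools by about {dry:.1f} K per km")
    print(f"saturated parcel cools by about {moist:.1f} K per km")
```

For warm, humid conditions like these, the saturated parcel cools at well under half the dry rate, which is why air rising inside a moisture-fed hurricane ends up warmer than it would without condensation.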

But once a hurricane hits land, the air entering the hurricane contains less moisture. As these air parcels rise, they must travel higher before they reach a temperature cool enough for the water vapor to condense, delaying the release of heat. As a result, the bottom of the hurricane, where all the air parcels are moving upwards, is cooler than the surrounding atmosphere, where air parcels move randomly in all directions. This produces a cold core.

"As the hurricane keeps decaying, it eats up more and more of the moisture stored within the hurricane, so the air parcels must rise even higher before condensation occurs," said Dr. Li. "So over time, the cold core grows and the warm core shrinks."
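The link between drier air and a higher condensation level can be sketched with two common rules of thumb: the Magnus formula for dew point and Espy's approximation that the condensation height is about 125 m per degree of difference between temperature and dew point. This is a rough illustration, not the researchers' simulation; the function names and the sample temperature and humidity values are hypothetical.

```python
import math

# Magnus coefficients for dew point over water (a common parameter choice)
MAGNUS_A = 17.625
MAGNUS_B = 243.04  # degrees Celsius


def dew_point_c(temp_c, rel_humidity_pct):
    """Dew point (deg C) from air temperature and relative humidity (Magnus formula)."""
    gamma = math.log(rel_humidity_pct / 100.0) + MAGNUS_A * temp_c / (MAGNUS_B + temp_c)
    return MAGNUS_B * gamma / (MAGNUS_A - gamma)


def condensation_height_m(temp_c, rel_humidity_pct):
    """Approximate height (m) a parcel must rise before condensation starts.

    Uses Espy's rule of thumb: about 125 m per degree of dew point depression.
    """
    return 125.0 * (temp_c - dew_point_c(temp_c, rel_humidity_pct))


if __name__ == "__main__":
    # Hypothetical near-surface air at 28 deg C with decreasing moisture content
    for rh in (95.0, 85.0, 70.0):
        height = condensation_height_m(28.0, rh)
        print(f"relative humidity {rh:.0f}%: condensation begins near {height:.0f} m")
```

As the relative humidity of the inflowing air falls, the estimated condensation height rises, so the layer in which parcels cool without any latent heating becomes deeper, in line with the growing cold core described above.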

The researchers hope that a better understanding of cold cores could help forecasters more accurately distinguish between decaying hurricanes and ones transitioning into extratropical cyclones.

"It's no longer as simple as hurricanes having a warm core and extratropical cyclones having a cold core," said Prof. Chakraborty. "But in decaying hurricanes, the cold core we see is restricted to the lower half of the cyclone, whereas in an extratropical cyclone, the cold core spans the whole hurricane - that's the signature that forecasters need to look for."
