New method developed for generating quantum-entangled photons in a previously inaccessible spectral range of light

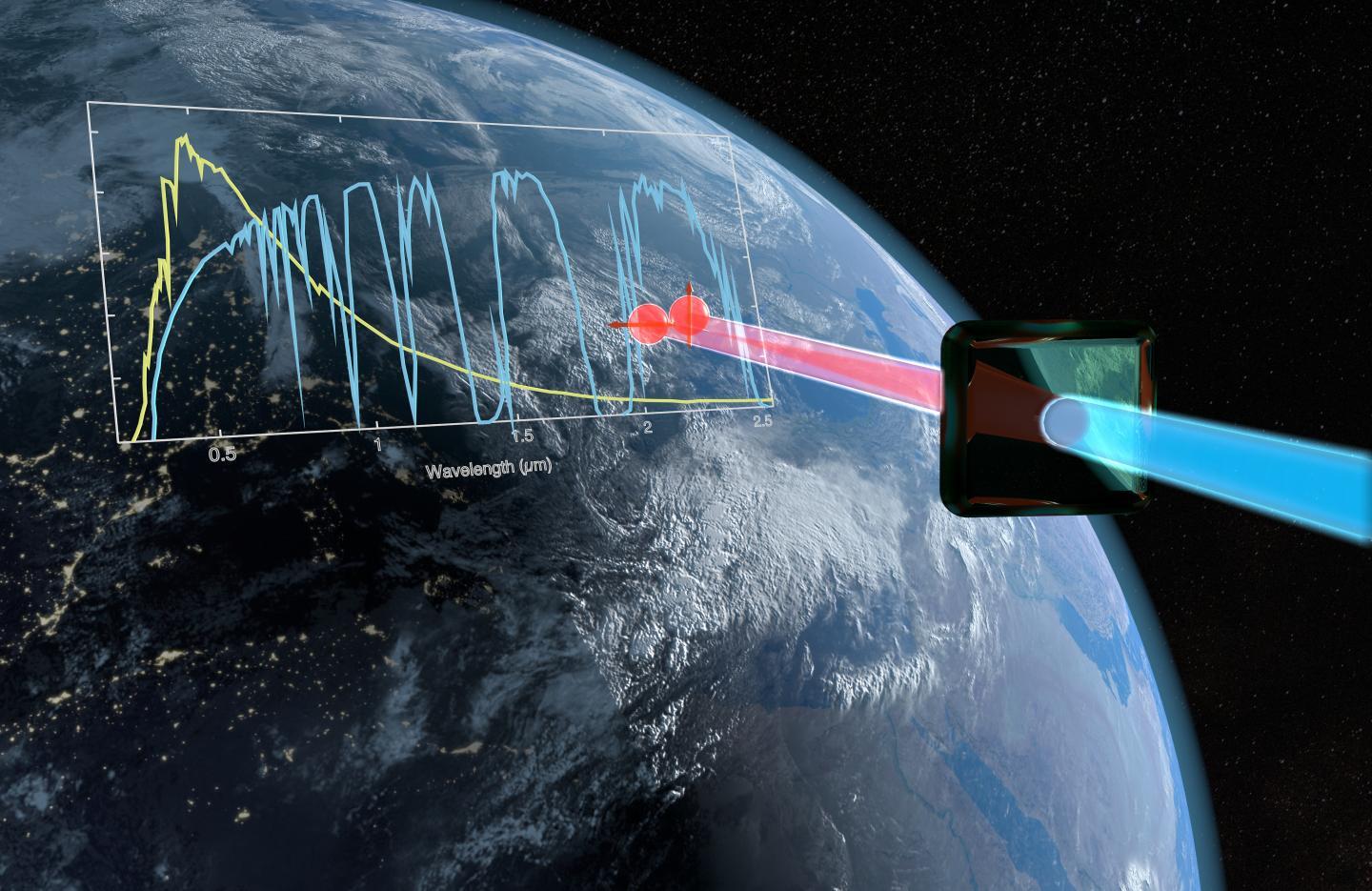

An international team with the participation of Prof. Dr. Michael Kues from the Cluster of Excellence PhoenixD at Leibniz University Hannover has developed a new method for generating quantum-entangled photons in a spectral range of light that was previously inaccessible. The discovery could make satellite-based communication far more secure in the future.

A 15-member research team from the UK, Germany, and Japan has developed a new method for generating and detecting quantum-entangled photons at a wavelength of 2.1 micrometers. In practice, entangled photons are used in encryption methods such as quantum key distribution to fully secure telecommunications between two parties against eavesdropping. The research results are being presented to the public for the first time in an academic journal.

Until now, it has only been technically possible to implement such encryption mechanisms with entangled photons in the near-infrared range of 700 to 1550 nanometers. However, these shorter wavelengths have disadvantages, especially in satellite-based communication: they are disturbed by light-absorbing gases in the atmosphere and by background radiation from the sun. With existing technology, end-to-end encryption of transmitted data can therefore only be guaranteed at night, not on sunny or cloudy days.

The international team, led by Dr. Matteo Clerici of the University of Glasgow, aims to solve this problem with its discovery. Photon pairs entangled at a wavelength of two micrometers would be significantly less affected by solar background radiation, says Prof. Dr. Michael Kues from the PhoenixD Cluster of Excellence at Leibniz University Hannover. In addition, Earth's atmosphere has so-called transmission windows, in particular around two micrometers, in which the photons are absorbed less by atmospheric gases, allowing more effective communication.
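To make the solar-background argument more concrete, the sketch below uses Planck's law to compare the number of solar photons per unit wavelength at the edges of the established near-infrared band and at 2.1 micrometers. It assumes the Sun is an ideal blackbody at about 5778 K and ignores atmospheric absorption and scattering, so it only indicates the trend, not the background a real receiver would see. The function names and the `T_SUN` constant are illustrative, not taken from the research.

```python
import math

# Physical constants (SI units, exact CODATA values)
H = 6.62607015e-34   # Planck constant, J*s
C = 2.99792458e8     # speed of light, m/s
K_B = 1.380649e-23   # Boltzmann constant, J/K

# Assumption: the Sun is modeled as an ideal blackbody at ~5778 K.
T_SUN = 5778.0


def spectral_radiance(wavelength_m: float, temperature_k: float) -> float:
    """Planck's law: blackbody spectral radiance in W / (sr * m^2 * m)."""
    x = H * C / (wavelength_m * K_B * temperature_k)
    # expm1(x) = exp(x) - 1, computed accurately
    return (2.0 * H * C**2 / wavelength_m**5) / math.expm1(x)


def photon_radiance(wavelength_m: float, temperature_k: float) -> float:
    """Spectral photon radiance: energy radiance divided by the photon energy h*c/lambda."""
    return spectral_radiance(wavelength_m, temperature_k) * wavelength_m / (H * C)


if __name__ == "__main__":
    reference = photon_radiance(2.1e-6, T_SUN)
    for nm in (700, 1550, 2100):
        ratio = photon_radiance(nm * 1e-9, T_SUN) / reference
        print(f"{nm:5d} nm: {ratio:5.2f}x the solar photon background at 2100 nm")
```

Run as a script, this simplified model gives roughly five times more solar photons per unit wavelength at 700 nm, and roughly twice as many at 1550 nm, as at 2100 nm. Photons rather than energy are counted because single-photon detectors register individual photon arrivals, so stray sunlight competes with the signal photon by photon.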

For their experiment, the researchers used a nonlinear crystal made of lithium niobate. They sent ultrashort laser pulses into the crystal, where a nonlinear interaction produced entangled photon pairs at a new wavelength of 2.1 micrometers.
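The article does not name the nonlinear process. A common way to generate entangled photon pairs in lithium niobate is spontaneous parametric down-conversion (SPDC), in which one pump photon splits into two lower-energy photons. Assuming that process (an assumption, not stated in the source), energy conservation links the pump and pair wavelengths, as the minimal sketch below shows. For a degenerate pair with both photons at 2.1 micrometers it implies a pump near 1.05 micrometers; this value follows from the assumption and is not a laser parameter reported by the team. Phase matching in the crystal, which determines which wavelength combinations are actually produced, is not modeled, and the helper names are hypothetical.

```python
# Assumption: pairs come from spontaneous parametric down-conversion (SPDC).
# Energy conservation per pump photon: 1/lambda_p = 1/lambda_s + 1/lambda_i
# (signal and idler wavelengths in micrometers).


def pump_wavelength(signal_um: float, idler_um: float) -> float:
    """Pump wavelength (um) whose photon energy equals the signal + idler energies."""
    return 1.0 / (1.0 / signal_um + 1.0 / idler_um)


def idler_wavelength(pump_um: float, signal_um: float) -> float:
    """Idler wavelength (um) left over once a signal photon takes part of the pump energy."""
    inv = 1.0 / pump_um - 1.0 / signal_um
    if inv <= 0:
        raise ValueError("signal wavelength must be longer than the pump wavelength")
    return 1.0 / inv


if __name__ == "__main__":
    lam_p = pump_wavelength(2.1, 2.1)  # degenerate case: both photons at 2.1 um
    print(f"pump for a degenerate 2.1 um pair: {lam_p:.2f} um")
    # Non-degenerate example: a slightly shorter signal forces a longer idler.
    print(f"idler if the signal is at 2.0 um: {idler_wavelength(lam_p, 2.0):.3f} um")
```

In the degenerate case each photon carries exactly half the pump photon's energy, which is why the pair wavelength is twice the pump wavelength.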

The published results describe the experimental system in detail and how the entangled photon pairs were verified: "The next crucial step will be to miniaturize this system by converting it into photonic integrated devices, making it suitable for mass production and for the use in other application scenarios", says Kues.

After completing his physics studies and doctorate at the Westfälische Wilhelms University of Münster, Kues worked at the Institut National de la Recherche Scientifique - Centre Énergie Matériaux et Télécommunications (Canada), where he led the research group "Nonlinear integrated quantum optics" for four years. He then moved to the University of Glasgow and joined Dr. Matteo Clerici's international team. Since spring 2019, Kues has been a professor at the Hannover Centre for Optical Technologies (HOT) at Leibniz Universität Hannover, where, within the PhoenixD Cluster of Excellence, he researches novel photonic quantum technologies based on micro- and nanophotonic approaches. Kues plans to expand his five-member research team.
