Extensively used grassland is host to a high degree of biodiversity, performs an important climate protection function as a carbon sink, and also serves for fodder and food production. However, these ecosystem services are jeopardized if productivity on these lands is maximized and their use therefore intensified. Until now, data on the condition of the meadows and pastures in Germany have been unavailable for larger areas. In the journal Remote Sensing of Environment, researchers at the Helmholtz Centre for Environmental Research (UFZ) have now described how satellite data and machine learning methods enable the assessment of land-use intensity.

The Sentinel-2 space mission began with the launch of the Earth observation satellite Sentinel-2A in June 2015, and Sentinel-2B followed in March 2017. Since then, the two satellites have been orbiting at an altitude of nearly 800 kilometers and, as part of the European Space Agency's (ESA) Copernicus program, providing data for purposes such as climate protection and land monitoring. Every three to five days, they record images in the visible and infrared range of the electromagnetic spectrum. With a very high resolution of up to 10 meters, these images provide a strong foundation for detecting features such as changes in vegetation. An interdisciplinary team of researchers from the Helmholtz Centre for Environmental Research (UFZ) has used these freely accessible data to study the land-use intensity of German grasslands for the years 2017 and 2018. According to the Federal Statistical Office, these grasslands cover roughly 4.7 million hectares and hence nearly 30 percent of all agricultural land. "We need more information on the land-use intensity of grasslands to better understand the stability and functioning of our ecosystems. The more intensively grassland is used, the greater the influence on primary production, nitrogen deposition, and resilience to climate changes," says lead author Dr. Maximilian Lange. He is a scientist in the UFZ Department of Remote Sensing, which is embedded in the "Remote Sensing Centre for Earth System Research" jointly funded by the UFZ and the University of Leipzig.

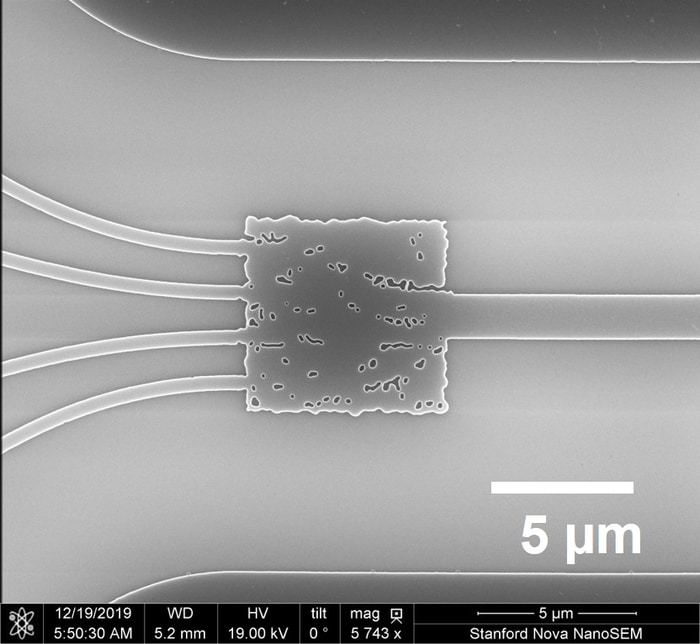

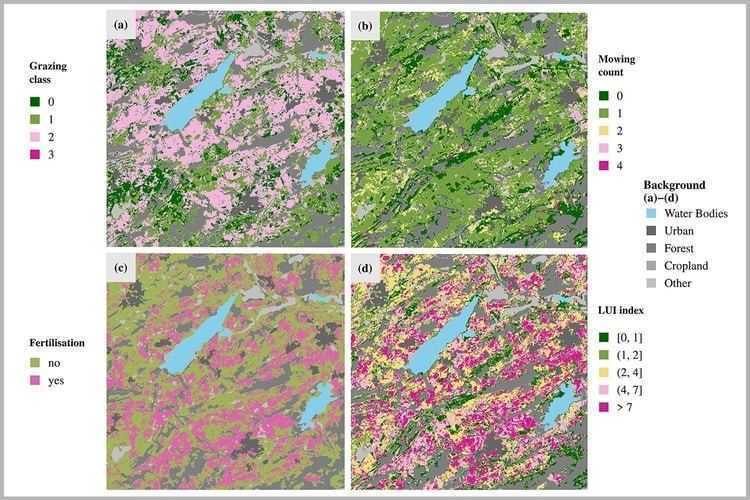

Long-term preservation of grassland requires some form of management, such as cutting or grazing. If left unused, the areas become overgrown with shrubs. The intensity of that management, however, is critical to the grassland's ability to provide ecosystem services, and no Germany-wide data are publicly available on how farmers manage their grasslands. The UFZ scientist has now used the satellite data at a resolution of 20 meters to draw inferences about mowing frequency, grazing intensity by cattle, horses, sheep, and goats, and fertilization in Germany. "The magnitude of these three types of management is critical to the intensity of use," says Lange. He defined mowing frequency classes from 0 (not mowed) to 5 (mowed five times per year) and calculated a grazing intensity on a scale from 0 to 3 (heavily grazed) based on a mix of livestock numbers, species, and age. For fertilization, he distinguished between fertilized and not fertilized. He combined these three categories to derive an index that indicates the management intensity of a grassland area, ranging from "extensive" to "intensive."
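How three such categories might be folded into a single index can be sketched in a few lines of Python. The article does not give the formula used in the study, so the equal weighting, the rescaling to the range 0 to 1, and all names below (`GrasslandManagement`, `intensity_index`) are assumptions made purely for illustration.

```python
from dataclasses import dataclass

MAX_MOWING = 5   # mowing classes run from 0 (not mowed) to 5 cuts per year
MAX_GRAZING = 3  # grazing intensity runs from 0 to 3 (heavily grazed)


@dataclass(frozen=True)
class GrasslandManagement:
    """Hypothetical container for the three management parameters."""
    mowing: int       # mowing frequency class, 0..5
    grazing: float    # grazing intensity, 0..3
    fertilized: bool  # fertilized or not fertilized


def intensity_index(m: GrasslandManagement) -> float:
    """Return an illustrative index in [0, 1].

    ASSUMPTION: each component is rescaled to [0, 1] and the three are
    averaged with equal weight. The study's actual index may differ.
    0 corresponds to "extensive", 1 to "intensive".
    """
    if not 0 <= m.mowing <= MAX_MOWING:
        raise ValueError("mowing class must be between 0 and 5")
    if not 0 <= m.grazing <= MAX_GRAZING:
        raise ValueError("grazing intensity must be between 0 and 3")
    components = (
        m.mowing / MAX_MOWING,
        m.grazing / MAX_GRAZING,
        1.0 if m.fertilized else 0.0,
    )
    return sum(components) / len(components)
```

Under these assumptions, an unmanaged plot scores 0 and a fertilized, heavily grazed plot mowed five times a year scores 1; everything else falls in between.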

He used artificial intelligence (AI) to derive information on the three usage parameters based on the multi-dimensional data the researchers obtained from the satellite images. "AI can very efficiently derive information from data that are too complex for humans to comprehend. Reference data can be used to train machine learning algorithms to identify patterns in the satellite data that we can then evaluate and apply to infer conclusions for large areas," he says. Lange obtained the reference data from the field data of three Biodiversity Exploratories sponsored by the German Research Foundation (DFG) in Hainich, Schorfheide, and the Schwäbische Alb. Various experiments have been ongoing there since 2006 in long-term studies on grassland with different levels of land-use intensity. These experiments investigate topics such as how land use affects biodiversity and the effects of changes in species composition on ecosystem processes.

Lange used two algorithms to evaluate how accurately machine learning recognizes actual grassland use from the satellite data: Random Forest, a standard remote sensing method for classifying land cover, and convolutional neural networks (CNN), a deep learning method primarily used in image processing. The result: "Both methods do a good job of representing reality, and the CNN method is slightly better," he says. For the example year 2018, the CNN method reproduced the data from the DFG Biodiversity Exploratories with accuracies ranging from 66 to 85 percent (grazing intensity 66 percent, mowing regime 68 percent, fertilization 85 percent). The Random Forest results were slightly lower for all three parameters. This is a high classification accuracy compared with similar ecological remote sensing studies, but it could be improved still further if more data on grassland use were available. "The more data that can be used to train a deep learning method and the more accurate these data are, the more precise the results will be," says Lange. In a further step, he tested the results’ plausibility in four example regions in Germany. Two of these regions (Oberallgäu and Dithmarschen) are known for their intensive grassland use, while one near the Rhön Biosphere Reserve sees only moderate use and the other, a nature reserve in Saxony-Anhalt, is only used extensively. This comparison also yielded a good match between the remote sensing-based results and the actual data.
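For readers unfamiliar with how such percentages are obtained, the following Python sketch shows one common way to score a classifier against reference plots. It assumes the reported figures are overall accuracy, that is, the share of reference plots assigned the correct class, which the article does not spell out. The function name and the sample values are invented for illustration and are not data from the study.

```python
from typing import Sequence


def overall_accuracy(reference: Sequence[int], predicted: Sequence[int]) -> float:
    """Share of plots whose predicted class equals the reference class.

    ASSUMPTION: this is the plain "overall accuracy" metric; the study
    may have used a different or additional measure.
    """
    if len(reference) != len(predicted):
        raise ValueError("reference and predicted must have the same length")
    if not reference:
        raise ValueError("at least one plot is required")
    matches = sum(r == p for r, p in zip(reference, predicted))
    return matches / len(reference)


# Invented example: mowing classes for five hypothetical reference plots.
reference_classes = [0, 1, 2, 2, 3]
predicted_classes = [0, 1, 2, 3, 3]
example_accuracy = overall_accuracy(reference_classes, predicted_classes)
```

In this invented example four of the five plots are classified correctly, so `example_accuracy` is 0.8, or 80 percent.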

Overall, the UFZ team found that grassland was used less intensively in Germany in 2018 than in 2017. "This is primarily due to the drought in 2018 and the associated loss of grassland productivity," says Dr. Daniel Doktor, last author of the publication and head of the UFZ Land Cover & Dynamics Working Group. For example, the calculations show that 64 percent of the grassland was not mowed in 2018, compared with only 36 percent in 2017. "The results also show the management differences across Germany. Management often is very intensive in regions such as Allgäu or Schleswig-Holstein, while it is far more extensive in Brandenburg or parts of Saxony," he says. But this evaluation is only the beginning. More precise management data from further regions of Germany are needed before the machine learning algorithms can support even more precise conclusions.
