Far from Earth, beneath the tranquil blue atmospheres of Neptune and Uranus, exists a realm unreachable by spacecraft and impossible to replicate in the lab. Here, pressures soar to millions of times that of Earth’s atmosphere at sea level, and temperatures exceed those of molten lava. Now, new research suggests this environment may harbor an entirely new state of matter.

What makes this discovery remarkable is not just what was found, but how it was found.

Through the power of supercomputing and machine learning.

A hidden state of matter, computed, not observed

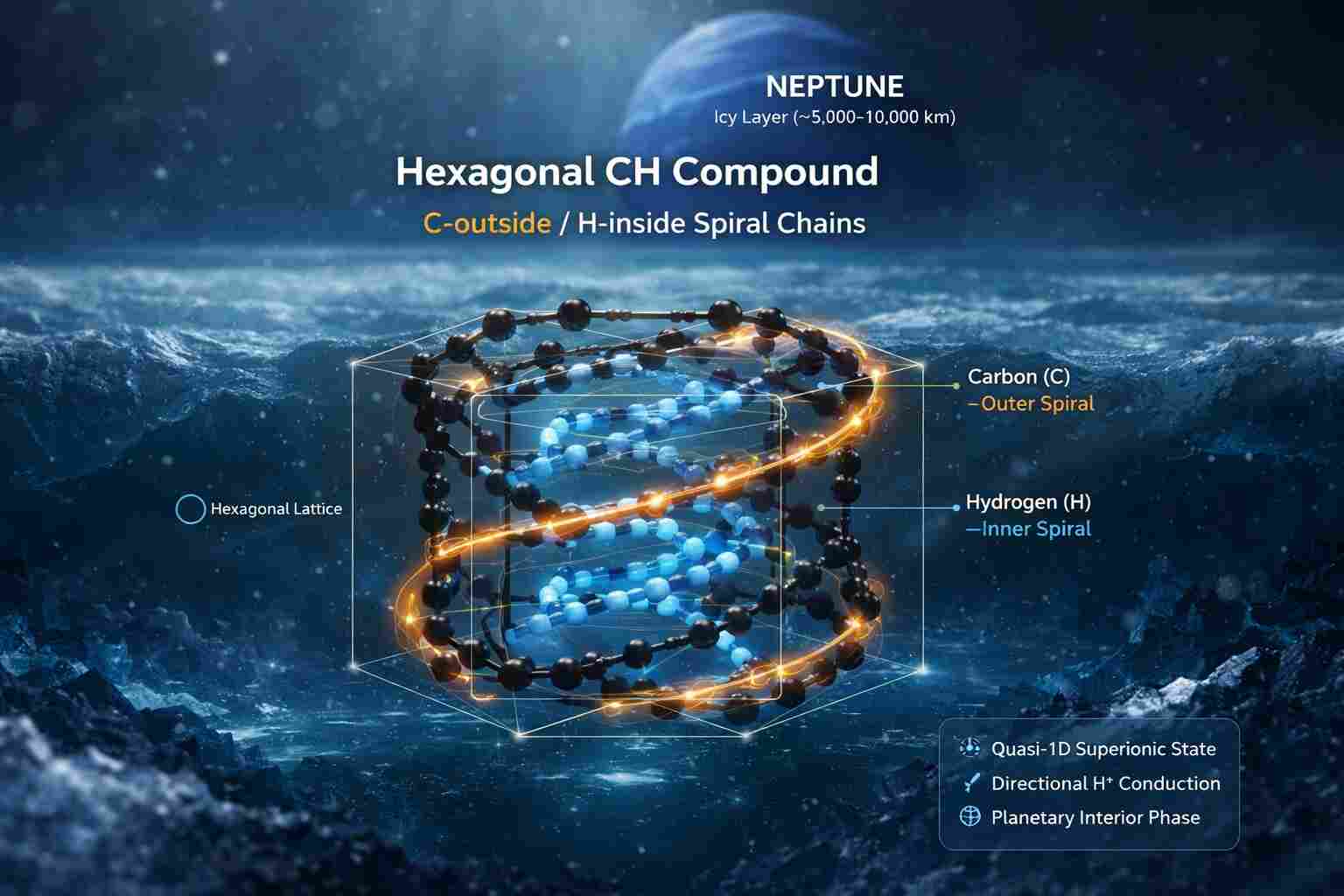

In a study led by researchers at Carnegie Science, scientists predict that deep within these ice giants exists a “superionic” form of carbon hydride, a strange hybrid phase where matter behaves simultaneously like a solid and a liquid.

Under extreme planetary conditions, with pressures of up to 3,000 gigapascals and temperatures of thousands of degrees, atoms reorganize into exotic configurations. In this case, carbon atoms form a rigid lattice while hydrogen atoms flow through it like a fluid, creating what researchers describe as a quasi-one-dimensional superionic state.
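How does a simulation tell that one set of atoms is "solid" while another "flows"? A common diagnostic is each species' mean-squared displacement (MSD). Atoms locked in a lattice only rattle in place, so their MSD levels off. Diffusing atoms keep wandering, so their MSD grows roughly in proportion to elapsed time. The sketch below illustrates that idea on synthetic toy trajectories, not on real simulation data. The trajectory generators, the threshold, and names such as `classify_sublattice` are hypothetical, and the study's own analysis may differ.

```python
import random

def mean_squared_displacement(traj, lag):
    """Average squared displacement over all atoms and all time origins.

    traj[t][i] is the (x, y, z) position of atom i at frame t.
    """
    total, count = 0.0, 0
    for t0 in range(len(traj) - lag):
        for p0, p1 in zip(traj[t0], traj[t0 + lag]):
            total += sum((b - a) ** 2 for a, b in zip(p0, p1))
            count += 1
    return total / count

def vibrating_lattice(n_atoms, n_frames, amp, rng):
    """Toy 'rigid' sublattice (hypothetical stand-in for carbon): jitter around fixed sites."""
    sites = [(float(i), 0.0, 0.0) for i in range(n_atoms)]
    return [[tuple(s + rng.gauss(0.0, amp) for s in site) for site in sites]
            for _ in range(n_frames)]

def random_walkers(n_atoms, n_frames, step, rng):
    """Toy 'fluid' sublattice (hypothetical stand-in for hydrogen): 3D random walks."""
    pos = [[float(i), 0.0, 0.0] for i in range(n_atoms)]
    frames = []
    for _ in range(n_frames):
        frames.append([tuple(p) for p in pos])
        for p in pos:
            for k in range(3):
                p[k] += rng.gauss(0.0, step)
    return frames

def classify_sublattice(traj, short_lag, long_lag, ratio_threshold=3.0):
    """Label a sublattice 'diffusive' if its MSD keeps growing with lag time.

    A vibrating solid's MSD saturates, so the ratio stays near 1; for free
    diffusion MSD is proportional to lag, so the ratio nears long_lag / short_lag.
    """
    ratio = (mean_squared_displacement(traj, long_lag)
             / mean_squared_displacement(traj, short_lag))
    return "diffusive" if ratio > ratio_threshold else "rigid"

rng = random.Random(0)
carbon = vibrating_lattice(n_atoms=20, n_frames=200, amp=0.1, rng=rng)
hydrogen = random_walkers(n_atoms=20, n_frames=200, step=0.1, rng=rng)
print("C sublattice:", classify_sublattice(carbon, short_lag=5, long_lag=50))
print("H sublattice:", classify_sublattice(hydrogen, short_lag=5, long_lag=50))
```

In a superionic phase, the same test applied to a real trajectory would be expected to flag one species as rigid and the other as diffusive at once.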

This is not something that can be captured in a lab or observed by a telescope.

It must be computed into existence.

Supercomputers as planetary probes

To uncover this hidden physics, scientists turned to high-performance computing systems capable of simulating matter at the quantum level. Using first-principles calculations combined with machine-learning-driven interatomic models, researchers recreated the extreme environments of planetary interiors, atom by atom, interaction by interaction.

These simulations are staggering in scale and complexity. They must account for quantum mechanical behavior, atomic bonding, thermal fluctuations, and pressure-induced phase transitions, all of which unfold simultaneously across millions of computational steps.
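Those "millions of computational steps" are typically a time-stepping loop. At each step, an interatomic model supplies the forces, and an integrator advances positions and velocities by a tiny time increment. The sketch below shows the widely used velocity-Verlet scheme for a single particle in one dimension. A simple harmonic spring stands in for the machine-learned interatomic model. The potential, the units, and the function names are illustrative assumptions, not the study's code.

```python
def harmonic_force(x, k=1.0):
    """Hypothetical stand-in for an interatomic model: F = -dU/dx with U = k*x**2/2."""
    return -k * x

def harmonic_energy(x, v, k=1.0, m=1.0):
    """Total (kinetic + potential) energy of the toy system."""
    return 0.5 * m * v * v + 0.5 * k * x * x

def velocity_verlet(x, v, force, dt, n_steps, m=1.0):
    """Advance one particle n_steps times with the velocity-Verlet integrator."""
    f = force(x)
    for _ in range(n_steps):
        v_half = v + 0.5 * dt * f / m   # half-step velocity update
        x = x + dt * v_half             # full-step position update
        f = force(x)                    # re-evaluate forces at the new position
        v = v_half + 0.5 * dt * f / m   # complete the velocity update
    return x, v

x0, v0 = 1.0, 0.0
e0 = harmonic_energy(x0, v0)
x1, v1 = velocity_verlet(x0, v0, harmonic_force, dt=0.01, n_steps=100_000)
drift = abs(harmonic_energy(x1, v1) - e0) / e0
print(f"relative energy drift after 100,000 steps: {drift:.2e}")
```

Velocity Verlet is popular for long runs because it keeps the total energy bounded instead of letting it drift steadily. In real planetary-interior simulations, the expensive part is the force call, which is why fast machine-learned models matter.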

In effect, supercomputers have become our deepest drilling instruments, probing worlds we cannot physically access.

Rewriting planetary science

The implications stretch far beyond academic curiosity.

For decades, scientists have known that Uranus and Neptune contain layers of so-called “hot ices,” mixtures of water, methane, and ammonia under extreme conditions. But the exact behavior of these materials has remained one of planetary science’s greatest mysteries.

Now, with the prediction of superionic carbon hydride, researchers are beginning to understand how these planets may generate their unusual magnetic fields and internal dynamics. Exotic phases like this may influence heat flow, electrical conductivity, and convection deep within these worlds.

And with more than 6,000 exoplanets already discovered, these insights apply not just to our solar system; they offer a blueprint for understanding planets across the galaxy.

The rise of computational discovery

This breakthrough underscores a profound shift in how science is done.

Where exploration once required telescopes or spacecraft, today it increasingly depends on computation. Supercomputers are not just tools for analysis; they are engines of discovery, capable of predicting entirely new states of matter before they are ever observed.

In this new paradigm, simulation becomes exploration.

Equations become experiments.

And code becomes a window into worlds billions of miles away.

Inspiration at planetary scale

There is something deeply inspiring about this moment.

No spacecraft has returned to Uranus or Neptune since Voyager 2 flew past them in 1986 and 1989, respectively.

Yet through supercomputing, we are once again exploring their depths, this time not with cameras, but with computation.

We are discovering oceans of exotic matter, dynamic interiors, and hidden physical laws, all without leaving Earth.

It is a reminder that the frontier of exploration is no longer just out there in space.

It is also inside our machines.

And with every simulation, every model, every breakthrough, we move closer to understanding not just distant planets, but the fundamental nature of matter itself.

Because in the age of supercomputing, even the deepest secrets of the universe are within reach, one calculation at a time.
