The island of Madagascar—one of the last large land masses colonized by humans—sits about 250 miles (400 kilometers) off the coast of East Africa. While it’s still regarded as a place of unique biodiversity, Madagascar long ago lost all its large-bodied vertebrates, including giant lemurs, elephant birds, turtles, and hippopotami. A human genetic study reported in the journal Current Biology on November 4 links these losses in time with the first major expansion of humans on the island, around 1,000 years ago.

“This human demographic expansion was simultaneous with a cultural and ecological transition on the island,” says Denis Pierron, French National Centre for Scientific Research (CNRS) researcher in Toulouse, France. “Around the same period, cities appeared in Madagascar and all the vertebrates of more than 10 kilograms disappeared.”

The origins of humans in Madagascar have long been an enigma, Pierron says. Madagascar is home to 25 million people who speak an Asian language despite the island’s proximity to East Africa. Other groups who speak similar languages live more than 4,000 miles away. The people who live in Madagascar are known to trace their roots to two small populations: one Bantu-speaking from Africa and another Austronesian-speaking from Asia. Beyond that, the history has remained murky.

To retrace the history and understand more about the origin of the Malagasy people, a multidisciplinary consortium launched a project in 2007 known as Madagascar Genetic and Ethnolinguistic (MAGE). Over 10 years, Malagasy and international researchers visited more than 250 villages across the country to sample the cultural and genetic human diversity.

In the new study, Pierron and his colleagues took a close look at the human genetic evidence. More specifically, they studied how various segments of human chromosomes were shared, together with local ancestry information and supercomputer-simulated genetic data. From this combined evidence, they inferred that the Malagasy ancestral Asian population was isolated for more than 1,000 years with an effective population size of just a few hundred individuals.

Their isolation ended about 1,000 years ago when a small group of Bantu-speaking African people came to Madagascar. Afterward, the population continued to expand rapidly over generations. The growing human population led to extensive changes to the Madagascar landscape and the loss of all large-bodied vertebrates that once lived there, they suggest.

The findings have important implications that may now be applied to studies of other human populations. For instance, they show that it’s possible to untangle the demographic history of ancient populations even well after two or more groups have mixed, by using genetic data and supercomputer simulations to test the likelihood of different scenarios. The findings also offer new insights into how past changes in human populations led to changes in whole ecosystems.

“Our study supports the theory that it was not directly the arrival of humans on the island that caused the disappearance of the megafauna, but rather a change in lifestyle that caused both a human population expansion and a reduction in biodiversity in Madagascar,” Pierron says.

While these efforts have led to a much better understanding of Madagascar’s history, many intriguing questions remain. For instance, Pierron asks, “If the ancestral Asian population was isolated for more than a millennium before mixing with the African population, where was this population? Already in Madagascar or in Asia? Why did the Asian population isolate itself over 2,000 years ago? Around 1,000 years ago, what triggered the observed cultural and demographic transition?”

How to resolve AdBlock issue?

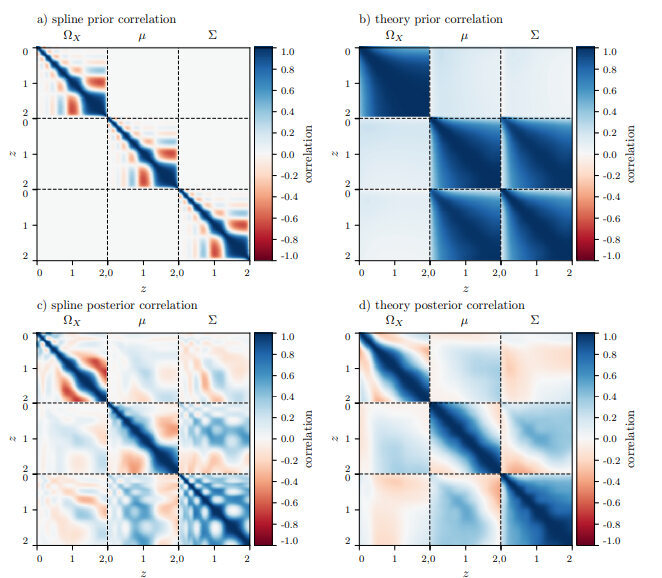

How to resolve AdBlock issue?  Scientists from around the world have reconstructed the laws of gravity, to help get a more precise picture of the Universe and its constitution.

Scientists from around the world have reconstructed the laws of gravity, to help get a more precise picture of the Universe and its constitution.

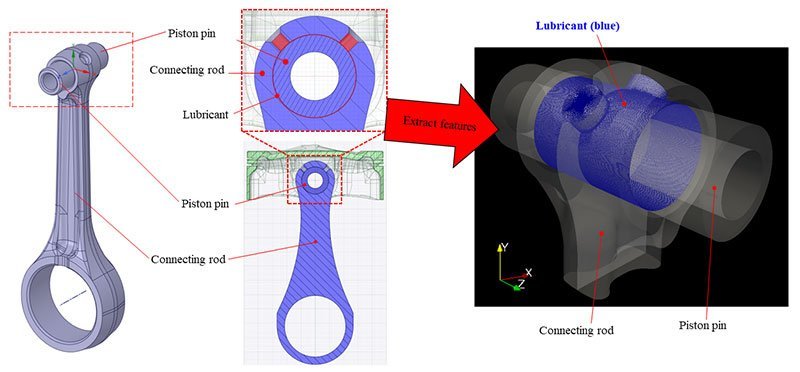

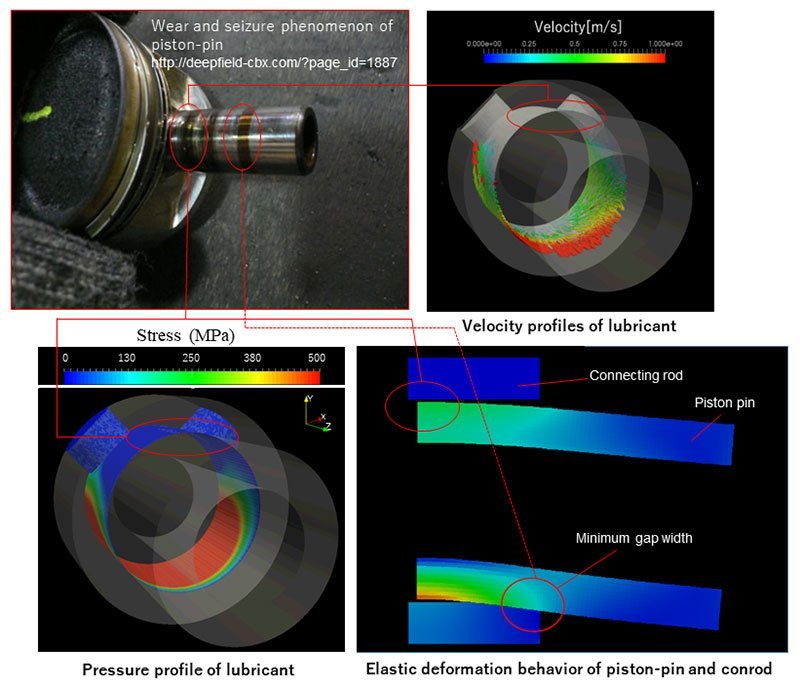

A research group has created an analysis method to predict wear and seizure locations in the sliding parts of engine piston pins. The breakthrough will help limit wear and tear on transportation and industrial machinery components and make them more fuel efficient.

A research group has created an analysis method to predict wear and seizure locations in the sliding parts of engine piston pins. The breakthrough will help limit wear and tear on transportation and industrial machinery components and make them more fuel efficient.