Millions of international viewers enjoyed watching the reality TV show “Ice Road Truckers”, in which experienced truck drivers were expected to master scary challenges, such as transporting heavy supplies across frozen lakes in the remote Arctic. According to a new study published in the prestigious journal Earth’s Future by an international team of climate and lake scientists, crossing frozen lakes with heavy trucks may soon be a thing of the past.

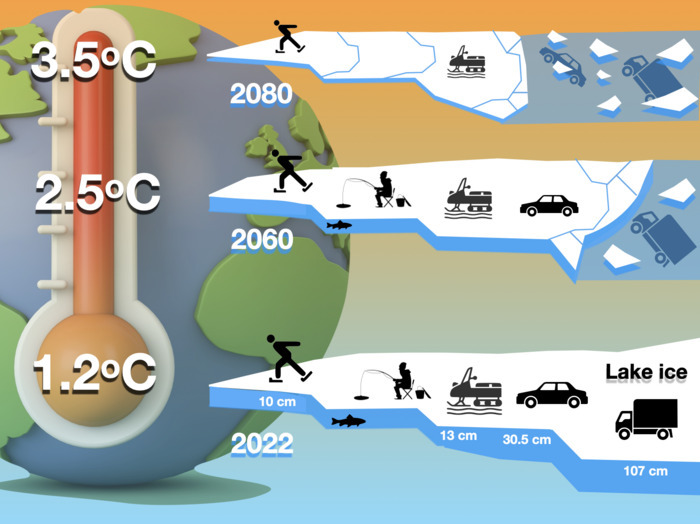

The study uses one of the most comprehensive future climate change model simulations to date (the Community Earth System Model ver. 2 Large Ensemble) to determine at which warming levels unsafe ice conditions will be reached regionally for transportation and recreational activities, including ice fishing and ice skating.

The conclusion of the study is straightforward, namely that global warming will make lake ice much less safe (Figure). This is likely to affect indigenous communities in the Arctic as well as regional economies, where people rely on ice roads as a means for fast and comparatively cheap transportation and supply during winter. Thinning future ice conditions also threaten unique lake ecosystems that have adapted to recurring frozen lake conditions over tens of thousands of years.

“Our results demonstrate that the duration of safe ice over the next 80 years will shorten by 2-3 weeks depending on the future warming level. In regions where lakes are used as ice roads to transport heavy goods and supplies, the number of days with safe ice conditions will decline by more than 90%, even for moderate warming of 1.5°C above early 20th Century conditions”, says Dr. Lei Huang, corresponding author of the study and former postdoctoral researcher at the IBS Center for Climate Physics (ICCP), in Busan, South Korea.

“According to our computer model simulations, many densely populated regions in the mid-latitudes are projected to experience a large deterioration in safe ice conditions for recreational activities. Already a 1.5°C warming above early 20th Century conditions can lead to more than 60% loss in the duration of safe lake ice. This will negatively impact local communities that rely on the ice recreation industry,” says Dr. Iestyn Woolway from Bangor University, in the UK, the first author of the study.

Dr. Sapna Sharma from York University in Canada, one of the lead authors, added, “Given that our planet has already warmed by 1.2°C since the beginning of industrialization, it is time to implement proper regional adaptation strategies in affected communities to mitigate economic losses and to avoid loss of lives.”

How to resolve AdBlock issue?

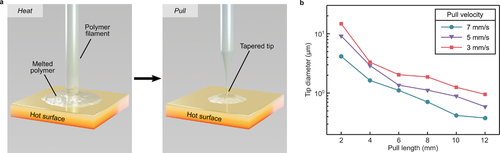

How to resolve AdBlock issue?  In a newly-published study, a team of researchers in Oxford University’s Department of Materials led by

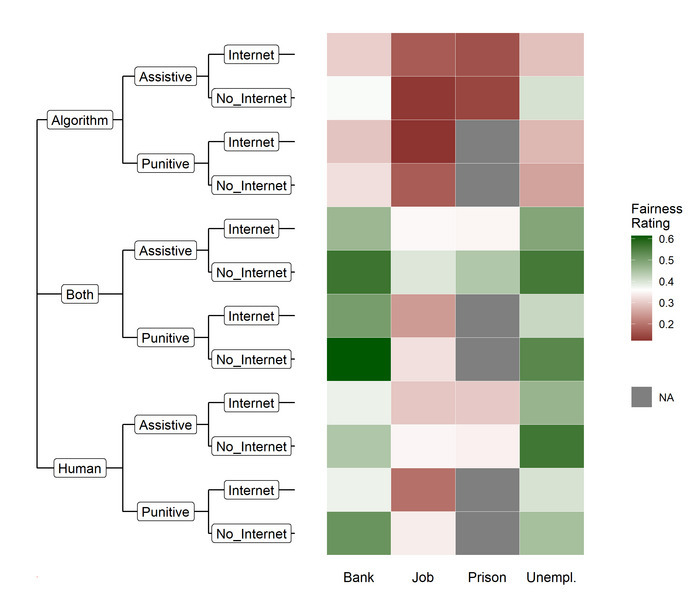

In a newly-published study, a team of researchers in Oxford University’s Department of Materials led by  Today, machine learning helps determine the loan we qualify for, the job we get, and even who goes to jail. But when it comes to these potentially life-altering decisions, can computers make a fair call? In a study published today in the journal Patterns, researchers from Germany showed that with human supervision, people think a computer’s decision can be as fair as a decision primarily made by humans.

Today, machine learning helps determine the loan we qualify for, the job we get, and even who goes to jail. But when it comes to these potentially life-altering decisions, can computers make a fair call? In a study published today in the journal Patterns, researchers from Germany showed that with human supervision, people think a computer’s decision can be as fair as a decision primarily made by humans. Extreme nonlinear wave group dynamics in directional wave states

Extreme nonlinear wave group dynamics in directional wave states The Large Hadron Collider (LHC) is the most powerful particle accelerator ever built which sits in a tunnel 100 meters underground at CERN, the European Organisation for Nuclear Research, near Geneva in Switzerland. It is the site of long-running experiments which enable physicists worldwide to learn more about the nature of the Universe.

The Large Hadron Collider (LHC) is the most powerful particle accelerator ever built which sits in a tunnel 100 meters underground at CERN, the European Organisation for Nuclear Research, near Geneva in Switzerland. It is the site of long-running experiments which enable physicists worldwide to learn more about the nature of the Universe.