A collaboration between scientists at Cambridge and UCL has led to the discovery of a new form of ice that more closely resembles liquid water than any other and may hold the key to understanding this most famous of liquids.

The new form of ice is amorphous. Unlike ordinary crystalline ice, in which the molecules arrange themselves in a regular pattern, amorphous ice has molecules in a disordered arrangement that resembles a liquid.

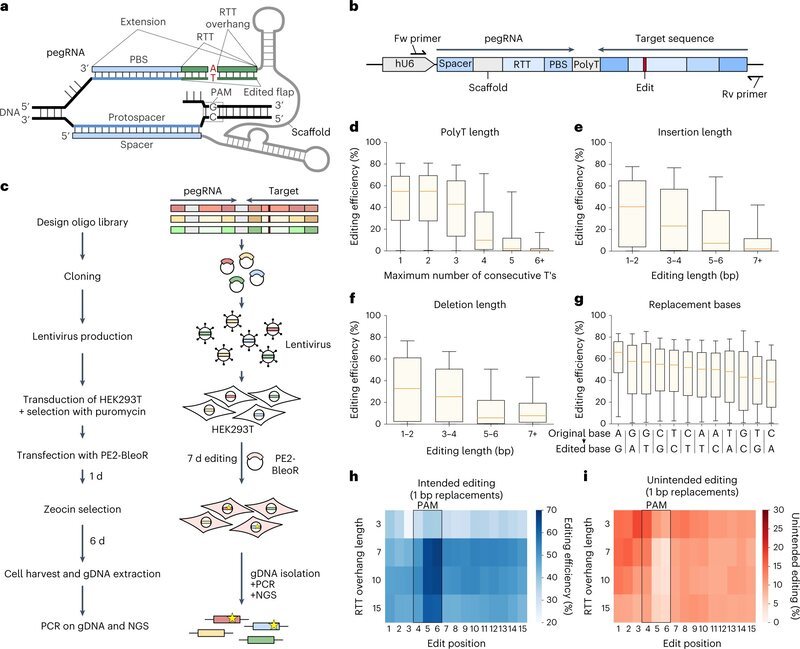

The team created the new form of amorphous ice in an experiment and produced an atomic-scale model of it in a supercomputer simulation. The experiments used a technique called ball-milling, which grinds crystalline ice into small particles using metal balls in a steel jar. Ball-milling is regularly used to make amorphous materials, but it had never been applied to ice.

The team found that ball-milling created an amorphous form of ice, which, unlike all other known ices, had a density similar to that of liquid water and whose state resembled water in solid form. They named the new ice medium-density amorphous ice (MDA).

To understand the process at the molecular scale, the team turned to computer simulation. By mimicking the ball-milling procedure via repeated random shearing of crystalline ice, the team successfully created a computational model of MDA.

“Our discovery of MDA raises many questions on the very nature of liquid water and so understanding MDA’s precise atomic structure is very important,” said co-author Dr. Michael Davies, who carried out the computational modeling. “We found remarkable similarities between MDA and liquid water.”

A happy medium

Amorphous ices have been suggested to be models for liquid water. Until now, there have been two main types of amorphous ice: high-density and low-density amorphous ice.

As the names suggest, there is a large density gap between them. This density gap, combined with the fact that the density of liquid water lies in the middle, has been a cornerstone of our understanding of liquid water. It has led in part to the suggestion that water consists of two liquids: one high- and one low-density liquid.

Senior author Professor Christoph Salzmann said: “The accepted wisdom has been that no ice exists within that density gap. Our study shows that the density of MDA is precisely within this density gap and this finding may have far-reaching consequences for our understanding of liquid water and its many anomalies.”

A high-energy geophysical material

The discovery of MDA gives rise to the question: where might it exist in nature? The study found that shear forces were vital to creating MDA. The team suggests that ordinary ice could undergo similar shear forces on icy moons, due to the tidal forces exerted by gas giants such as Jupiter.

Moreover, MDA displays one remarkable property that is not found in other forms of ice. Using calorimetry, the team discovered that when MDA recrystallizes to ordinary ice, it releases an extraordinary amount of heat. The heat released from the recrystallization of MDA could play a role in activating tectonic motions. More broadly, this discovery shows water can be a high-energy geophysical material.

Professor Angelos Michaelides, the lead author from Cambridge's Yusuf Hamied Department of Chemistry, said: “Amorphous ice in general is said to be the most abundant form of water in the universe. The race is now on to understand how much of it is MDA and how geophysically active MDA is.”
