The rate at which the planet warms in response to the ongoing buildup of heat-trapping carbon dioxide gas could increase in the future, according to new supercomputer simulations of a comparable warm period more than 50 million years ago.

Researchers at the University of Michigan and the University of Arizona used a state-of-the-art climate model to successfully simulate--for the first time--the extreme warming of the Early Eocene Period, which is considered an analog for Earth's future climate.

They found that the rate of warming increased dramatically as carbon dioxide levels rose, a finding with far-reaching implications for Earth's future climate, the researchers report in a paper scheduled for publication Sept. 18 in the journal Science Advances.

Another way of stating this result is that the climate of the Early Eocene became increasingly sensitive to additional carbon dioxide as the planet warmed.

"We were surprised that the climate sensitivity increased as much as it did with increasing carbon dioxide levels," said first author Jiang Zhu, a postdoctoral researcher in the U-M Department of Earth and Environmental Sciences.

"It is a scary finding because it indicates that the temperature response to an increase in carbon dioxide in the future might be larger than the response to the same increase in CO2 now. This is not good news for us."

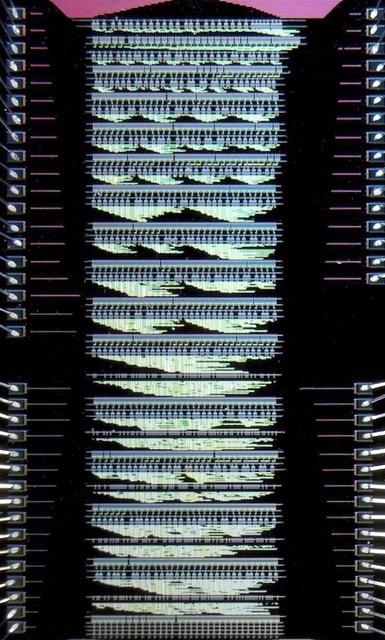

The researchers determined that the large increase in climate sensitivity they observed--which had not been seen in previous attempts to simulate the Early Eocene using similar amounts of carbon dioxide--is likely due to an improved representation of cloud processes in the climate model they used, the Community Earth System Model version 1.2, or CESM1.2.

Global warming is expected to change the distribution and types of clouds in the Earth's atmosphere, and clouds can have both warming and cooling effects on the climate. In their simulations of the Early Eocene, Zhu and his colleagues found a reduction in cloud coverage and opacity that amplified CO2-induced warming.

The same cloud processes responsible for increased climate sensitivity in the Eocene simulations are active today, according to the researchers.

"Our findings highlight the role of small-scale cloud processes in determining large-scale climate changes and suggest a potential increase in climate sensitivity with future warming," said U-M paleoclimate researcher Christopher Poulsen, a co-author of the Science Advances paper.

"The sensitivity we're inferring for the Eocene is indeed very high, though it's unlikely that climate sensitivity will reach Eocene levels in our lifetimes," said Jessica Tierney of the University of Arizona, the paper's third author.

The Early Eocene (roughly 48 million to 56 million years ago) was the warmest period of the past 66 million years. It began with the Paleocene-Eocene Thermal Maximum (PETM), the most severe of several short, intensely warm events.

The Early Eocene was a time of elevated atmospheric carbon dioxide concentrations and surface temperatures at least 14 degrees Celsius (25 degrees Fahrenheit) warmer, on average, than today. Also, the difference between temperatures at the equator and the poles was much smaller.

Geological evidence suggests that atmospheric carbon dioxide levels reached 1,000 parts per million in the Early Eocene, more than twice the present-day level of 412 ppm. If nothing is done to limit carbon emissions from the burning of fossil fuels, CO2 levels could once again reach 1,000 ppm by the year 2100, according to climate scientists.

Until now, climate models have been unable to simulate the extreme surface warmth of the Early Eocene--including the sudden and dramatic temperature spikes of the PETM--by relying solely on atmospheric CO2 levels. Unsubstantiated changes to the models were required to make the numbers work, said Poulsen, a professor in the U-M Department of Earth and Environmental Sciences and associate dean for natural sciences.

"For decades, the models have underestimated these temperatures, and the community has long assumed that the problem was with the geological data, or that there was a warming mechanism that hadn't been recognized," he said.

But the CESM1.2 model was able to simulate both the warm conditions and the low equator-to-pole temperature gradient seen in the geological records.

"For the first time, a climate model matches the geological evidence out of the box--that is, without deliberate tweaks made to the model. It's a breakthrough for our understanding of past warm climates," Tierney said.

CESM1.2 was one of the climate models used in the authoritative Fifth Assessment Report from the Intergovernmental Panel on Climate Change, finalized in 2014. The model's ability to satisfactorily simulate Early Eocene warming provides strong support for CESM1.2's prediction of future warming, which is expressed through a key climate parameter called equilibrium climate sensitivity.

The term equilibrium climate sensitivity (ECS) refers to the long-term change in global temperature that would result from a sustained doubling of carbon dioxide levels above the pre-industrial baseline of 285 ppm, once the climate has fully adjusted over hundreds to thousands of years. The consensus among climate scientists is that the ECS is likely to be between 1.5 C and 4.5 C (2.7 F to 8.1 F).

The equilibrium climate sensitivity in CESM1.2 is near the upper end of that consensus range, at 4.2 C (7.6 F). The U-M-led study's Early Eocene simulations showed equilibrium climate sensitivity increasing with warming, suggesting an Eocene sensitivity of more than 6.6 C (11.9 F), much greater than the present-day value.
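For readers who want to check the figures, here is a minimal Python sketch. It converts the quoted temperature changes from Celsius to Fahrenheit and counts how many CO2 doublings a given concentration represents above the 285 ppm baseline used in the ECS definition. The function names are hypothetical helpers written for this illustration and do not come from the study or from CESM1.2. Because the values are temperature changes rather than absolute temperatures, only the 9/5 factor applies and the 32-degree offset is left out.

```python
import math

# Temperature *changes* convert with the 9/5 factor only; the 32-degree
# offset applies to absolute temperatures, not to differences.
F_PER_C = 9 / 5

# Pre-industrial baseline quoted in the article (ppm).
BASELINE_PPM = 285.0


def delta_c_to_f(delta_c: float) -> float:
    """Convert a temperature change from degrees Celsius to degrees Fahrenheit."""
    return delta_c * F_PER_C


def co2_doublings(ppm: float, baseline_ppm: float = BASELINE_PPM) -> float:
    """Number of CO2 doublings above a baseline (log base 2 of the ratio).

    Hypothetical helper for illustration; one doubling of 285 ppm is 570 ppm.
    """
    return math.log2(ppm / baseline_ppm)


if __name__ == "__main__":
    # Fahrenheit equivalents of the temperature changes quoted in the article.
    for c in (1.5, 4.2, 4.5, 6.6, 14):
        print(f"{c} C -> {delta_c_to_f(c):.1f} F")
    # Present-day, doubled, and Early Eocene-like concentrations, in doublings.
    for ppm in (412, 570, 1000):
        print(f"{ppm} ppm -> {co2_doublings(ppm):.2f} doublings")
```

For example, 1,000 ppm works out to a little under two doublings above the 285 ppm baseline.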
