In the search for Earth-like planets, University of Rochester scientist Miki Nakajima turns to supercomputer simulations of moon formations.

Earth’s moon is vitally important in making Earth the planet we know today: the moon controls the length of the day and ocean tides, which affect the biological cycles of lifeforms on our planet. The moon also contributes to Earth’s climate by stabilizing Earth’s spin axis, offering an ideal environment for life to develop and evolve.

Because the moon is so important to life on Earth, scientists conjecture that a moon may be a beneficial feature for harboring life on other planets. Most planets have moons, but Earth’s moon is distinct in that it is large compared to the size of Earth; the moon’s radius is larger than a quarter of Earth’s radius, a much larger ratio than most moons have to their planets.

Miki Nakajima, an assistant professor of earth and environmental sciences at the University of Rochester, finds that distinction significant. And in a new study that she led, she and her colleagues at the Tokyo Institute of Technology and the University of Arizona examine moon formations and conclude that only certain types of planets can form moons that are large relative to their host planets.

“By understanding moon formations, we have a better constraint on what to look for when searching for Earth-like planets,” Nakajima says. “We expect that exomoons [moons orbiting planets outside our solar system] should be everywhere, but so far we haven’t confirmed any. Our constraints will be helpful for future observations.”

The origin of Earth’s moon

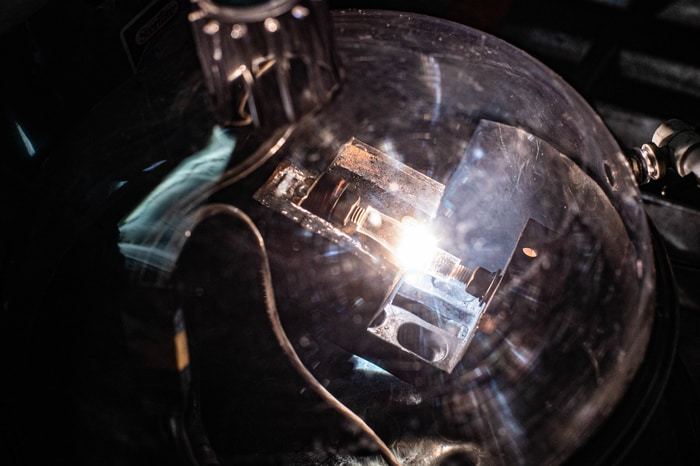

Many scientists have historically believed Earth’s large moon was generated by a collision between proto-Earth—Earth at its early stages of development—and a large, Mars-sized impactor, approximately 4.5 billion years ago. The collision resulted in the formation of a partially vaporized disk around Earth, which eventually formed into the moon.

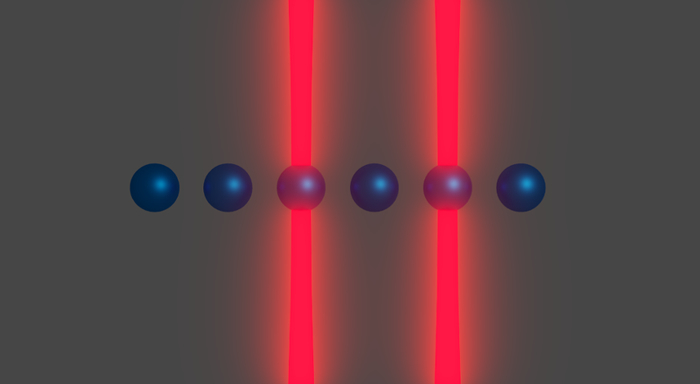

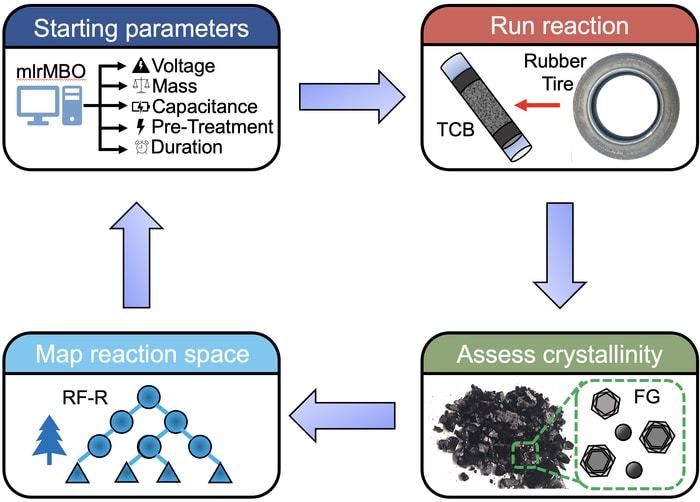

To find out whether other planets can form similarly large moons, Nakajima and her colleagues conducted impact simulations on the computer, with several hypothetical Earth-like rocky planets and icy planets of varying masses. They hoped to identify whether the simulated impacts would result in partially vaporized disks, like the disk that formed Earth’s moon.

The researchers found that rocky planets larger than six times the mass of Earth (6 M⊕) and icy planets larger than one Earth mass (1 M⊕) produce fully, rather than partially, vaporized disks, and these fully vaporized disks are not capable of forming fractionally large moons.

“We found that if the planet is too massive, these impacts produce completely vapor disks because impacts between massive planets are generally more energetic than those between small planets,” Nakajima says.

After an impact that results in a vaporized disk, the disk cools over time and liquid moonlets (a moon’s building blocks) emerge. In a fully vaporized disk, the growing moonlets experience strong gas drag from the vapor and fall onto the planet very quickly. In contrast, if the disk is only partially vaporized, moonlets do not feel such strong gas drag.

“As a result, we conclude that a complete vapor disk is not capable of forming fractionally large moons,” Nakajima says. “Planetary masses need to be smaller than those thresholds we identified to produce such moons.”

The search for Earth-like planets

The constraints outlined by Nakajima and her colleagues are important for astronomers investigating our universe; researchers have detected thousands of exoplanets and possible exomoons, but have yet to definitively spot a moon orbiting a planet outside our solar system.

This research may give them a better idea of where to look.

As Nakajima says: “The exoplanet search has typically been focused on planets larger than six earth masses. We are proposing that instead, we should look at smaller planets because they are probably better candidates to host fractionally large moons.”
