The National Institutes of Health has awarded Michigan State University researchers $2.7 million to continue developing artificial intelligence algorithms that predict key features of viruses as they evolve.

The team is led by Guowei Wei, an expert in AI who has published nearly 30 papers on COVID-19, and Yong-Hui Zheng, whose extensive background in virology is helping verify and improve AI predictions. The team also includes Jiahui Chen, a visiting assistant professor at MSU who played an essential role in developing the AI models.

The Wei lab already has shown those models can make accurate predictions about new variants of the novel coronavirus and, with this grant, the researchers are working to bolster their algorithms.

“What we’re doing is making our predictions more accurate and more timely,” said Wei, an MSU Foundation Professor in the College of Natural Science’s Department of Mathematics and Department of Biochemistry and Molecular Biology. “And now our work isn’t just for COVID, but also for many other viral infections.”

The work could one day help drug developers create universal vaccines and therapies that are more effective and “evolution-proof” against a range of viral diseases, including the flu, HIV, and COVID-19.

“HIV, Ebola, influenza, the coronavirus — they’re all different viruses, but they share common features,” said Zheng, a professor in the College of Osteopathic Medicine in the Department of Microbiology and Molecular Genetics. “If we learn how to attack one, that can inform how we attack the others.”

“The goal is to have us much better prepared for any future disease or pandemic,” Wei said.

How AI, data, and experiments can inform public health

More immediately, the Wei team believes its AI can help inform public health officials if they need to update their recommended protective measures — such as by issuing masking and social distancing guidance — against emerging coronavirus variants.

Although vaccines and treatments are now available that didn’t exist when the U.S. first declared a public health emergency in response to the novel coronavirus, the virus is still out there evolving. Our immune responses are naturally influencing the trajectory of that evolution.

In terms of “survival of the fittest,” a virus that can evade vaccines or natural immunity will be fitter than its predecessor, Wei said. That means it will be better equipped to survive, multiply, and infect others. The take-home message isn’t that people shouldn’t protect themselves, Wei said, but that a virus that still infects about 100,000 Americans daily isn’t going to get tired or bored, or just give up.

“Viruses don’t have a personality. They just survive,” Wei said. “We want to make sure we are prepared.”

This new grant, funded by the National Institute of Allergy and Infectious Diseases, is an investment to improve our readiness through cutting-edge technology. But it also leverages the expertise and experience of Wei and Zheng.

Zheng has led NIH-funded grants for two decades, although this will be his first with an explicit focus on the coronavirus.

“I’m very proud that this is the first one,” he said. “But we don’t want it to be the last. This new grant will expand my lab’s capacity to accommodate more needs campuswide and we want to use that to stimulate more collaboration.”

Zheng brings a unique virology skillset to MSU. He first was recruited in 2005 as an HIV researcher and, over time, his lab has grown to study the molecular biology of influenza and Ebola. When the coronavirus pandemic struck, he knew his team could provide valuable experimental infrastructure to help better study the new virus.

For example, his team developed less dangerous versions of the virus along with lab-grown cells for these “pseudo-viruses” to infect while preserving the biochemistry of real, clinical infections. The researchers also created very sensitive assays, or tests, that would reveal which viruses infected which cells. All of this provided researchers with safer, faster, and easier ways to study a complex virus while generating valuable biological data.

Similarly, in early 2020, Wei’s team started putting its unique skills to work combatting the coronavirus.

“Before the pandemic, we had had success in worldwide competitions, being recognized as one of the top labs in combining AI and mathematics for drug discovery,” said Wei, who also holds an appointment in the Department of Electrical and Computer Engineering in the College of Engineering.

Wei’s research had focused on using AI to help design new pharmaceuticals in partnership with Pfizer and Bristol-Myers Squibb. Within days of China’s Wuhan lockdown in January 2020, Wei’s team started sharing its AI resources to help find drugs to fight the coronavirus and reveal new potential drug targets. But the researchers also recognized their algorithms could do more.

With a global community working to fight the coronavirus, there was a wealth of new genomic data describing the virus being shared regularly. Wei and his team saw an opportunity to combine that data with their AI framework to understand how the virus was mutating as time went on.

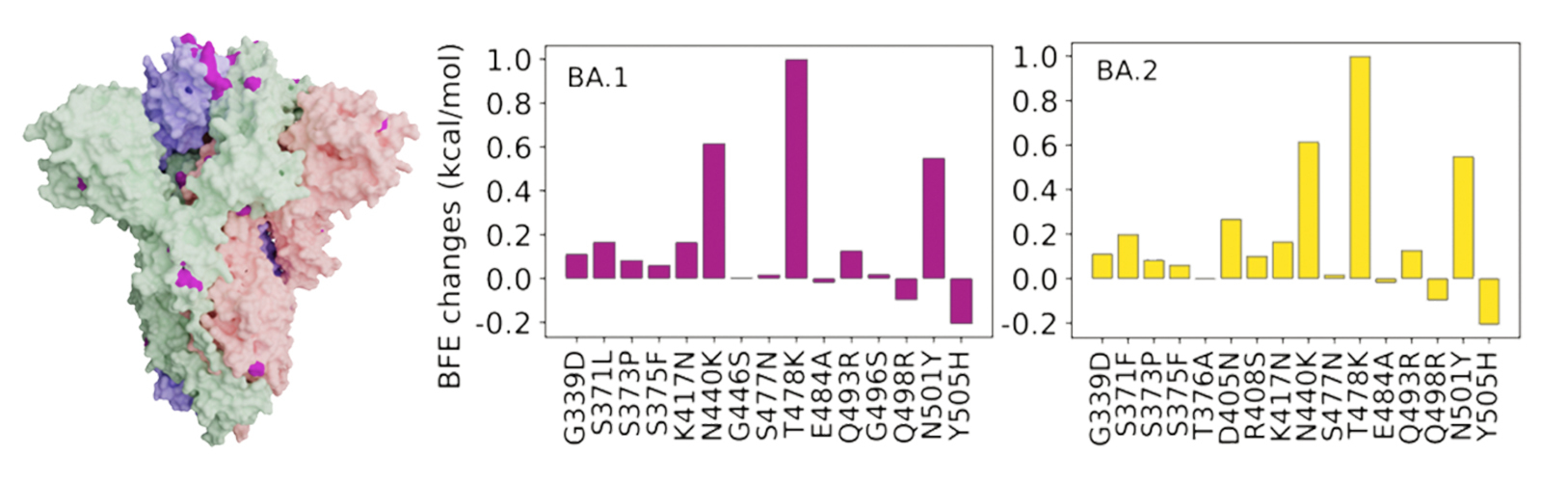

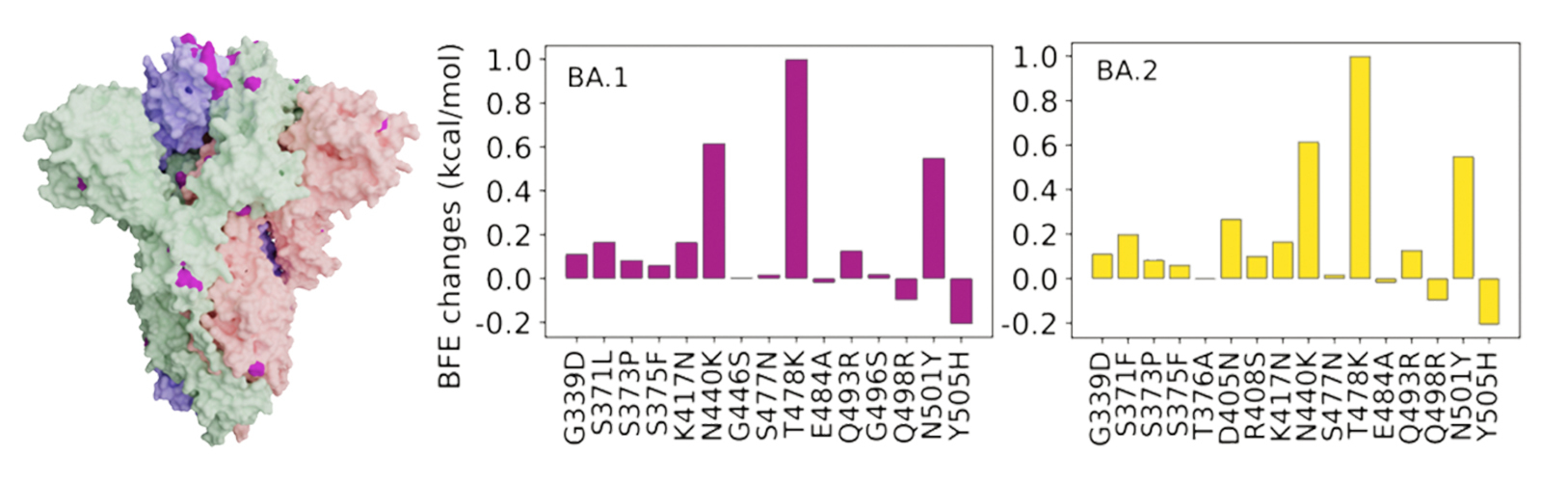

For example, they were among the first to see how “survival of the fittest” was playing out in the virus and steering its evolution, Wei said. His team then used that knowledge to look ahead and identify two potentially vital sites on the virus’s spike protein, the protein the virus uses to latch onto cells and infect them. Mutations in those two spike protein sites would later turn out to play crucial roles in the virus’s most prevalent variants, Wei said.

“We took what we were doing with deep learning and mathematics, then combined that with the viral genomic data to understand the evolution of the virus, look at its trajectory and ask what’s going to happen,” Wei said. “That gives us a way to predict what can happen in the future.”

Successfully predicting virus behavior

Wei and Zheng have been collaborating for about a year, starting before the grant was awarded. Their teamwork has informed precise algorithms with real-world data and provided real experimental results to compare with AI predictions.

“We need to have that interdisciplinary collaboration for this to work,” Zheng said. “Everything the computer models predicted, we had to confirm with experiments in a living system.”

Although Wei’s team had validated its AI with laboratory experiments, the researchers knew they would still need to prove their algorithms could work on a brand-new variant for which very little data existed. Then, in the fall of 2021, the first omicron variant appeared.

“Back in late November, people didn’t know what was going to happen,” Wei said.

Researchers and public health officials responded immediately, but the process of experimenting and gathering data takes weeks. Meanwhile, Wei’s team put its AI to the test.

Their projections showed this first iteration of omicron would be more infectious, better at eluding the protection of vaccines, and less responsive to antibody treatments than earlier variants.

“Within days, we had our predictions,” Wei said. “A month and a half later, everything we predicted proved to be true by experimental labs around the world. Using AI, we can give people a month or two to prepare.”

Then, in early 2022, a new subvariant of omicron called BA.2 started spreading. A similar scenario played out. Wei’s team predicted it would be more infective and even more elusive, which would allow it to become the next dominant variant.

“We made our predictions on February 11, and on March 26, the World Health Organization announced it was the dominant form of the virus,” Wei said.

Now that scientists and officials better understand omicron, the newer versions aren’t garnering the same level of attention as their predecessors. But new variants and subvariants are still emerging. With support from the National Institutes of Health, the MSU team is working to ensure we stay prepared for what’s next, whether that’s a new variant, something more familiar like the flu, or something entirely different.
