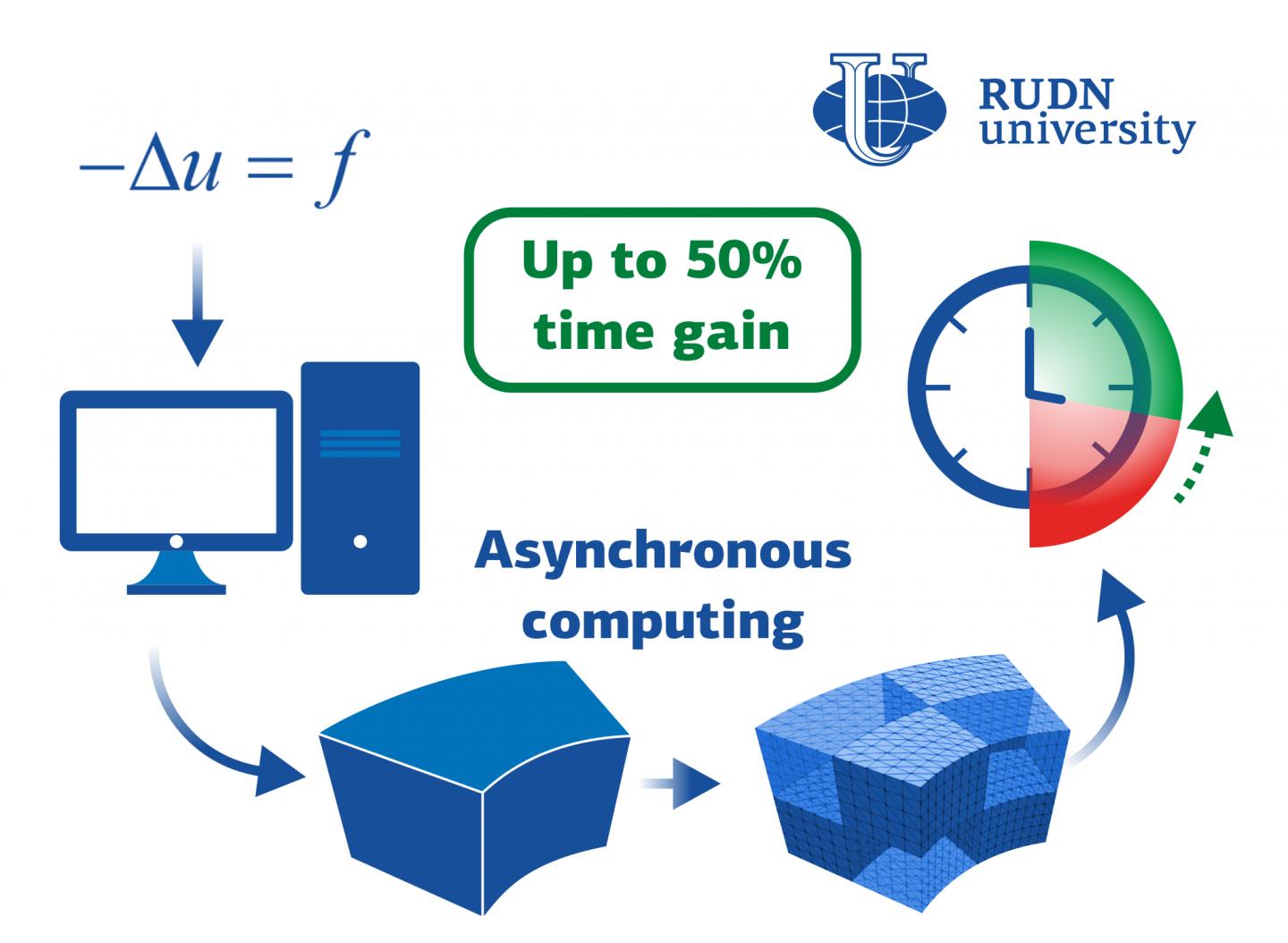

A mathematician from RUDN University in Moscow and his colleagues from France and Hungary have developed an algorithm for parallel supercomputing that can be applied to problems in fields such as electrodynamics and hydrodynamics. It cuts computation time by up to 50%. The results were published in the Journal of Computational and Applied Mathematics.

Parallel supercomputing methods are often used to solve practical problems in physics, engineering, biology, and other fields. Several processors joined in a network work on a single problem simultaneously, each handling its own small part. How the work is distributed among the processors, and how they "communicate" with each other, depends on the specifics of the problem. One possible method is domain decomposition: the domain under study is divided into separate parts, called subdomains, according to the number of processors. When that number is very high, especially in heterogeneous high-performance computing (HPC) environments, asynchronous processes become a valuable ingredient. Usually, Schwarz methods are used, in which the subdomains overlap each other. This gives accurate results but is poorly suited to cases where the overlap is not straightforward to construct. The RUDN University mathematician and his colleagues from France and Hungary proposed a new algorithm that makes asynchronous decomposition easier in many structural cases: the subdomains do not overlap, and the result remains accurate while requiring less computation time.

"Until now, almost all investigations of asynchronous iterations within domain decomposition frameworks targeted methods of the parallel Schwarz type. A first, and sole, attempt to deal with primal nonoverlapping decomposition resulted in simultaneously iterating on the subdomains and on the interface between them. That means that the computation scheme is defined on the whole global domain," said Guillaume Gbikpi-Benissan of the Engineering Academy of RUDN University.

The mathematicians proposed an algorithm based on the Gauss-Seidel method. The essence of the innovation is that the computation is not run simultaneously on the entire domain but alternates between the subdomains and the boundaries between them. As a result, the values obtained in each iteration within a subdomain can be used immediately for the calculations on the boundary at no additional cost.
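The alternation between subdomains and their boundaries can be illustrated with a minimal sketch. It is not the published algorithm: it runs sequentially and synchronously, uses a one-dimensional Poisson problem, and the function name `alternating_solve`, the equally spaced partition, and all parameters are assumptions chosen for illustration. It only shows the Gauss-Seidel-style idea of reusing fresh subdomain values in the boundary update.

```python
import numpy as np

def alternating_solve(n=15, n_sub=4, tol=1e-10, max_iter=10_000):
    """Solve -u'' = 1 on (0, 1) with u(0) = u(1) = 0 by alternating between
    nonoverlapping subdomain interiors and the interface nodes between them.
    Illustrative sketch only (hypothetical names, synchronous, 1D)."""
    h = 1.0 / (n + 1)
    # Standard second-order finite-difference matrix for -u''.
    A = (2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)) / h**2
    f = np.ones(n)

    # Assumed partition: equally spaced single-node interfaces, no overlap.
    step = (n + 1) // n_sub
    gamma = np.arange(step - 1, n, step)[: n_sub - 1]
    interior = np.setdiff1d(np.arange(n), gamma)
    # Group the interior indices into contiguous blocks, one per subdomain.
    blocks = np.split(interior, np.where(np.diff(interior) > 1)[0] + 1)

    u = np.zeros(n)
    for it in range(1, max_iter + 1):
        # Step 1: solve inside each subdomain with interface values frozen.
        # The blocks are decoupled, so these solves could run in parallel.
        for b in blocks:
            rhs = f[b] - A[np.ix_(b, gamma)] @ u[gamma]
            u[b] = np.linalg.solve(A[np.ix_(b, b)], rhs)
        # Step 2: update the interface using the values just computed.
        rhs = f[gamma] - A[np.ix_(gamma, interior)] @ u[interior]
        u[gamma] = np.linalg.solve(A[np.ix_(gamma, gamma)], rhs)
        # Stop once the global residual is small relative to the right-hand side.
        if np.linalg.norm(f - A @ u) <= tol * np.linalg.norm(f):
            return u, it
    return u, max_iter
```

Calling `alternating_solve()` returns the discrete solution and the number of alternating sweeps. For this test problem the result can be compared with the exact solution u(x) = x(1 - x)/2, which the finite-difference scheme reproduces at the grid points.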

The mathematicians tested the new algorithm on the Poisson equation and the linear elasticity problem. The first is used, for example, to describe the electrostatic field; the second describes how elastic solid bodies deform under load. The new method was faster than the original one for both problems. The gain did reach about 50%: with 720 subdomains, solving the Poisson equation took 84 seconds, whereas the original algorithm took 170 seconds. Moreover, the number of synchronous alternating iterations decreased as the number of subdomains increased.
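As a quick check of the quoted timings, the short snippet below converts the two run times into a fraction of time saved and a speedup factor. The helper name `time_saving` is hypothetical; only the 170-second and 84-second figures come from the text.

```python
def time_saving(baseline_s, new_s):
    """Return (fraction of time saved, speedup factor) for two run times."""
    return 1.0 - new_s / baseline_s, baseline_s / new_s

# Timings quoted for the Poisson equation with 720 subdomains.
saving, speedup = time_saving(170.0, 84.0)
print(f"time saved: {saving:.1%}, speedup: {speedup:.2f}x")
# prints "time saved: 50.6%, speedup: 2.02x"
```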

"It is quite an interesting behavior, which can be explained by the fact that the ratio of alternation increases as the subdomain sizes are reduced and more of the interface appears. This work therefore opens up further possibilities and promising new investigations of the asynchronous computing paradigm," said Guillaume Gbikpi-Benissan of the Engineering Academy of RUDN University.
