A multi-institutional team of astrophysicists headquartered at Boston University, led by BU astrophysicist Merav Opher, has made a breakthrough discovery in our understanding of the cosmic forces that shape the protective bubble surrounding our solar system—a bubble that shelters life on Earth and is known by space researchers as the heliosphere.

Astrophysicists believe the heliosphere protects the planets within our solar system from powerful radiation emanating from supernovas, the final explosions of dying stars throughout the universe. They believe the heliosphere extends far beyond our solar system, but despite the massive buffer against cosmic radiation that the heliosphere provides Earth’s life-forms, no one knows the shape of the heliosphere—or, for that matter, the size of it.

“How is this relevant for society? The bubble that surrounds us, produced by the sun, offers protection from galactic cosmic rays, and the shape of it can affect how those rays get into the heliosphere,” says James Drake, an astrophysicist at the University of Maryland who collaborates with Opher. “There are lots of theories but, of course, the way that galactic cosmic rays can get in can be impacted by the structure of the heliosphere—does it have wrinkles and folds and that sort of thing?”

Opher’s team has constructed some of the most compelling supercomputer simulations of the heliosphere, based on models built on observable data and theoretical astrophysics. At BU, in the Center for Space Physics, Opher, a College of Arts & Sciences professor of astronomy, leads a NASA DRIVE (Diversity, Realize, Integrate, Venture, Educate) Science Center that’s supported by $1.3 million in NASA funding. That team, made up of experts Opher recruited from 11 other universities and research institutes, develops predictive models of the heliosphere in an effort the team calls SHIELD (Solar-wind with Hydrogen Ion Exchange and Large-scale Dynamics).

Since BU’s NASA DRIVE Science Center first received funding in 2019, Opher’s SHIELD team has hunted for answers to several puzzling questions: What is the overall structure of the heliosphere? How do its ionized particles evolve and affect heliospheric processes? How does the heliosphere interact with and influence the interstellar medium, the matter and radiation that exist between stars? And how do cosmic rays get filtered by, or transported through, the heliosphere?

“SHIELD combines theory, modeling, and observations to build comprehensive models,” Opher says. “All these different components work together to help understand the puzzles of the heliosphere.”

And now a paper published by Opher and collaborators in The Astrophysical Journal reveals that neutral hydrogen particles streaming from outside our solar system most likely play a crucial role in the way our heliosphere takes shape.

In their latest study, Opher’s team wanted to understand why heliospheric jets—blooming columns of energy and matter that are similar to other types of cosmic jets found throughout the universe—become unstable. “Why do stars and black holes—and our sun—eject unstable jets?” Opher says. “We see these jets projecting as irregular columns, and [astrophysicists] have been wondering for years why these shapes present instabilities.”

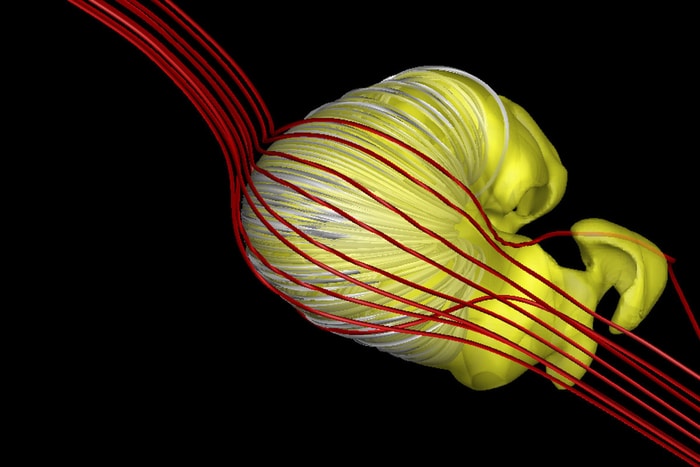

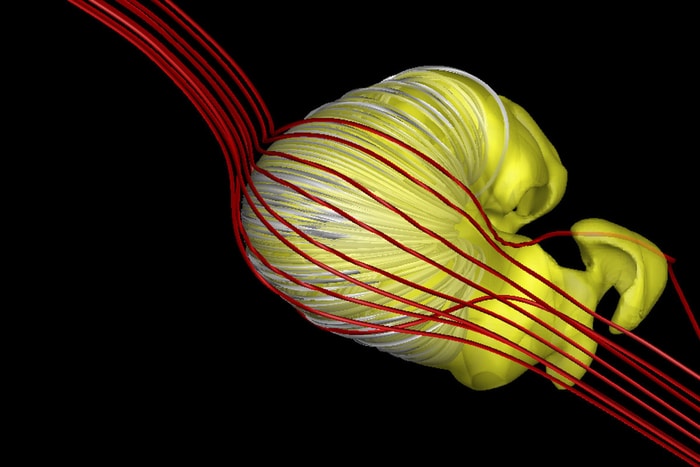

Similarly, SHIELD models predict that the heliosphere, traveling in tandem with our sun and encompassing our solar system, doesn’t appear to be stable. Other models of the heliosphere developed by other astrophysicists tend to depict the heliosphere as having a comet-like shape, with a jet—or a “tail”—streaming behind in its wake. In contrast, Opher’s model suggests the heliosphere is shaped more like a croissant or even a donut.

The reason for that? Neutral hydrogen particles, so-called because they have equal amounts of positive and negative charges that net no charge at all.

“They come streaming through the solar system,” Opher says. Using a computational model like a recipe to test the effect of ‘neutrals’ on the shape of the heliosphere, she “took one ingredient out of the cake—the neutrals—and noticed that the jets coming from the sun, shaping the heliosphere, become super stable. When I put them back in, things start bending, the center axis starts wiggling, and that means that something inside the heliospheric jets is becoming very unstable.”

Instability like that would theoretically cause disturbance in the solar winds and jets emanating from our sun, causing the heliosphere to split its shape—into a croissant-like form. Although astrophysicists haven’t yet developed ways to observe the actual shape of the heliosphere, Opher’s model suggests the presence of neutrals slamming into our solar system would make it impossible for the heliosphere to flow uniformly like a shooting comet. And one thing is for sure—neutrals are pelting their way through space.

Drake, a coauthor on the new study, says Opher’s model “offers the first clear explanation for why the shape of the heliosphere breaks up in the northern and southern areas, which could impact our understanding of how galactic cosmic rays come into Earth and the near-Earth environment.” That could affect the threat that radiation poses to life on Earth and also for astronauts in space or future pioneers attempting to travel to Mars or other planets.

“The universe is not quiet,” Opher says. “Our BU model doesn’t try to cut out the chaos, which has allowed me to pinpoint the cause [of the heliosphere’s instability]…. The neutral hydrogen particles.”

Specifically, the presence of the neutrals colliding with the heliosphere triggers a phenomenon well known by physicists, called the Rayleigh-Taylor instability, which occurs when two materials of different densities collide, with the lighter material pushing against the heavier material. It’s what happens when water is suspended above oil, or whenever a heavier fluid sits above a lighter one. Gravity plays a role and gives rise to some wildly irregular shapes. In the case of the cosmic jets, the drag between the neutral hydrogen particles and charged ions creates an effect similar to that of gravity. The “fingers” seen in the famous Horsehead Nebula, for example, are caused by the Rayleigh-Taylor instability.
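For readers who want a feel for the numbers, the textbook version of the Rayleigh-Taylor instability can be sketched in a few lines of Python. This is only an illustration of the classical, idealized case (two uniform fluids, no viscosity or magnetic fields), not the SHIELD model or the method used in the paper. The function names, the `g_eff` parameter standing in for a gravity-like acceleration such as drag, and the sample values are all assumptions made for this example.

```python
import math


def atwood_number(rho_heavy: float, rho_light: float) -> float:
    """Dimensionless density contrast between the two fluids."""
    return (rho_heavy - rho_light) / (rho_heavy + rho_light)


def rt_growth_rate(rho_above: float, rho_below: float,
                   g_eff: float, wavelength: float) -> float:
    """Classical linear growth rate sqrt(A * g * k) of a ripple on the interface.

    rho_above / rho_below: densities of the fluid on top and underneath.
    g_eff: effective acceleration pointing from top to bottom (hypothetical
           stand-in for gravity or a gravity-like drag force).
    wavelength: size of the ripple on the interface.
    Returns 0.0 when the lighter fluid is on top, because that layering is stable.
    """
    a = atwood_number(rho_above, rho_below)
    if a <= 0:
        return 0.0  # light over heavy (oil floating on water): no instability
    k = 2 * math.pi / wavelength  # wavenumber of the ripple
    return math.sqrt(a * g_eff * k)


if __name__ == "__main__":
    # Illustrative values only: water (1000 kg/m^3) over oil (900 kg/m^3),
    # Earth gravity, a 1 cm ripple.
    unstable = rt_growth_rate(1000.0, 900.0, 9.81, 0.01)
    stable = rt_growth_rate(900.0, 1000.0, 9.81, 0.01)
    print(f"heavy over light: ripples grow at about {unstable:.1f} per second")
    print(f"light over heavy: growth rate {stable:.1f} (stable)")
```

In this idealized picture, shorter ripples and larger density contrasts grow faster, which is one way to see why an unstable interface quickly develops finger-like structures.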

“This finding is a breakthrough. It’s set us in a direction of discovering why our model gets its distinct croissant-shaped heliosphere and why other models don’t,” Opher says.
