- Since the study was completed, several of the predicted mutations appeared in omicron, the most recently identified SARS-CoV-2 variant, offering insight into how omicron might be able to escape immune defense generated by mRNA vaccines and monoclonal antibody treatments for COVID-19.

- The researchers modeled their predictions of future mutations using a combination of variables, including rare mutations documented in immunocompromised patients, existing SARS-CoV-2 genotypes, and the virus’s current molecular structure and behavior.

- Findings highlight the ability of SARS-CoV-2 to shape-shift, underscoring the likelihood of new variants that contain multiple high-risk mutations and are capable of evading antibody-based treatments and vaccines.

- The study highlights the urgent need to help curb viral evolution and future mutations through mitigation measures and by ensuring global immunity through mass vaccination.

To predict the future evolutionary maneuvers of SARS-CoV-2, a research team led by investigators at Harvard Medical School has identified several likely mutations that would allow the virus to evade immune defenses, including natural immunity acquired through infection, immunity developed from vaccination, and antibody-based treatments.

The study, published Dec. 2 in Science as an accelerated publication for immediate release, was designed to gauge how SARS-CoV-2 might evolve as it continues to adapt to its human hosts and, in doing so, to help public health officials and scientists prepare for future mutations.

Indeed, as the research was nearing publication, a new variant of concern, dubbed omicron, entered the scene and was subsequently found to contain several of the antibody-evading mutations the researchers predicted in the newly published paper. As of Dec. 1, omicron has been identified in 25 countries in Africa, Asia, Australia, Europe, and North and South America, a list that is growing daily.

The researchers caution that the study findings are not directly applicable to omicron because how this specific variant behaves will depend on the interplay among its own unique set of mutations (at least 30 in the viral spike protein) and on how it competes against other active strains circulating in populations around the world. Nonetheless, the researchers said, the study gives important clues about particular areas of concern with omicron and also serves as a primer on other mutations that might appear in future variants.

“Our findings suggest that great caution is advised with omicron because these mutations have proven quite capable of evading monoclonal antibodies used to treat newly infected patients and antibodies derived from mRNA vaccines,” said study senior author Jonathan Abraham, assistant professor of microbiology in the Blavatnik Institute at HMS and an infectious disease specialist at Brigham and Women’s Hospital. The researchers did not study response to antibodies developed from non-mRNA vaccines.

The longer the virus continues to replicate in humans, Abraham noted, the more likely it is that it will continue to evolve novel mutations that develop new ways to spread in the face of existing natural immunity, vaccines, and treatments. That means that public health efforts to prevent the spread of the virus, including mass vaccinations worldwide as soon as possible, are crucial both to prevent illness and to reduce opportunities for the virus to evolve, Abraham said.

The findings also highlight the importance of ongoing anticipatory research into the potential future evolution of not only SARS-CoV-2 but other pathogens as well, the researchers said.

“To get out of this pandemic, we need to stay ahead of this virus, as opposed to playing catch up,” said study co-lead author Katherine Nabel, a fifth-year student in the Harvard/MIT MD-PhD Program. “Our approach is unique in that instead of studying individual antibody mutations in isolation, we studied them as part of composite variants that contain many simultaneous mutations at once—we thought this might be where the virus was headed. Unfortunately, this seems to be the case with omicron.”

Many studies have examined the mechanisms by which newly dominant SARS-CoV-2 strains resist the ability of antibodies to prevent infection and serious disease.

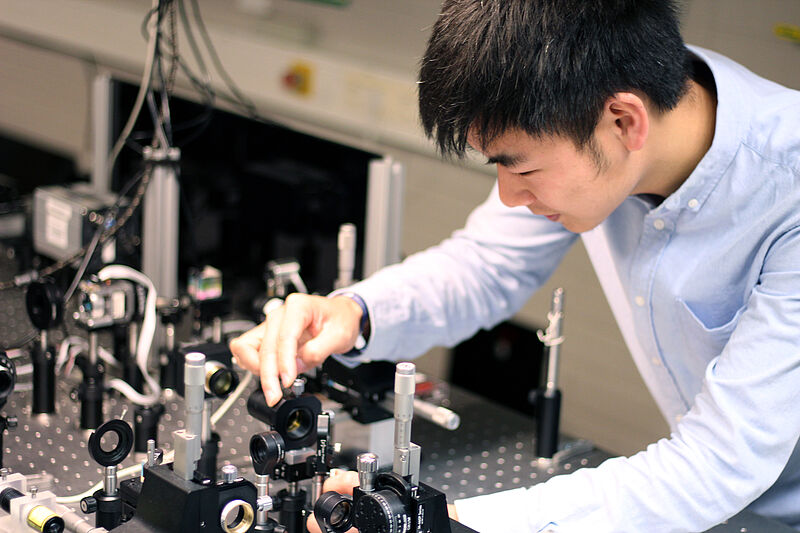

This past summer, instead of waiting to see what the next new variant might bring, Abraham set out to determine how possible future mutations might impact the virus’s ability to infect cells and to evade immune defenses—work that he did in collaboration with colleagues from HMS, Brigham and Women’s Hospital, Massachusetts General Hospital, Harvard Pilgrim Health Care Institute, Harvard T.H. Chan School of Public Health, Boston University School of Medicine and National Emerging Infectious Diseases Laboratories (NEIDL), and AbbVie Bioresearch Center.

To estimate how the virus might transform itself next, the researchers followed clues in the chemical and physical structure of the virus and looked for rare mutations found in immunocompromised individuals and in a global database of virus sequences. In lab-based studies using non-infectious virus-like particles, the researchers found combinations of multiple, complex mutations that would allow the virus to infect human cells while reducing or neutralizing the protective power of antibodies.

The researchers focused on a part of the coronavirus’s spike protein called the receptor-binding domain, which the virus uses to latch on to human cells. The spike protein allows the virus to enter human cells, where it initiates self-replication and, eventually, leads to infection. Most antibodies function by locking on to the same locations on the virus’s spike protein receptor-binding domain to block it from entering cells and causing infection.

Mutation and evolution are a normal part of a virus’s natural history. Every time a new copy of a virus is made, there’s a chance that a copy error—a genetic typo—might be introduced. As a virus encounters selective pressure from the host’s immune system, copy errors that allow the virus to avoid being blocked by existing antibodies have a better chance of surviving and continuing to replicate. Mutations that allow a virus to evade antibodies in this way are known as escape mutations.

The researchers demonstrated that the virus could develop large numbers of simultaneous escape mutations while retaining the ability to connect to the receptors it needs to infect a human cell. The team worked with so-called pseudotype viruses, lab-made stand-ins for a virus constructed by combining harmless, noninfectious virus-like particles with pieces of the SARS-CoV-2 spike protein containing the suspected escape mutations. The experiments showed that pseudotype viruses containing up to seven of these escape mutations are more resistant to neutralization by therapeutic antibodies and serum from mRNA vaccine recipients.

This level of complex evolution had not been seen in widespread strains of the virus at the time the researchers began their experiments. But with the emergence of the omicron variant, this level of complex mutation in the receptor-binding domain is no longer hypothetical. The delta variant had only two mutations in its receptor-binding domain, and the pseudotypes Abraham’s team studied had up to seven. Omicron appears to have 15, including several of the specific mutations that his team analyzed.

In a series of experiments, the researchers performed biochemical assays to see how antibodies would bind to spike proteins containing escape mutations. Several of the mutations, including some of those found in omicron, enabled the pseudotypes to completely evade therapeutic antibodies, including those found in monoclonal antibody cocktail therapies.

The researchers also found one antibody that was able to neutralize all of the tested variants effectively. However, they also noted that the virus would be able to evade that antibody if the spike protein developed a single mutation that adds a sugar molecule at the location where the antibody binds to the virus. That, in essence, would prevent the antibody from doing its job.

The researchers noted that in rare instances, circulating strains of SARS-CoV-2 have been found to gain this mutation. When this happens, it is likely the result of selective pressure from the immune system, the researchers said. Understanding the role of this rare mutation, they added, is critical to being better prepared before it emerges as part of dominant strains.

While the researchers did not directly study the pseudotype virus’s ability to escape immunity from natural infection, findings from the team’s previous work with variants carrying fewer mutations suggest that these newer, highly mutated variants would also adeptly evade antibodies acquired through natural infection.

In another experiment, the pseudotypes were exposed to blood serum from individuals who had received an mRNA vaccine. For some of the highly mutated variants, serum from single-dose vaccine recipients completely lost the ability to neutralize the virus. In samples taken from people who had received a second dose of vaccine, the vaccine retained at least some effectiveness against all variants, including some extensively mutated pseudotypes.

The researchers note that their analysis suggests that repeated immunization even with the original spike protein antigen may be critical to countering highly mutated SARS-CoV-2 spike protein variants.

“This virus is a shape-shifter,” Abraham said. “The great structural flexibility we saw in the SARS-CoV-2 spike protein suggests that omicron is not likely to be the end of the story for this virus.”
