Woolpert has been commissioned by the Florida Department of Environmental Protection (FDEP) to acquire bathymetric survey data using lidar. The data supports the Florida Seafloor Mapping Initiative (FSMI), whose goal is to create a detailed, accessible, high-resolution model of Florida's coastal waters by 2026. It will also help assess the effectiveness of restoration projects and promote resilience along the coast.

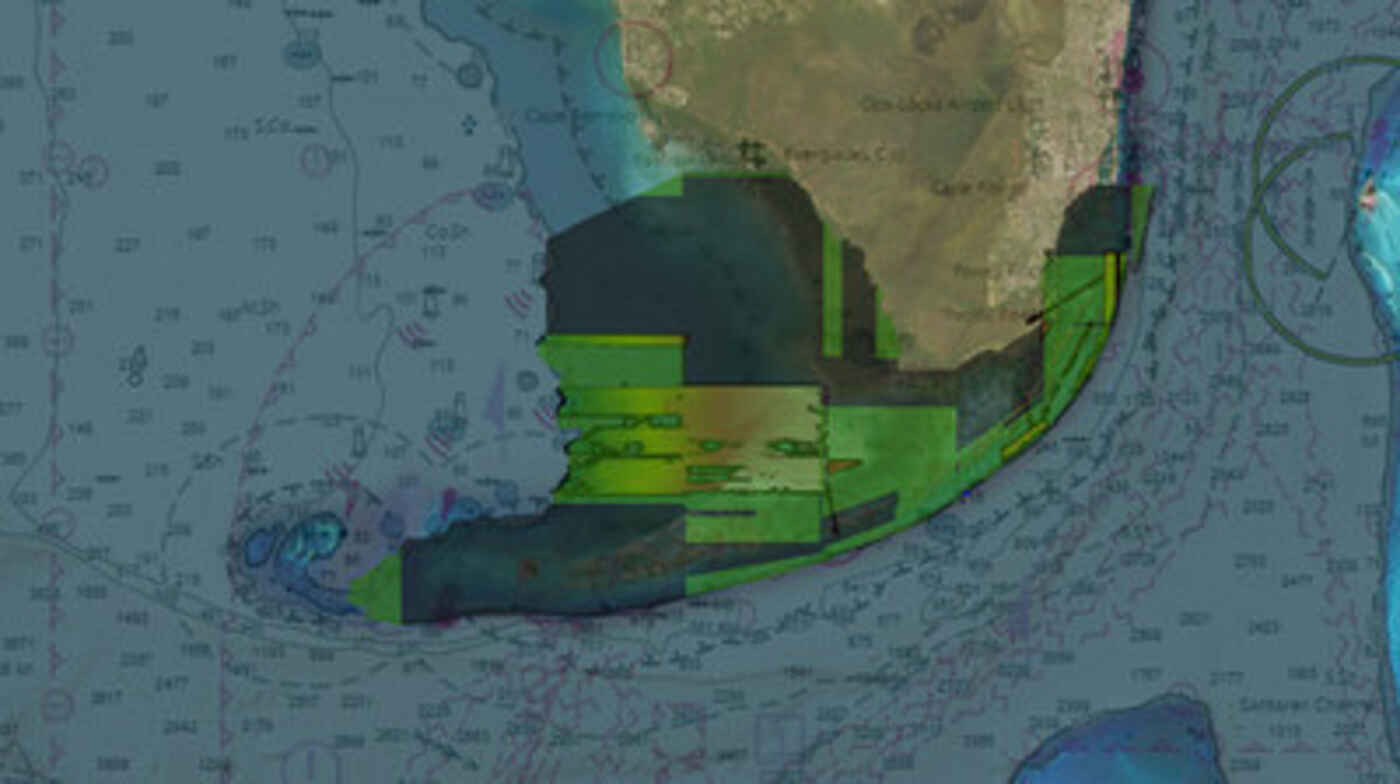

The image shows bathymetric survey data rendered as a digital map, with shades of blue and green representing the depths of Florida's coastal waters. The map resolves fine-scale features of the seafloor in considerable detail.

The FSMI project continues the work of the Florida Coastal Mapping Program (FCMaP), which brought together federal and state agencies and community stakeholders to gather detailed seafloor data spanning 171,780 square kilometers along Florida's coastline. Once complete, the FSMI data will be integrated with existing terrestrial lidar data. Federal and state agencies will use the combined dataset to understand coastal vulnerability and the effects of hurricanes in the region, assess the success of restoration initiatives, and support ongoing efforts to promote coastal resilience and map flood risk.

Under this task order, Woolpert will collect bathymetric lidar data along Florida's southern coast, including the Florida Keys, extending southwest to Dry Tortugas National Park. The survey area totals 23,418 square kilometers.

According to Rick Householder, Program Director at Woolpert, data collection will take place in two phases. In Phase I, aircraft will acquire topographic and bathymetric lidar data to depths of up to 20 meters. In Phase II, marine vessels equipped with multibeam sonar will collect data at depths from 20 meters to 200 meters.

Householder emphasized the project's potential impact on assessing the health of marine habitats, disaster response, and resilience efforts. He stated that the information gathered through this initiative will benefit the state of Florida for years to come. He also believes the project can serve as a model for future seafloor mapping initiatives across the continental U.S.

The entire dataset is expected to be collected by May of next year and delivered by summer 2024. Work under the contract is currently under way.