Professor Paul Bellan and his research team at Caltech have made a discovery that challenges the conventional understanding of plasma. Their experiments with magnetically accelerated plasma jets show that high-energy electrons, and the X-rays they produce, can arise in relatively "cold" plasma. The unexpected finding opens up new possibilities for scientific exploration.

The Journey of the Plasma Jet

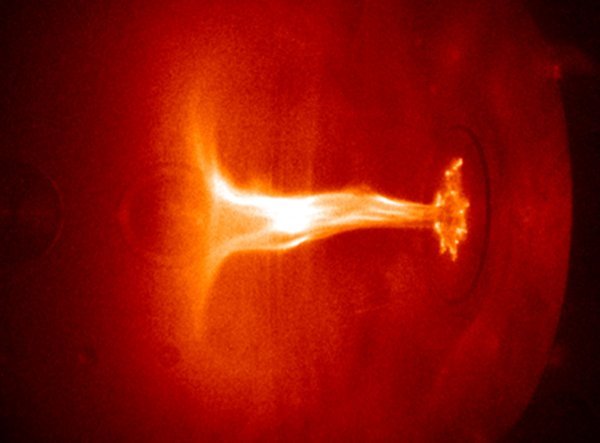

Bellan's research involves creating magnetically accelerated jets of plasma within a vacuum chamber. The team ionizes gas, applies a high voltage, and generates strong magnetic fields, which together shape the plasma into a fast-moving jet. Observations show that these jets pass through several distinct stages of evolution within a matter of microseconds.

The plasma jet initially takes the shape of an umbrella and gradually extends in length. Once it reaches a certain point, it transforms into a rapidly expanding corkscrew shape due to instability. This rapid expansion triggers another instability, leading to the formation of ripples within the jet. These ripples play a crucial role in accelerating electrons to high energies.

The Choking Effect and Electron Acceleration

The ripples within the plasma jet effectively "choke" the electric current flowing through it. This choking effect is similar to placing one's thumb over a water hose, restricting the flow and creating a pressure gradient that accelerates the water. In this case, the choked jet current generates an electric field strong enough to accelerate electrons to high energy levels.

Previously, cold plasmas were thought to be incapable of generating high-energy electrons because they are highly collisional. Like a driver trying to speed through gridlocked traffic, an electron in a cold plasma collides with other particles before it can gain much energy. Bellan's experiments contradict this view: they show that high-energy electrons can be produced in cold plasma when it is formed into magnetically accelerated jets.

To understand how the high-energy electrons were produced, Bellan developed a supercomputer code that simulated the behavior of thousands of electrons and ions deflecting off each other within an electric field. By varying the parameters and observing how the electrons' behavior changed, he sought to pinpoint what drives their acceleration.

As the electrons accelerated in the electric field, they occasionally transferred energy to nearby ions, exciting the ions to emit visible light and slowing themselves down. Most encounters, however, merely deflected an electron slightly without exciting the ion. Because these energy transfers were only occasional, a few electrons avoided them and kept accelerating until they reached high energies.
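The idea can be illustrated with a toy Monte Carlo sketch. This is not Bellan's code and does not model real collision physics. It assumes each electron gains a fixed amount of energy per step from the field and, with a small fixed probability, hands all of its energy to an ion. The function name, parameters, and numerical values are hypothetical and chosen only for illustration.

```python
import random


def simulate_electrons(n_electrons=5000, n_steps=200, gain_per_step=1.0,
                       p_excite=0.02, seed=0):
    """Toy model: return each electron's final energy (arbitrary units).

    Assumptions (illustrative only, not taken from the experiment):
    - the field adds `gain_per_step` of energy on every step;
    - on each step, with probability `p_excite`, the electron excites
      an ion and loses all the energy it has accumulated so far.
    """
    rng = random.Random(seed)
    energies = []
    for _ in range(n_electrons):
        energy = 0.0
        for _ in range(n_steps):
            energy += gain_per_step        # work done by the electric field
            if rng.random() < p_excite:    # rare exciting collision
                energy = 0.0               # energy handed to the ion
        energies.append(energy)
    return energies


n_steps = 200
gain_per_step = 1.0
energies = simulate_electrons(n_steps=n_steps, gain_per_step=gain_per_step)

# Electrons that were never slowed end with the maximum possible energy.
max_energy = n_steps * gain_per_step
never_slowed = sum(1 for e in energies if e == max_energy)
fraction = never_slowed / len(energies)
print(f"fraction of electrons never slowed: {fraction:.4f}")
```

In this simplified picture, the chance that an electron escapes every exciting collision is (1 - 0.02)^200, or roughly 1.8 percent, so only a small minority reaches the maximum energy while the rest are repeatedly slowed.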

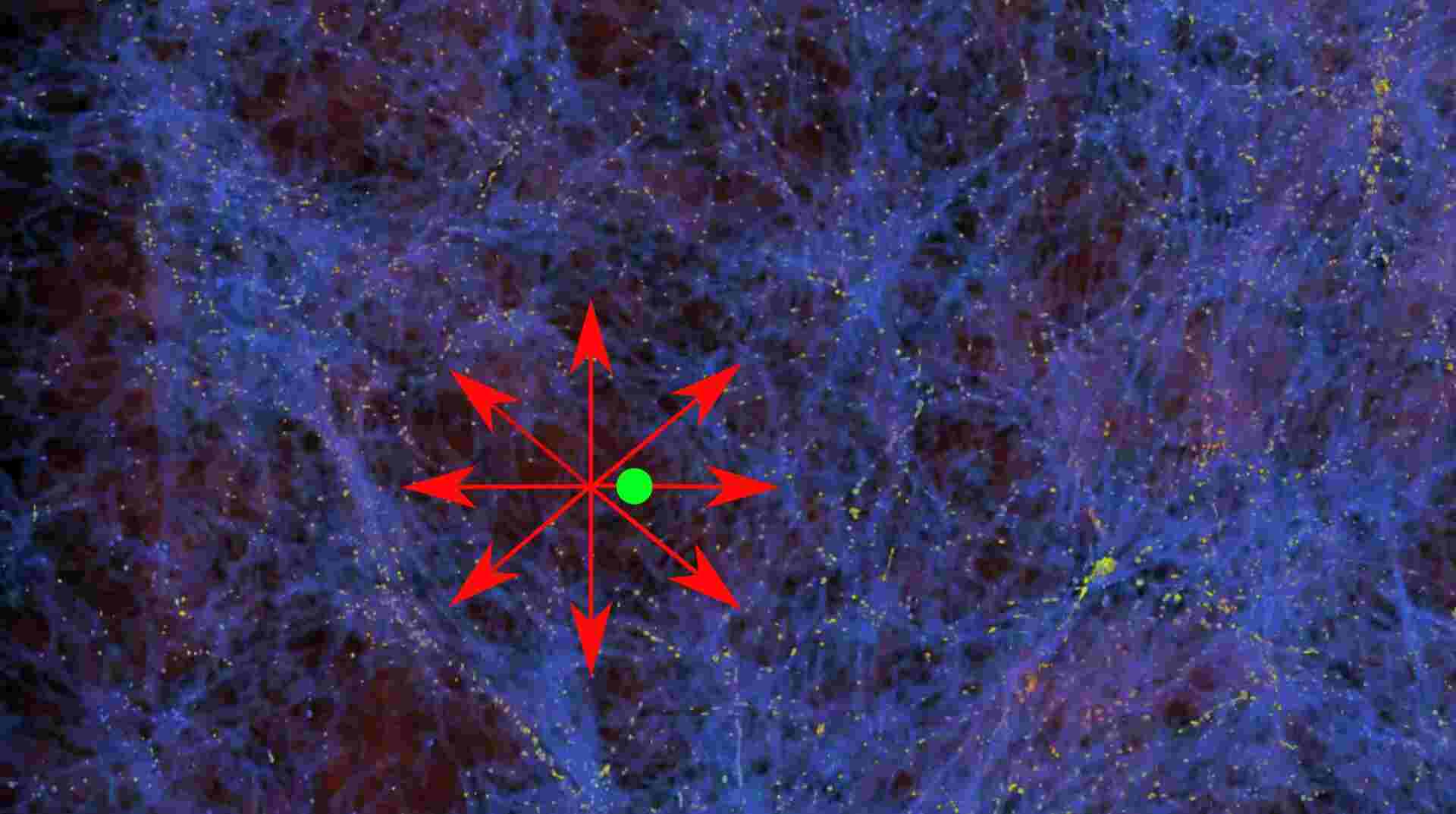

This discovery has implications for astrophysics and fusion energy research, as similar processes may occur in solar flares and other phenomena. Understanding the mechanisms behind electron acceleration in cold plasma opens up new avenues for research and potentially redefines our understanding of plasma physics. While the X-ray generation in cold plasma may not be directly applicable to practical fusion energy, it provides valuable insights into the underlying mechanisms at play.

As scientists continue to probe the complexities of plasma dynamics, the interplay between particles and fields may hold the key to further discoveries of this kind.
