The iconic Red List of Threatened Species, published by the International Union for Conservation of Nature (IUCN), identifies species at risk of extinction. A study publishing May 26th in PLOS Biology by Gabriel Henrique de Oliveira Caetano at Ben-Gurion University of the Negev, Israel, and colleagues presents a novel machine learning tool for assessing extinction risk and then uses this tool to show that reptile species that are unlisted due to lack of assessment or data are more likely to be threatened than assessed species.

The IUCN’s Red List of Threatened Species is the most comprehensive assessment of the extinction risk of species and informs conservation policy and practices globally. However, the process for categorizing species is laborious and subject to bias, depending heavily on manual curation by human experts; many animal species have therefore not been evaluated, or lack sufficient data, creating gaps in protective measures.

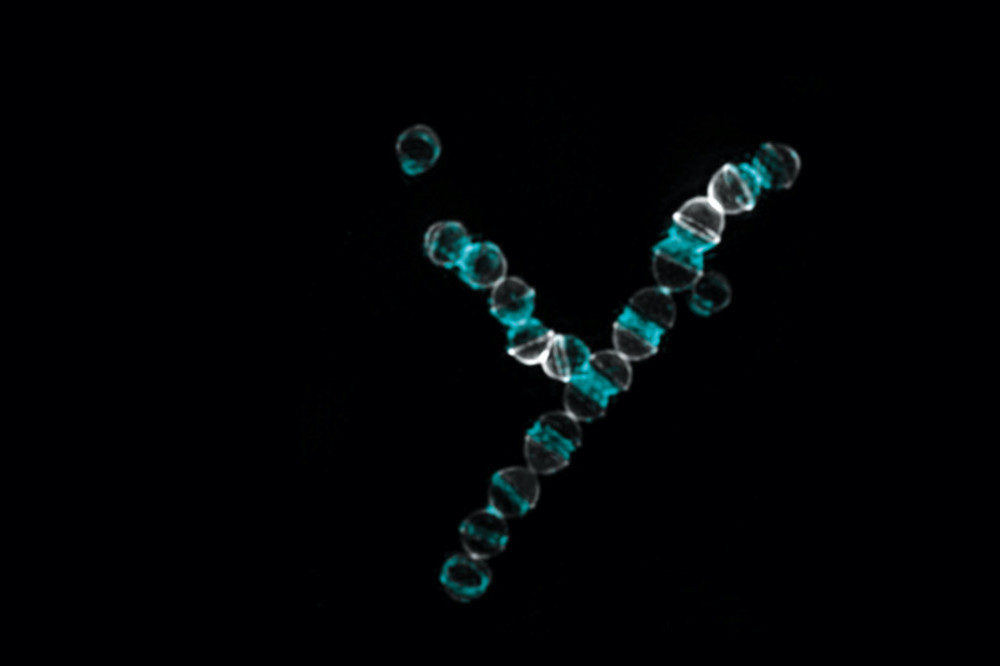

To assess 4,369 reptile species that previously could not be prioritized for conservation, and to develop accurate methods for assessing the extinction risk of obscure species, the researchers created a machine learning supercomputer model. The model assigned IUCN extinction risk categories to the 40% of the world’s reptiles that lacked published assessments or were classified as “DD” (“Data Deficient”) at the time of the study. The researchers validated the model’s accuracy by comparing its predictions to the Red List risk categorizations.

The researchers found that the number of threatened species is much higher than reflected in the IUCN Red List and that both unassessed (“Not Evaluated” or “NE”) and Data Deficient reptiles were more likely to be threatened than assessed species. Future studies are needed to better understand the specific factors underlying extinction risk in threatened reptile taxa, to obtain better data on obscure reptile taxa, and to create conservation plans that include newly identified threatened species.

According to the authors, “Altogether, our models predict that the state of reptile conservation is far worse than currently estimated and that immediate action is necessary to avoid the disappearance of reptile biodiversity. Regions and taxa we identified as likely to be more threatened should be given increased attention in new assessments and conservation planning. Lastly, the method we present here can be easily implemented to help bridge the assessment gap on other less known taxa.”

Coauthor Shai Meiri adds, “Importantly, the additional reptile species identified as threatened by our models are not distributed randomly across the globe or the reptilian evolutionary tree. Our added information highlights that there are more reptile species in peril – especially in Australia, Madagascar, and the Amazon basin – all of which have a high diversity of reptiles and should be targeted for the extra conservation efforts. Moreover, species-rich groups, such as geckos and elapids (cobras, mambas, coral snakes, and others), are probably more threatened than the Global Reptile Assessment currently highlights; these groups should also be the focus of more conservation attention.”

Coauthor Uri Roll adds, “Our work could be very important in helping the global efforts to prioritize the conservation of species at risk – for example using the IUCN red-list mechanism. Our world is facing a biodiversity crisis, and severe man-made changes to ecosystems and species, yet funds allocated for conservation are very limited. Consequently, it is key that we use these limited funds where they could provide the most benefits. Advanced tools, such as those we have employed here, together with accumulating data, could greatly cut the time and cost needed to assess extinction risk, and thus pave the way for more informed conservation decision making.”
