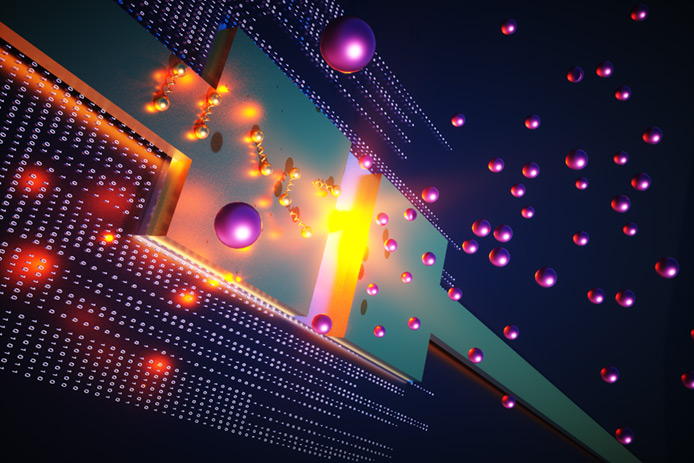

The promise of a quantum internet depends on the complexities of harnessing light to transmit quantum information over fiber optic networks. A potential step forward was reported today by researchers in Sweden who developed integrated chips that can generate light particles on demand and without the need for extreme refrigeration.

Quantum supercomputing today relies on states of matter, that is, electrons that carry qubits of information to perform multiple calculations simultaneously, in a fraction of the time classical supercomputing takes.

A co-author of the research, Val Zwiller, Professor at KTH Royal Institute of Technology, says that to integrate quantum supercomputing seamlessly with the fiber-optic networks used by the internet today, a more promising approach would be to harness optical photons.

"The photonic approach offers a natural link between communication and computation," he says. "That's important since the end goal is to transmit the processed quantum information using light."

But for photons to deliver qubits on demand in quantum systems, they need to be emitted in a deterministic, rather than probabilistic, fashion. This can be accomplished at extremely low temperatures in artificial atoms, but today the research group at KTH reported a way to make it work at room temperature in optical integrated circuits.

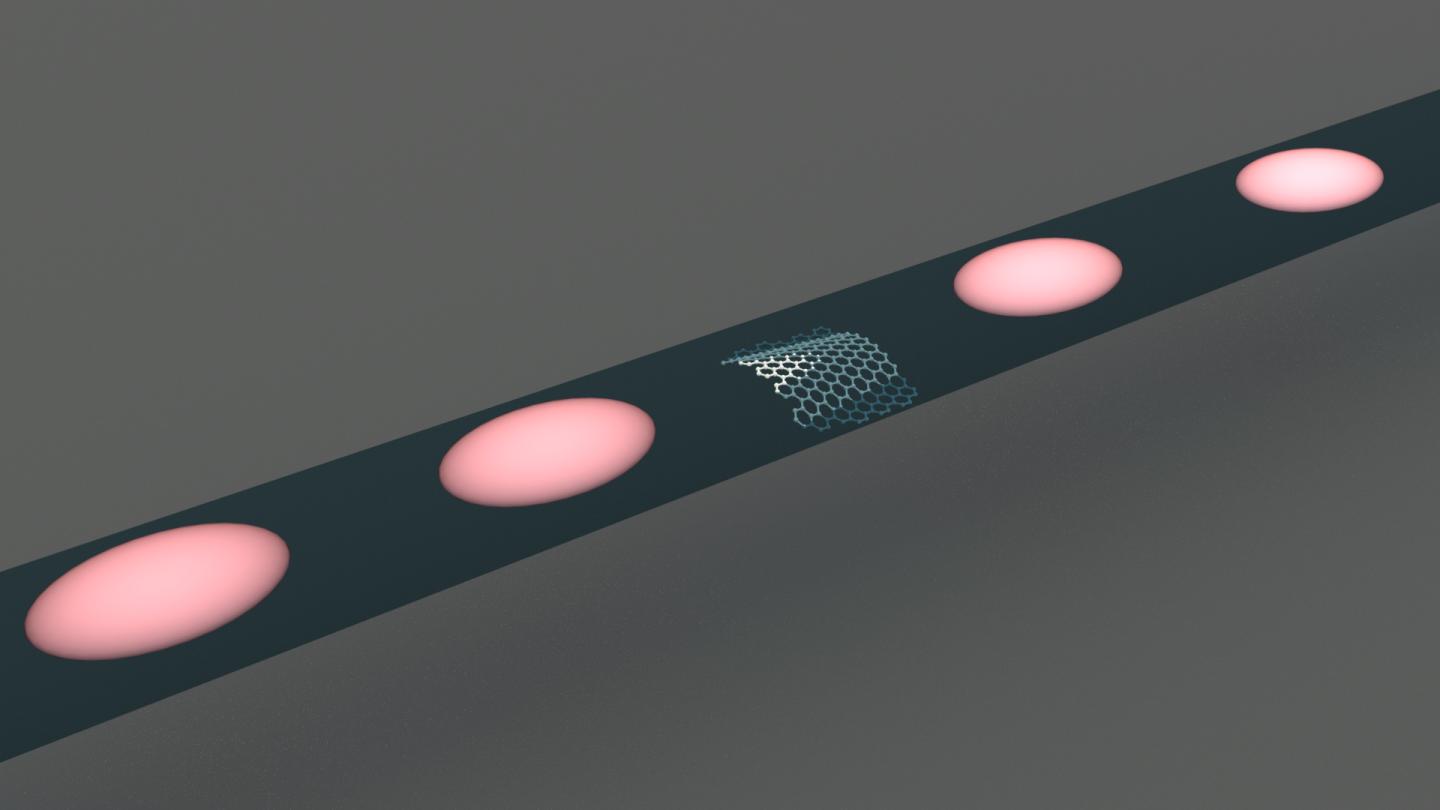

The new method enables photon emitters to be precisely positioned in integrated optical circuits, which resemble copper wires for electricity except that they carry light, says co-author Ali Elshaari, Associate Professor at KTH Royal Institute of Technology.

The researchers harnessed the single-photon-emitting properties of hexagonal boron nitride (hBN), a layered material. hBN is a compound commonly used in ceramics, alloys, resins, plastics, and rubbers to give them self-lubricating properties. They integrated the material with silicon nitride waveguides to direct the emitted photons.

Quantum circuits with light are operated either at cryogenic temperatures, about 4 kelvin above absolute zero, using atom-like single-photon sources, or at room temperature using random single-photon sources, Elshaari says. By contrast, the technique developed at KTH enables optical circuits with on-demand emission of light particles at room temperature.

"In existing optical circuits operating at room temperature, you never know when the single photon is generated unless you do a heralding measurement," Elshaari says. "We realized a deterministic process that precisely positions light-particle emitters operating at room temperature in an integrated photonic circuit."

The researchers reported coupling hBN single-photon emitters to silicon nitride waveguides, and they developed a method to image the quantum emitters. Then, in a hybrid approach, the team built the photonic circuits at the locations of the quantum sources using a series of steps involving electron beam lithography and etching, while still preserving the high quality of the quantum light.

The achievement opens a path to hybrid integration, that is, incorporating atom-like single-photon emitters into photonic platforms that cannot emit light efficiently on demand.
