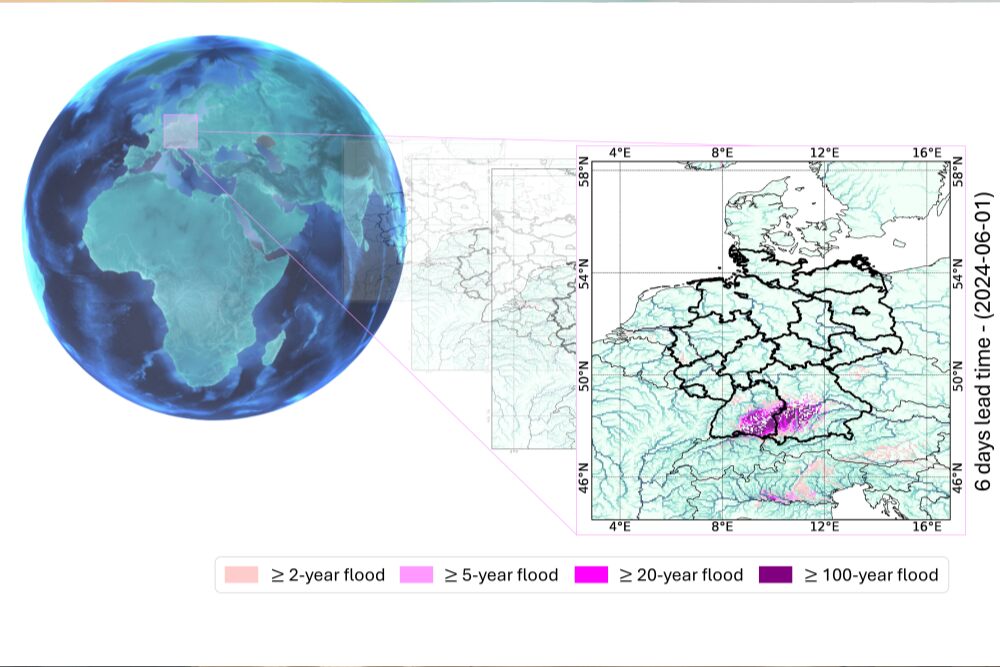

In an era of increasingly unpredictable river fluctuations, a new tool from the Lamarr Institute for Machine Learning and Artificial Intelligence in Germany may represent a significant advance. The institute recently introduced RiverMamba, a deep-learning model designed to forecast river discharge and fluvial floods on a global grid.

Researchers indicate that RiverMamba can generate forecasts of global river discharge on a 0.05° grid (approximately 5 km resolution) with a lead time of up to seven days.
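To make the "0.05° is approximately 5 km" figure concrete, here is a minimal sketch of the underlying arithmetic. It assumes a spherical Earth with a mean radius of 6371 km and a regular latitude-longitude grid; the function names and the approximate Kansas City latitude are illustrative choices, not taken from the RiverMamba work.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius (spherical approximation, an assumption)


def cell_size_km(resolution_deg: float, latitude_deg: float) -> tuple[float, float]:
    """Return the (north-south, east-west) extent in km of one grid cell."""
    km_per_degree = math.pi * EARTH_RADIUS_KM / 180.0  # length of one degree of latitude
    north_south = resolution_deg * km_per_degree
    # Meridians converge toward the poles, so east-west extent shrinks with cos(latitude).
    east_west = north_south * math.cos(math.radians(latitude_deg))
    return north_south, east_west


def global_cell_count(resolution_deg: float) -> int:
    """Number of cells in a full 360 x 180 degree grid, oceans included."""
    return round(360 / resolution_deg) * round(180 / resolution_deg)


if __name__ == "__main__":
    # Latitude for Kansas City is an approximate, illustrative value.
    for name, lat in [("equator", 0.0), ("Kansas City (approx. 39.1 N)", 39.1)]:
        ns, ew = cell_size_km(0.05, lat)
        print(f"{name}: {ns:.2f} km north-south x {ew:.2f} km east-west")
    print(f"cells in a global 0.05 degree grid: {global_cell_count(0.05):,}")
```

Under these assumptions a 0.05° cell is about 5.6 km north to south everywhere, and about 4.3 km east to west near Kansas City. A full global grid at this resolution has 7,200 × 3,600 = 25,920,000 cells, although a river model would presumably use only the land portion of them.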

Key technical features include:

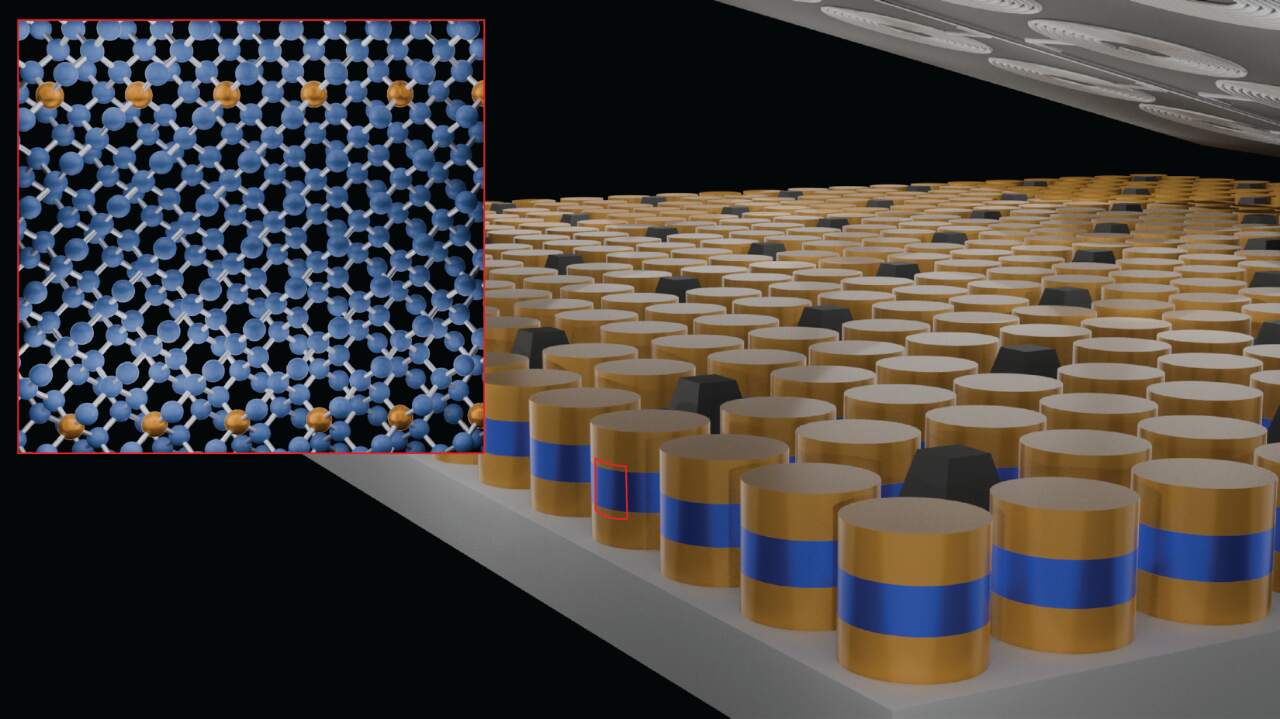

- "Mamba blocks" (bidirectional state-space modules) that model the spatio-temporal routing of rivers and meteorological forcing.

- Integration of long-term reanalysis data (e.g., ERA5-Land), static river attributes, and meteorological forecasts (ECMWF HRES) to inform predictions.

- The developers assert that RiverMamba "surpasses both operational AI- and physics-based models" in forecasting accuracy for extreme events.
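For readers unfamiliar with the term, the idea behind a "bidirectional state-space module" can be sketched with a toy example. The code below is not RiverMamba's implementation: it uses a single channel and fixed scalar parameters `a`, `b`, and `c` (all hypothetical), whereas real Mamba blocks learn their parameters and make them depend on the input. It only illustrates how scanning a sequence in both directions lets each position draw on context from either side.

```python
import numpy as np


def ssm_scan(x: np.ndarray, a: float, b: float, c: float) -> np.ndarray:
    """Linear state-space recurrence: h[t] = a*h[t-1] + b*x[t], y[t] = c*h[t]."""
    h = 0.0
    y = np.empty_like(x, dtype=float)
    for t, x_t in enumerate(x):
        h = a * h + b * x_t  # hidden state carries a decaying memory of past inputs
        y[t] = c * h
    return y


def bidirectional_scan(x: np.ndarray, a: float = 0.5, b: float = 1.0, c: float = 1.0) -> np.ndarray:
    """Sum of a forward scan and a backward scan over the same sequence."""
    forward = ssm_scan(x, a, b, c)
    # Reverse the input, scan, then reverse the output so positions line up again.
    backward = ssm_scan(x[::-1], a, b, c)[::-1]
    return forward + backward


if __name__ == "__main__":
    impulse = np.array([0.0, 0.0, 1.0, 0.0, 0.0])
    print(bidirectional_scan(impulse))
```

With the default toy parameters, a single impulse in the middle of the sequence produces `[0.25, 0.5, 2.0, 0.5, 0.25]`: the signal spreads to both earlier and later positions, which a one-directional scan could not do.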

This is particularly relevant to St. Louis, Missouri, home of this year's SC25 supercomputing show, and beyond. Flood forecasting is critical in areas such as the Kansas City region, where river basins (e.g., the Missouri and Kansas rivers and their tributaries) can pose unexpected challenges. A model with global coverage and medium-range lead times offers several advantages:

- Lead-time extension: Up to seven days provides emergency planners with increased preparedness time.

- Granular spatial resolution: The 0.05° grid enables finer discrimination of catchments.

- Extreme flood modeling: Enables the analysis of rare, high-impact events, rather than just "typical" flows.

- Scalability: A global model allows for potential application beyond major rivers to smaller basins, which are often less well-monitored.

For MoveInLab and its KC-first orientation, this means that as climate change makes extreme weather more frequent, forecasting at finer scales can inform property risk assessment, neighbourhood resilience strategies, and buyer and seller consultations about flood hazard.

The model, as noted by its developers, has certain limitations. Specifically, observational data may not fully capture human interventions, such as dams and levees, and uncertainties remain in meteorological forecasting. The model's operational readiness across all catchment areas requires further demonstration. For users in the KC area, local calibration may be necessary, as global models often require adaptation to local hydro-geomorphology, urbanization patterns, and data characteristics.

Supercomputing's Advancement in River Flow Modeling

The underlying technology, supercomputing (large-scale clusters, high-performance computing, GPU farms), has broadened from modeling galactic structures to modeling much finer-grained systems, such as the flow of water through river networks. This shift is significant for several reasons:

- Data volume and velocity: Predicting floods globally at approximately 5 km resolution with a seven-day lead time requires processing terabytes of meteorological, land-surface, and hydrological data, an undertaking that posed challenges for traditional models.

- Model complexity: The Mamba blocks in RiverMamba embed spatio-temporal routing, which is computationally intensive. Without supercomputing or GPU acceleration, the model would run too slowly to be practical for real-time forecasting.

- Operational resilience: Flood-warning centers in regions like the Midwest need models that run quickly, reliably, and frequently to issue timely alerts, which supercomputing infrastructure makes possible.

- Democratization risk: Compute-heavy models demand resources (energy, hardware, expertise). If only a few institutions can operate them, the benefits may not reach underserved regions, raising equity concerns.

In summary, supercomputing is not merely "big machines doing big math" but the new infrastructure supporting Earth-system resilience. For flood forecasting, this infrastructure is finally undergoing the necessary upgrades.
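The "terabytes" claim about data volume can be sanity-checked with rough arithmetic. The sketch below assumes a full 7,200 × 3,600 global grid at 0.05°, 32-bit floats, and two purely hypothetical figures: 10 input variables and 40 years of daily time steps. None of these counts come from the RiverMamba developers; they only show the order of magnitude involved.

```python
def dataset_bytes(n_cells: int, n_variables: int, n_time_steps: int,
                  bytes_per_value: int = 4) -> int:
    """Uncompressed size of a dense gridded dataset (float32 by default)."""
    return n_cells * n_variables * n_time_steps * bytes_per_value


if __name__ == "__main__":
    n_cells = 7200 * 3600      # full global grid at 0.05 degrees, oceans included
    n_variables = 10           # hypothetical number of input variables
    n_time_steps = 40 * 365    # hypothetical: 40 years of daily steps, leap days ignored

    one_field = dataset_bytes(n_cells, 1, 1)
    total = dataset_bytes(n_cells, n_variables, n_time_steps)
    print(f"one variable, one time step: {one_field / 1e6:.1f} MB")
    print(f"full hypothetical archive:   {total / 1e12:.1f} TB")
```

Under these assumptions a single global field is about 104 MB and the full archive is about 15 TB uncompressed. Restricting the grid to land cells would shrink both figures, while adding variables or sub-daily steps would grow them.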

RiverMamba represents a significant advancement, characterized by global awareness, fine resolution, and deep-learning capabilities. For the #SC25 and STL audience, this translates to improved tools for understanding and communicating flood risk. However, it is not a "magic bullet." Local adaptation, data limitations, and access to computational resources remain crucial. The era of "smart rivers" is emerging, and the technological underpinnings are being significantly enhanced.
