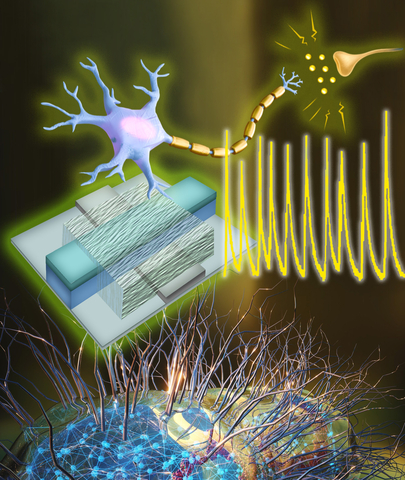

Researchers at Auburn University, in work highlighted in a press release, claim to have designed a new class of materials called "surface-immobilized electrides." These materials reportedly can host electrons free from atomic constraints, with potential applications in quantum computing and catalysis.

The announcement is ambitious, suggesting the possibility of free-electron "islands" functioning as quantum bits, and "electron seas" aiding in catalysis, along with a tunable platform for future materials. However, as with many striking scientific press releases, a healthy dose of skepticism is warranted.

What the Researchers Claim

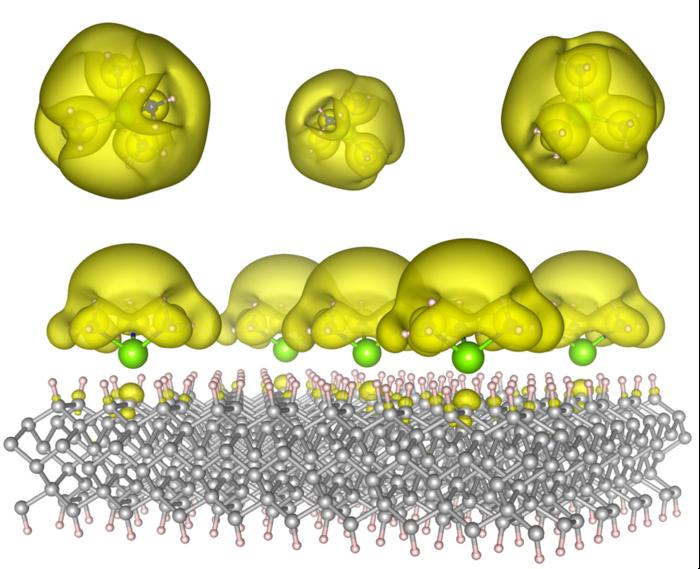

The Auburn team, publishing in ACS Materials Letters, describes a theoretical design for materials in which solvated-electron precursor molecules are anchored on rigid surfaces, such as diamond or silicon carbide.

By altering the molecular arrangement, they suggest that the electrons can adopt different states: localized "islands" that act as quantum bits, or extended metallic states that facilitate catalytic behavior.

The researchers frame this advancement as a solution to longstanding challenges with electrides (materials where electrons are loosely bound) by combining stability (through anchoring) with tunability.

The ultimate claim is bold: these materials could "change the way we compute and the way we manufacture."

Gaps, Uncertainties, and Cautionary Flags

A closer inspection raises several red flags and caveats that temper the excitement about this work:

1. No Experimental Validation Yet

The research is purely computational. There are no lab-grown samples, no spectroscopy data, no transport measurements, and no demonstration of the claimed states in real materials. All effects are predicted but not observed.

While simulations can guide experiments, they often overlook real-world complications such as defects, thermal fluctuations, interface issues, impurities, and fabrication challenges.

2. Stability and Scalability Remain Speculative

The press release emphasizes that anchoring helps improve stability compared to previous electrides, which have been notoriously fragile and sensitive to their environment. However, actually achieving a stable, air-tolerant, and scalable version in a real device is a significant leap.

Moreover, the practicality of anchoring such molecules uniformly across device-scale surfaces, along with adequate yields and reproducibility, has yet to be tested.

3. Tuning Electrons Is Harder Than It Seems

Electron-electron interactions, screening, disorder, coupling to phonons, electron leakage, and decoherence can all cause real materials to fall short of theoretical predictions. The press release glosses over these complications.

In quantum computing especially, coherence times and error correction thresholds are demanding. A material that theoretically supports a localized “island” electron does not guarantee it will behave reliably in a real qubit environment.

4. Broad Claims, Loosely Connected Applications

The press release shifts between quantum computing and catalysis, suggesting that a single class of materials could serve both purposes. This all-encompassing narrative is appealing but also indicates a lack of focus. The real-world constraints in catalysis (surface chemistry, stability in reactive conditions) differ significantly from those in a quantum processor (low noise, ultralow temperature, isolation).

Additionally, many materials proposals overpromise. The idea of “one platform to rule them all” has undermined numerous earlier proposals in materials science and quantum technology.

5. Media Framing vs. Scientific Modesty

The press release is highly promotional, using phrases like “Imagine … supercomputers that learn ….” Such language raises concerns that what is being sold is hype or, at the very least, aspirational marketing rather than solid, near-term deliverables.

Why the Work Might Still Matter, But With Caution

Despite these concerns, the computational modeling presented is nontrivial, and exploring new electron-anchoring schemes is a legitimate direction in materials science. The concept of tuning delocalization versus localization of electrons is critical to many functional materials, including superconductors, topological insulators, and 2D materials.

If the theoretical groundwork is sound, it could inspire experimentalists to undertake synthesis trials, surface chemistry approaches, or thin-film growth strategies. In this regard, the paper may serve as a generative idea rather than a fully realized technology.

Bottom Line

The Auburn team's claim that "quantum crystals" could serve as a blueprint for future computing and chemistry is an intriguing hypothesis, not yet a proven advance. Without experimental validation, and with many unknowns regarding stability, scalability, and real-world performance, readers and funders should treat this as speculative frontier research: promising, but far from certain.

Ultimately, enthusiasm should be tempered with prudent scientific caution. Time and experimentation will reveal whether these innovative electron designs can withstand the challenges presented by real-world materials.
