Analyzing more than two decades' worth of supernova explosions convincingly bolsters modern cosmological theories and reinvigorates efforts to answer fundamental questions.

Astrophysicists have performed a powerful new analysis that places the most precise limits yet on the composition and evolution of the universe. With this analysis, dubbed Pantheon+, cosmologists find themselves at a crossroads.

Pantheon+ convincingly finds that the cosmos is composed of about two-thirds dark energy and one-third matter, mostly in the form of dark matter, and that its expansion has been accelerating over the last several billion years. However, Pantheon+ also cements a major disagreement over the pace of that expansion that has yet to be resolved.

By putting prevailing modern cosmological theories, known as the Standard Model of Cosmology, on even firmer evidentiary and statistical footing, Pantheon+ further closes the door on alternative frameworks accounting for dark energy and dark matter. Both are bedrocks of the Standard Model of Cosmology but have yet to be directly detected and rank among the model's biggest mysteries. Following through on the results of Pantheon+, researchers can now pursue more precise observational tests and hone explanations for the observable cosmos.

"With these Pantheon+ results, we are able to put the most precise constraints on the dynamics and history of the universe to date," says Dillon Brout, an Einstein Fellow at the Center for Astrophysics | Harvard & Smithsonian. "We've combed over the data and can now say with more confidence than ever before how the universe has evolved over the eons and that the current best theories for dark energy and dark matter hold strong."

Brout is the lead author of a series of papers describing the new Pantheon+ analysis, published jointly today in a special issue of The Astrophysical Journal.

Pantheon+ is based on the largest dataset of its kind, comprising more than 1,500 stellar explosions called Type Ia supernovae. These bright blasts occur when white dwarf stars — remnants of stars like our Sun — accumulate too much mass and undergo a runaway thermonuclear reaction. Because Type Ia supernovae outshine entire galaxies, the stellar detonations can be glimpsed at distances exceeding 10 billion light years, or back through about three-quarters of the universe's total age. Given that the supernovae blaze with nearly uniform intrinsic brightnesses, scientists can use the explosions' apparent brightness, which diminishes with distance, along with redshift measurements as markers of time and space. That information, in turn, reveals how fast the universe expands during different epochs, which is then used to test theories of the fundamental components of the universe.
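The standard-candle reasoning above can be illustrated with a minimal sketch. It assumes a single typical peak absolute magnitude for Type Ia supernovae (the value below is a commonly quoted approximation, not the Pantheon+ calibration) and ignores redshift corrections, dust, and the light-curve standardization that a real analysis applies. The function names are hypothetical.

```python
import math

# Assumed, illustrative peak absolute magnitude for a Type Ia supernova.
# This is NOT a value taken from the Pantheon+ analysis.
ASSUMED_ABS_MAG = -19.3


def distance_parsecs(apparent_mag, absolute_mag=ASSUMED_ABS_MAG):
    """Invert the distance modulus m - M = 5 * log10(d / 10 pc)."""
    return 10.0 * 10 ** ((apparent_mag - absolute_mag) / 5.0)


def relative_flux(d_near, d_far):
    """Inverse-square law: flux at d_far as a fraction of the flux at d_near."""
    return (d_near / d_far) ** 2


if __name__ == "__main__":
    # A supernova that appears 5 magnitudes fainter than its absolute
    # magnitude sits 10 times farther away than the 10-parsec reference.
    print(distance_parsecs(ASSUMED_ABS_MAG + 5.0))
    # Doubling the distance cuts the received flux to one quarter.
    print(relative_flux(1.0, 2.0))
```

Pairing a distance estimated this way with the measured redshift of each supernova is what lets the expansion history be traced across epochs.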

The breakthrough 1998 discovery of the universe's accelerating expansion came from studying Type Ia supernovae in this manner. Scientists attribute the acceleration to an invisible energy, hence the name dark energy, inherent to the fabric of the universe itself. Subsequent decades of work have continued to compile ever-larger datasets, revealing supernovae across an even wider range of space and time, and Pantheon+ has now brought them together into the most statistically robust analysis to date.

"In many ways, this latest Pantheon+ analysis is a culmination of more than two decades' worth of diligent efforts by observers and theorists worldwide in deciphering the essence of the cosmos," says Adam Riess, one of the winners of the 2011 Nobel Prize in Physics for the discovery of the accelerating expansion of the universe and the Bloomberg Distinguished Professor at Johns Hopkins University (JHU) and the Space Telescope Science Institute in Baltimore, Maryland. Riess is also an alum of Harvard University, holding a Ph.D. in astrophysics.

Brout's own career in cosmology traces back to his undergraduate years at JHU, where he was taught and advised by Riess. There Brout worked with then-PhD-student and Riess-advisee Dan Scolnic, who is now an assistant professor of physics at Duke University and another co-author on the new series of papers.

Several years ago, Scolnic developed the original Pantheon analysis of approximately 1,000 supernovae.

Now, Brout, Scolnic, and their new Pantheon+ team have added some 50 percent more supernova data points. Coupled with improved analysis techniques and a more thorough treatment of potential sources of error, these additions have yielded twice the precision of the original Pantheon.

"This leap in both the dataset quality and in our understanding of the physics that underpins it would not have been possible without a stellar team of students and collaborators working diligently to improve every facet of the analysis," says Brout.

Taking the data as a whole, the new analysis holds that 66.2 percent of the universe manifests as dark energy, with the remaining 33.8 percent being a combination of dark matter and ordinary matter. To arrive at an even more comprehensive understanding of the constituent components of the universe at different epochs, Brout and colleagues combined Pantheon+ with other strongly evidenced, independent, and complementary measures of the large-scale structure of the universe and with measurements from the earliest light in the universe, the cosmic microwave background.

Another key Pantheon+ result relates to one of the paramount goals of modern cosmology: nailing down the current expansion rate of the universe, known as the Hubble constant. Pooling the Pantheon+ sample with data from the SH0ES (Supernova H0 for the Equation of State) collaboration, led by Riess, results in the most stringent local measurement of the current expansion rate of the universe.

Pantheon+ and SH0ES together find a Hubble constant of 73.4 kilometers per second per megaparsec with only 1.3% uncertainty. Stated another way, for every megaparsec, or 3.26 million light years, the analysis estimates that in the nearby universe, space itself is expanding at more than 160,000 miles per hour.
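The unit conversion behind these figures can be checked with a short sketch. The variable names are illustrative, and the light-years-per-parsec factor is the usual rounded value.

```python
KM_PER_MILE = 1.609344           # exact, by definition of the international mile
SECONDS_PER_HOUR = 3600
LIGHT_YEARS_PER_PARSEC = 3.2616  # rounded conversion factor

H0_KM_S_PER_MPC = 73.4           # value quoted in the article
FRACTIONAL_UNCERTAINTY = 0.013   # 1.3 percent, as quoted


def km_per_s_to_mph(speed_km_s):
    """Convert a speed from kilometers per second to miles per hour."""
    return speed_km_s * SECONDS_PER_HOUR / KM_PER_MILE


# Recession speed gained per megaparsec of separation, in miles per hour.
speed_mph = km_per_s_to_mph(H0_KM_S_PER_MPC)

# One megaparsec expressed in millions of light years.
mpc_in_million_ly = LIGHT_YEARS_PER_PARSEC

# Absolute uncertainty implied by the quoted 1.3 percent.
h0_uncertainty = H0_KM_S_PER_MPC * FRACTIONAL_UNCERTAINTY

if __name__ == "__main__":
    print(f"{speed_mph:,.0f} mph per megaparsec")
    print(f"1 Mpc is about {mpc_in_million_ly:.2f} million light years")
    print(f"H0 = {H0_KM_S_PER_MPC} +/- {h0_uncertainty:.2f} km/s/Mpc")
```

The result is a little over 164,000 miles per hour per megaparsec, consistent with the "more than 160,000" quoted above, and the 1.3 percent uncertainty corresponds to slightly under 1 kilometer per second per megaparsec.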

However, observations from an entirely different epoch of the universe's history tell a different story. Measurements of the universe's earliest light, the cosmic microwave background, when combined with the current Standard Model of Cosmology, consistently peg the Hubble constant at a value significantly lower than that obtained from Type Ia supernovae and other astrophysical markers. This sizable discrepancy between the two methodologies has been termed the Hubble tension.

The new Pantheon+ and SH0ES datasets heighten this Hubble tension. In fact, the tension has now passed the important 5-sigma threshold (about one-in-a-million odds of arising due to random chance) that physicists use to distinguish between possible statistical flukes and something that must accordingly be understood. Reaching this new statistical level highlights the challenge for both theorists and astrophysicists to explain the Hubble constant discrepancy.
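For readers who want to see where the "one-in-a-million" figure comes from, here is a minimal sketch that converts a sigma level into a chance probability. It assumes purely Gaussian errors, which is the usual convention for quoting significance but is an idealization. The function names are illustrative.

```python
import math


def two_sided_p_value(n_sigma):
    """Probability of a Gaussian fluctuation at least n_sigma away from
    the mean in either direction."""
    return math.erfc(n_sigma / math.sqrt(2.0))


def one_sided_p_value(n_sigma):
    """Probability of a Gaussian fluctuation at least n_sigma above the mean."""
    return 0.5 * math.erfc(n_sigma / math.sqrt(2.0))


if __name__ == "__main__":
    for label, p in (("two-sided", two_sided_p_value(5.0)),
                     ("one-sided", one_sided_p_value(5.0))):
        print(f"5 sigma, {label}: p = {p:.3g} (about 1 in {1.0 / p:,.0f})")
```

Under this assumption, 5 sigma corresponds to roughly 1 chance in 1.7 million (two-sided) or 1 in 3.5 million (one-sided), so "about one in a million" is an order-of-magnitude statement.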

"We thought it would be possible to find clues to a novel solution to these problems in our dataset, but instead we’re finding that our data rule out many of these options and that the profound discrepancies remain as stubborn as ever," says Brout.

The Pantheon+ results could help point to where the solution to the Hubble tension lies. "Many recent theories have begun pointing to exotic new physics in the very early universe, however, such unverified theories must withstand the scientific process and the Hubble tension continues to be a major challenge," says Brout.

Overall, Pantheon+ offers scientists a comprehensive look back through much of cosmic history. The earliest, most distant supernovae in the dataset gleam forth from 10.7 billion light years away, meaning from when the universe was roughly a quarter of its current age. In that earlier era, dark matter and its associated gravity held the universe's expansion rate in check. Such a state of affairs changed dramatically over the next several billion years as the influence of dark energy overwhelmed that of dark matter. Dark energy has since flung the contents of the cosmos ever farther apart and at an ever-increasing rate.
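The handover from matter to dark energy described above can be roughly located with a back-of-the-envelope sketch. It assumes a spatially flat universe in which dark energy behaves as a cosmological constant and radiation is negligible. This is a simplification for illustration, not the Pantheon+ analysis itself. It uses the 66.2 and 33.8 percent figures quoted in the article, and the function names are hypothetical.

```python
OMEGA_DARK_ENERGY = 0.662  # present-day fraction quoted in the article
OMEGA_MATTER = 0.338       # present-day fraction quoted in the article


def equality_redshift(omega_m, omega_de):
    """Redshift at which matter and dark-energy densities were equal.

    Matter density scales as (1 + z)**3, while a cosmological constant
    stays fixed, so equality requires omega_m * (1 + z)**3 == omega_de.
    """
    return (omega_de / omega_m) ** (1.0 / 3.0) - 1.0


def acceleration_onset_redshift(omega_m, omega_de):
    """Redshift at which the expansion switched from slowing to speeding up.

    In this simplified model the switch occurs when
    omega_m * (1 + z)**3 == 2 * omega_de.
    """
    return (2.0 * omega_de / omega_m) ** (1.0 / 3.0) - 1.0


if __name__ == "__main__":
    z_eq = equality_redshift(OMEGA_MATTER, OMEGA_DARK_ENERGY)
    z_acc = acceleration_onset_redshift(OMEGA_MATTER, OMEGA_DARK_ENERGY)
    print(f"densities equal near z = {z_eq:.2f}")
    print(f"acceleration begins near z = {z_acc:.2f}")
```

Under these assumptions the acceleration begins near a redshift of 0.58 and the two densities become equal near a redshift of 0.25, both well within the span of cosmic history that the supernova sample covers.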

"With this combined Pantheon+ dataset, we get a precise view of the universe from the time when it was dominated by dark matter to when the universe became dominated by dark energy," says Brout. "This dataset is a unique opportunity to see dark energy turn on and drive the evolution of the cosmos on the grandest scales up through present time."

Studying this changeover now with even stronger statistical evidence will hopefully lead to new insights into dark energy's enigmatic nature.

"Pantheon+ is giving us our best chance to date of constraining dark energy, its origins, and its evolution," says Brout.

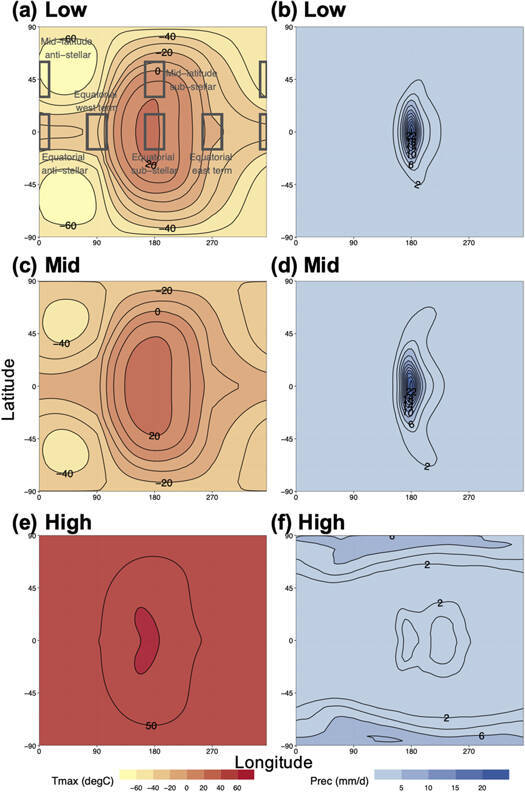
