The acceleration of climate change has increased forest dieback across a wide range of tree species and environments. In response to this alarming situation, researchers are studying transplantation strategies that take evolutionary mechanisms into account, such as moving trees to more compatible climates. A team of INRAE and CNRS scientists has developed models based on height growth in maritime pine to predict how trees respond in a given environment. Their results, published on April 29th in The American Naturalist, show that models which incorporate both genomic and climatic data predict tree height growth better than pre-existing models based on climatic data alone. This research could rapidly lead to tangible applications in forest conservation and management, notably in transplantation strategies.

Trees are a cornerstone of the functioning and survival of forest ecosystems. But as global change accelerates, certain tree populations that are too slow to adapt may decline or even go extinct. Conservation and forest management strategies can be implemented to avoid such scenarios, for example by moving trees to more compatible climates, known as assisted gene flow, or to threatened populations that lack genetic diversity, known as evolutionary rescue. Because such strategies commit forest management authorities for several years, it is important to anticipate how transplanted trees will respond to their new environment.

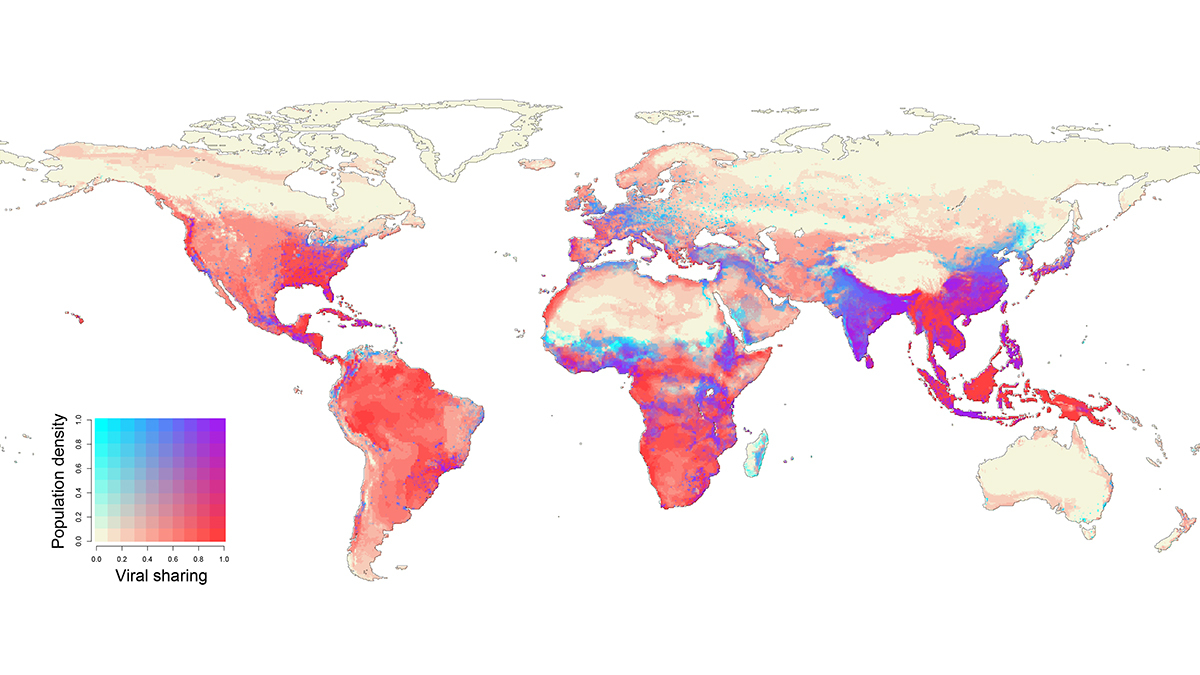

Until now, prediction models have been based mainly on the climate of origin of transplanted tree populations. However, genomic data provide valuable information on adaptive processes in trees, such as growth. With climatic and genomic information increasingly accessible, thanks in part to the continually decreasing cost of sequencing technology, the research team developed models combining these two types of data to improve the robustness and accuracy of predictions.

A model based on a large-scale experimental scheme of maritime pine in France, Spain, and Portugal

Researchers developed the models using maritime pine, an emblematic species of the Mediterranean basin. An experimental monitoring system was set up at five sites in France (Cestas Pierroton), Spain (Asturias, Cáceres, and Madrid), and Portugal (Fundão), with trees from 34 maritime pine populations collected throughout the species' natural habitat. The scientists focused on predicting the height growth of trees, a critical factor in economic and ecological terms given that the fastest-growing trees have a higher probability of survival and reproduction.

The results show that observed height variations in maritime pine are explained by the different gene pools from which the trees originate and by the different climates in which their populations have evolved. Incorporating both climatic and genomic data into the models improved predictions of population height growth by an average of 14–25%, depending on the experimental site, compared to models based on climatic data alone.

The findings hold potential for the development of models to predict how transplanted tree populations adapt to a new environment in the context of forest conservation and management.
