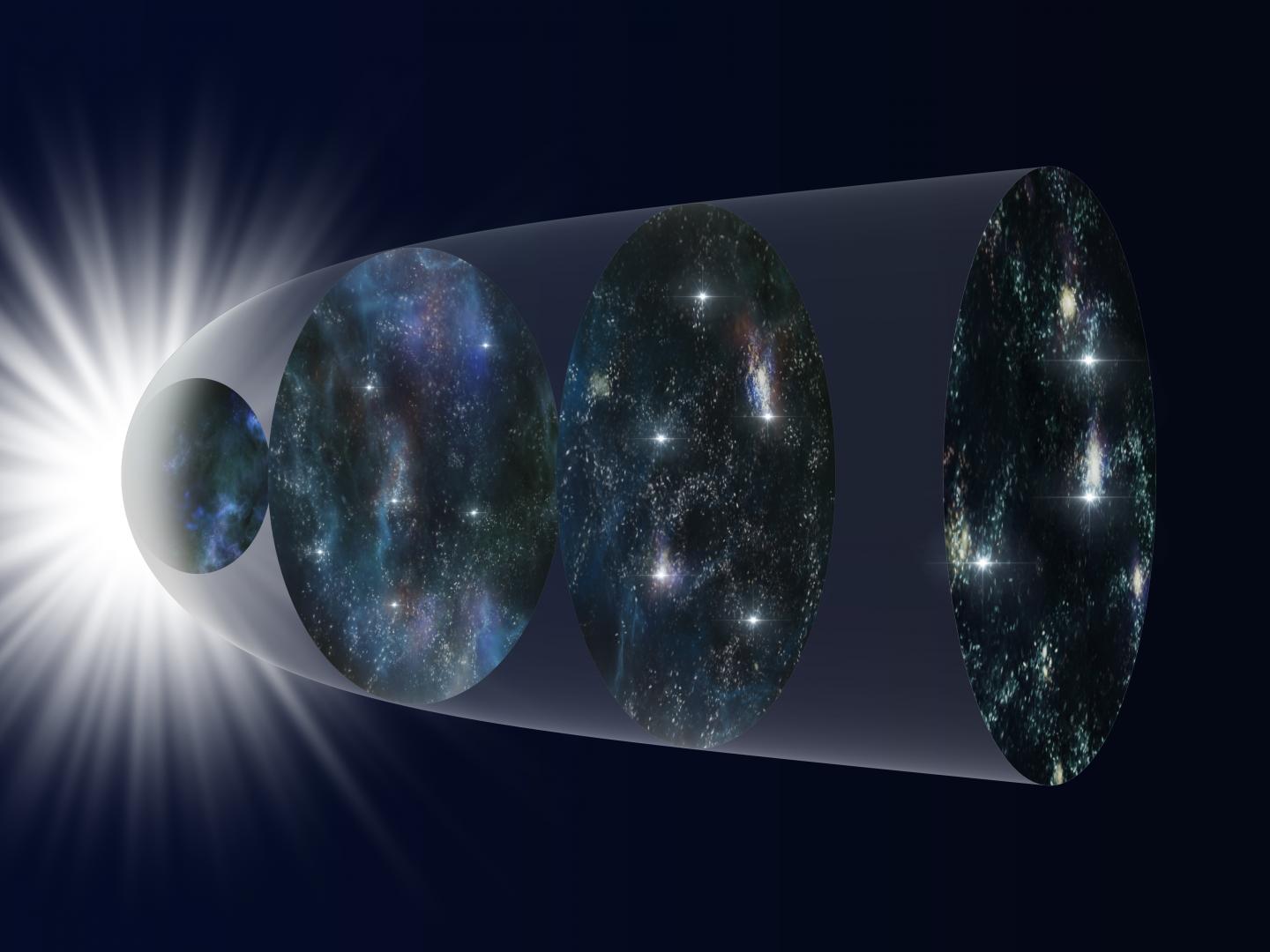

Heavy precipitation can cause severe economic and ecological damage as well as loss of life. Both its frequency and intensity have increased due to climate change, so it is becoming increasingly critical to model and predict heavy precipitation events accurately. However, current global climate models (GCMs) struggle to simulate tropical precipitation correctly, particularly heavy rainfall. Atmospheric scientists are working to identify and minimize the model biases that arise in simulating large-scale and convective precipitation.

"Unrealistic convective and large-scale precipitation components essentially contribute to the biases of simulated precipitation," said Prof. Jing Yang, a faculty member in the Geographical Science Department at Beijing Normal University.

Prof. Yang and her postgraduate student Sicheng He, along with Qing Bao from the Institute of Atmospheric Physics at the Chinese Academy of Sciences, explored the challenges and barriers to achieving realistic rainfall modeling from the perspective of convective and large-scale precipitation.

"Although sometimes total rainfall amounts can be simulated well, the convective and large-scale precipitation partitions are incorrect in the models," remarked Yang.

To clarify the status of the convective and large-scale precipitation components within current GCMs, the researchers classified 16 CMIP6 models with a focus on tropical heavy rainfall. In most of the models, far more of the simulated rainfall comes from the large-scale component than from the convective component, which is not realistic.

The research team divided the models into three distinct groups based on the percentage of large-scale precipitation: (1) whole mid-to-lower tropospheric wet biases (60%-80% large-scale rainfall); (2) mid-tropospheric wet peak (50% convective and 50% large-scale rainfall); and (3) lower-tropospheric wet peak (90%-100% large-scale rainfall).
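
As a rough illustration of the percentage-based grouping, the Python sketch below computes the large-scale share of total rainfall and maps it to the three groups named in the article. It is not the study's method: the function names, the `tolerance` used for the 50% group, and the "unclassified" label for values that fall between the quoted ranges are all assumptions made for this example.

```python
def large_scale_fraction(convective_mm, large_scale_mm):
    """Return the large-scale share of total precipitation, in percent."""
    total = convective_mm + large_scale_mm
    if total <= 0:
        raise ValueError("total precipitation must be positive")
    return 100.0 * large_scale_mm / total


def classify(ls_percent, tolerance=5.0):
    """Map a large-scale percentage to one of the three groups.

    The ranges are the ones quoted in the article. `tolerance` is a
    hypothetical half-width for the "50%" group, and values outside
    all quoted ranges are returned as "unclassified" (an assumption).
    """
    if 90.0 <= ls_percent <= 100.0:
        return "lower-tropospheric wet peak"
    if 60.0 <= ls_percent <= 80.0:
        return "whole mid-to-lower tropospheric wet bias"
    if abs(ls_percent - 50.0) <= tolerance:
        return "mid-tropospheric wet peak"
    return "unclassified"


# Hypothetical example: 30 mm convective and 70 mm large-scale rainfall
share = large_scale_fraction(30.0, 70.0)
group = classify(share)
```

In practice the two inputs would come from a model's separate convective and large-scale precipitation output, restricted to tropical heavy-rainfall events.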

These classifications are closely associated with the vertical distribution of moisture and clouds within the tropical atmosphere. Because the radiative effects of low and high clouds differ, the associated differences in vertical cloud distributions can potentially cause different climate responses, and therefore considerable uncertainties in climate projections.

The study was recently published in Advances in Atmospheric Sciences. "The associated vertical distribution of unique clouds potentially causes different climate feedback, suggesting accurate convective/large-scale rainfall partitions are necessary to reliable climate projection," noted Yang.
