Unlocking the secrets of the sea: How Japanese scientists are working to improve tsunami warning systems

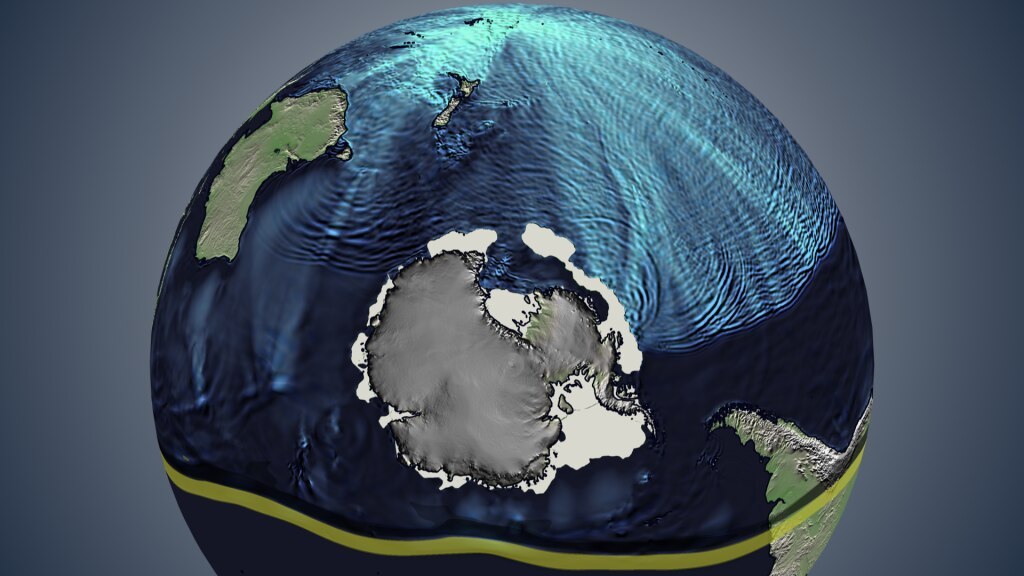

The Hunga Tonga-Hunga Ha'apai volcano in Tonga erupted on January 15, 2022, releasing massive amounts of energy into the atmosphere and ocean and triggering tsunamis across the Pacific Ocean. The Shocks, Solitons and Turbulence Unit at the Okinawa Institute of Science and Technology (OIST) in Japan has studied the atmospheric and oceanic disturbances during this event and developed a supercomputer model intended to enhance current tsunami early warning systems.

Stephen Winn, a research technician in the unit and first author of the research article, said, “It's important to know how the atmospheric wave changes in time to make accurate predictions that would be of use for warning systems.”

Unlike a regular tsunami, which is caused by a rapid movement of the seabed, the large waves from the Tonga explosion were also driven by a pressure wave hundreds of kilometers wide released into the atmosphere. The atmospheric pressure wave first moved upwards and then spread outwards, traveling at 1,141 km/h on average, about 400 km/h faster than a regular tsunami can travel in deep water. It traveled around the Earth, causing waves as far away as the Mediterranean Sea. “This was the first event of its kind recorded in detail by modern instruments,” said Prof. Emile Touber, leader of the Shocks, Solitons and Turbulence Unit.
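The speed comparison can be checked with a rough calculation. The sketch below uses the standard long-wave approximation, in which a tsunami in water of depth h travels at about sqrt(g·h). The 4,000 m depth is an illustrative assumption for the deep Pacific, not a figure from the study, and the function name is hypothetical.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def tsunami_speed_kmh(depth_m: float) -> float:
    """Long-wave (shallow-water) speed sqrt(g * h), converted to km/h."""
    if depth_m <= 0:
        raise ValueError("depth must be positive")
    return math.sqrt(G * depth_m) * 3.6  # m/s -> km/h


# Average speed of the atmospheric pressure wave quoted in the article.
ATMOSPHERIC_WAVE_KMH = 1141.0

# Assumption: a representative deep-ocean depth, chosen for illustration only.
ASSUMED_DEPTH_M = 4000.0

ocean_speed = tsunami_speed_kmh(ASSUMED_DEPTH_M)
difference = ATMOSPHERIC_WAVE_KMH - ocean_speed

print(f"Tsunami speed at {ASSUMED_DEPTH_M:.0f} m depth: {ocean_speed:.0f} km/h")
print(f"Atmospheric wave is faster by about {difference:.0f} km/h")
```

Under this assumed depth the gap comes out at roughly 400 km/h, in line with the figure quoted above. A deeper or shallower ocean would shift the result, since the tsunami speed grows with the square root of the depth.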

As the atmospheric wave travels above the ocean, it displaces the water underneath, creating waves that travel faster than a regular tsunami. “Normally, a tsunami wave created in the Pacific would not reach the Mediterranean because it would have to travel around land masses to get there, but atmospheric waves are not restricted, traveling over those land masses,” explained Dr. Adel Sarmiento, a postdoctoral researcher in the unit. This is why the resulting waves can reach coastlines worldwide and have a broader impact than a regular tsunami.

The scientists validated their model against measurements from the Tonga event. They used a state-of-the-art code, dNami, co-developed by Dr. Nicolas Alferez at the Conservatoire National des Arts et Métiers in Paris, France, to rapidly simulate the Earth during the event on the supercomputer at OIST. The code lets them run simulations at sufficient resolution and faster than real time, which is what makes them useful for improving warning systems in the future.

Prof. Touber explained that they can now more accurately predict the arrival time and height of a wave at a specific location and rapidly identify areas at high risk.
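To give a sense of the timescales involved, here is a back-of-the-envelope arrival-time estimate for the atmospheric wave. This is not the unit's model: it simply divides the great-circle distance by the 1,141 km/h average speed quoted in the article. The coordinates for the volcano and for Okinawa are approximate values assumed for illustration, and the function name is hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0        # mean Earth radius
ATMOSPHERIC_WAVE_KMH = 1141.0   # average speed quoted in the article


def great_circle_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points (degrees) via the haversine formula."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


# Assumption: approximate coordinates, for illustration only.
VOLCANO = (-20.54, -175.38)  # Hunga Tonga-Hunga Ha'apai (lat, lon)
OKINAWA = (26.21, 127.68)    # Naha, Okinawa (lat, lon)

distance_km = great_circle_km(*VOLCANO, *OKINAWA)
arrival_hours = distance_km / ATMOSPHERIC_WAVE_KMH

print(f"Distance: {distance_km:.0f} km")
print(f"Estimated arrival of the pressure wave: {arrival_hours:.1f} hours after the eruption")
```

A constant-speed estimate like this says nothing about wave height or local coastal effects, which is where a full simulation is needed.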

Hurricanes and typhoons can also cause atmospheric disturbances that interact with the sea, producing significant water level changes that affect coastlines. “With our model, we can explore what might happen to the water flow as it approaches the coast if the sea level changes by a certain amount with certain typical storm conditions,” Prof. Touber said. “This can help decide on the kind of coastal defense systems that should be put in place for storm-related surges.”

In short, the OIST team studied the interactions between the ocean and atmosphere that followed the Tonga eruption and developed a model that could help identify high-risk areas and improve existing tsunami warning systems. The work advances understanding of the interplay between ocean and atmosphere and has the potential to help protect lives and property in the future.
