We are in the midst of a scientific and technological revolution. The computers of today use artificial intelligence to learn from examples and execute sophisticated functions that until recently were thought impossible. These smart algorithms can recognize faces and even drive autonomous vehicles. Deep learning networks, which are responsible for many of these technological advances, are based on the same principles that form the structure of our brain: they are composed of artificial nerve cells that are connected through artificial synapses; these cells send signals to one another via these synapses.

Our basic understanding of neural function dates back to the 1950s. Based on this elementary understanding, present-day artificial neurons that are used in deep learning operate by summing their synaptic inputs linearly and generating in response one of two output states: “0” (OFF) and “1” (ON). In recent decades, however, the field of neuroscience has discovered that individual neurons are built from a complex branching system that contains many functional sub-regions. Indeed, the branching structure of neurons and the many synapses that contact it over its distributed surface area imply that single neurons might behave as an extensive network in which each sub-region has its own local, that is, nonlinear, input-output function.
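The classic artificial neuron described above can be sketched in a few lines of Python. This is a minimal illustration, not code from the study: the function name `threshold_neuron` and the example weights and threshold are assumptions chosen only to show the idea of a linear sum followed by an ON/OFF decision.

```python
def threshold_neuron(inputs, weights, threshold):
    """Sum the weighted synaptic inputs linearly and return 1 (ON) or 0 (OFF)."""
    # Linear summation: each input is scaled by the strength of its synapse.
    total = sum(x * w for x, w in zip(inputs, weights))
    # Two output states only: ON if the sum reaches the threshold, else OFF.
    return 1 if total >= threshold else 0


# Hypothetical values for illustration: three synapses of equal strength.
active = threshold_neuron([1, 0, 1], [0.5, 0.5, 0.5], threshold=1.0)
silent = threshold_neuron([1, 0, 0], [0.5, 0.5, 0.5], threshold=1.0)
```

A unit like this has no internal sub-regions: every synapse feeds the same single sum, which is the simplification the newer picture of the neuron calls into question.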

New research at the Hebrew University of Jerusalem (HU) seeks to understand the computing power of a neuron systematically. If one maps the input-output (I/O) of a neuron for many synaptic inputs (many examples), then one may be able to examine how “deep” an analogous network should be to replicate the I/O characteristics of the neuron. Ph.D. student David Beniaguev and Professors Michael London and Idan Segev, at HU’s Edmond and Lily Safra Center for Brain Science (ELSC), have undertaken this challenge and published their findings in the prestigious journal Neuron.

The objective of the study is to understand how individual nerve cells, the building blocks of the brain, translate synaptic inputs to their electrical output. In doing so, the researchers seek to create a new kind of deep learning artificial infrastructure that will act more like the human brain and produce capabilities as impressive as the brain’s. “The new deep learning network that we propose is built from artificial neurons whereby each of them is already 5-7 layers deep. These units are connected, via artificial synapses, to the layers above and below it,” Segev explained.

In the present state of deep neuronal networks, every artificial neuron responds to input data (synapses) with a “0” or a “1”, based on the synaptic strength it receives from the previous layer. Based on that strength, the synapse either sends (excites) or withholds (inhibits) a signal to neurons in the next layer. The neurons in the second layer then process the data that they received and transfer the output to the cells in the next level, and so on. For example, a network that is supposed to respond to cats (but not to other animals) should respond to a cat with a “1” at the last (deepest) output neuron, and with a “0” otherwise. Present-day deep neuronal networks have demonstrated that they can learn this task and perform it extremely well.
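The layer-by-layer flow described above can be illustrated with a toy two-layer network. This is a sketch under stated assumptions: the three input features (`has_whiskers`, `meows`, `barks`), the hand-picked weights, and the function names are all hypothetical. A real deep network learns its weights from many examples and works on raw data such as image pixels.

```python
def step(total, threshold):
    """Binary output: 1 (ON) if the summed input reaches the threshold, else 0."""
    return 1 if total >= threshold else 0


def layer(inputs, weight_rows, thresholds):
    """Run one layer: each neuron sums its weighted inputs and outputs 0 or 1."""
    return [
        step(sum(x * w for x, w in zip(inputs, row)), t)
        for row, t in zip(weight_rows, thresholds)
    ]


def cat_detector(features):
    """features = [has_whiskers, meows, barks], each 0 or 1 (hypothetical)."""
    # Hidden neuron 0 fires only if whiskers AND meowing are both present;
    # hidden neuron 1 fires if barking is present.
    hidden = layer(features, [[1, 1, 0], [0, 0, 1]], [2, 1])
    # The output neuron is excited by the first hidden neuron (+1) and
    # inhibited by the second (-1).
    return layer(hidden, [[1, -1]], [1])[0]


is_cat = cat_detector([1, 1, 0])   # whiskers and meows, no barking
is_dog = cat_detector([1, 0, 1])   # whiskers and barking
```

The negative weight on the second hidden neuron plays the role of an inhibitory synapse: when that neuron fires, it pulls the output neuron's sum below its threshold.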

This approach allows computers in driverless cars, for example, to learn when they have arrived at a traffic light or a pedestrian crossing – even if the computer has never before seen that specific crosswalk. “Despite the remarkable successes that are defined as a ‘game changer’ for our world, we still don’t completely appreciate how deep learning is capable of doing what it does and many people across the world are trying to figure it out,” Segev shared.

The ability of each deep-learning network is also limited to the specific task that it’s being asked to perform. A system that was taught to identify cats isn’t able to identify dogs. Furthermore, a dedicated system needs to be in place to detect the connection between meow and cats. While the success of deep learning is amazing for specific tasks, these systems lag far behind the human brain in their ability to multi-task. “We don’t need more than one driverless car accident to realize the inherent dangers in these limitations,” Segev quipped.

Currently, significant research is being focused on providing artificial deep learning with more intelligent and all-encompassing abilities, such as the ability to process and correlate between different stimuli, to relate to different aspects of the cat (sight, hearing, touch, etc.), and to learn how to translate those various aspects into meaning. These are capabilities at which the human brain excels and which deep learning has not yet been able to achieve.

“Our approach is to use deep learning capabilities to create a computerized model that best replicates the I/O properties of individual neurons in the brain,” Beniaguev explained. To do so, the researchers relied on mathematical modeling of single neurons, a set of differential equations that were developed by Segev and London. This model allows them to accurately simulate the detailed electrical processes taking place in different regions of the simulated neuron and to best map the complex transformation of the barrage of synaptic inputs and the electrical current that they produce through the tree-like structure (dendritic tree) of the nerve cell. The researchers used this model to seek a deep neural network (DNN) that replicated the I/O of the simulated neuron. They found that this task is achieved by a DNN that is 5-7 layers deep.
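The search for how deep a network must be can be thought of as trying networks of increasing depth and keeping the shallowest one that reproduces the simulated neuron well enough. The sketch below shows only that selection step. The function name `minimal_depth`, the fit scores, and the target value are hypothetical placeholders, not the researchers' procedure or results.

```python
def minimal_depth(fit_by_depth, target):
    """Return the smallest depth whose fit score meets the target, or None."""
    for depth in sorted(fit_by_depth):
        if fit_by_depth[depth] >= target:
            return depth
    return None


# Illustrative placeholder scores only; these are NOT results from the study.
# Each key is a network depth, each value a made-up measure (0 to 1) of how
# well a network of that depth matched the simulated neuron's output.
example_scores = {1: 0.60, 3: 0.80, 5: 0.95, 7: 0.99}

shallowest = minimal_depth(example_scores, target=0.90)
```

Applying the same selection to different neuron types would give each one a depth figure that can be compared directly.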

The team hopes that building deep-learning networks based closely on real neurons, which, as they have shown, are already quite deep on their own, will enable the networks to perform more complex and more efficient learning processes that are more similar to those of the human brain. “An illustration of this would be for the artificial network to recognize a cat with fewer examples and to perform functions like internalizing language meaning. However, these are processes that we still have to prove possible by our suggested DNNs with continued research,” Segev stressed. Such a system wouldn’t just mean changing the representation of single neurons in the respective artificial neuronal network; it would also combine in the artificial network the characteristics of different neuron types, as is the case in the human brain. “The end goal would be to create a computerized replica that mimics the functionality, ability, and diversity of the brain – to create, in every way, true artificial intelligence,” Segev added.

This study also offered the first chance to map and compare the processing power of the different types of neurons. “For example, to simulate neuron A, we need to map seven different levels of deep learning from specific neurons, while neuron B may need nine such layers,” Segev explained. “In this way, we can quantitatively compare the processing power of the nerve cell of a mouse with a comparable cell in a human brain, or between two different types of neurons in the human brain.”

On an even more basic level, the development of a computer model based on a machine learning approach that so accurately simulates brain function is likely to provide a new understanding of the brain itself. “Our brain developed methods to build artificial networks that replicate its own learning capabilities and this in return allows us to better understand the brain and ourselves,” Beniaguev concluded.

Case Western Reserve University lab reports 84% accuracy in predicting from initial chest scan which patients will need breathing help; planning website for UH, Cleveland VA physicians to upload scans

Researchers at Case Western Reserve University have developed an online tool to help medical staff quickly determine which COVID-19 patients will need help breathing with a ventilator.

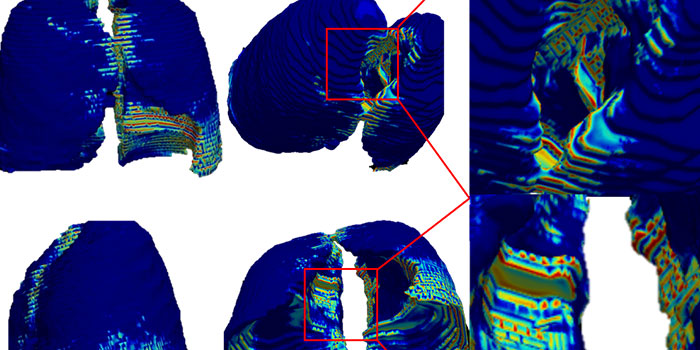

The tool, developed through analysis of CT scans from nearly 900 COVID-19 patients diagnosed in 2020, was able to predict ventilator need with 84% accuracy.

“That could be important for physicians as they plan how to care for a patient—and, of course, for the patient and their family to know,” said Anant Madabhushi, the Donnell Institute Professor of Biomedical Engineering at Case Western Reserve and head of the Center for Computational Imaging and Personalized Diagnostics (CCIPD). “It could also be important for hospitals as they determine how many ventilators they’ll need.”

Next, Madabhushi said he hopes to use those results to try out the computational tool in real time with COVID-19 patients at University Hospitals and Louis Stokes Cleveland VA Medical Center.

If successful, he said medical staff at the two hospitals could upload a digitized image of the chest scan to a cloud-based application, where the AI at Case Western Reserve would analyze it and predict whether that patient would likely need a ventilator.

The dire need for ventilators

Patients with severe cases of COVID-19 commonly need to be placed on ventilators to ensure they can continue to take in enough oxygen as they breathe.

Yet, almost from the start of the pandemic, the number of ventilators needed to support such patients far outpaced available supplies—to the point that hospitals began “splitting” ventilators—a practice in which a ventilator assists more than one patient.

While 2021’s climbing vaccination rates dramatically reduced COVID-19 hospitalization rates—and, in turn, the need for ventilators—the recent emergence of the Delta variant has again led to shortages in some areas of the United States and in other countries.

“These can be gut-wrenching decisions for hospitals—deciding who is going to get the most help against an aggressive disease,” Madabhushi said.

To date, physicians have lacked a consistent and reliable way to identify which newly admitted COVID-19 patients are likely to need ventilators—information that could prove invaluable to hospitals managing limited supplies.

Researchers in Madabhushi’s lab began their efforts to provide such a tool by evaluating the initial scans taken in 2020 from nearly 900 patients from the U.S. and from Wuhan, China—among the first known cases of the disease caused by the novel coronavirus.

Madabhushi said those CT scans revealed—with the help of deep-learning computers, or Artificial Intelligence (AI)—distinctive features for patients who later ended up in the intensive care unit (ICU) and needed help breathing.

The research behind the tool appeared this month in the IEEE Journal of Biomedical and Health Informatics.

Amogh Hiremath, a graduate student in Madabhushi’s lab and lead author on the paper, said patterns on the CT scans couldn’t be seen by the naked eye but were revealed only by the computers.

“This tool would allow for medical workers to administer medications or supportive interventions sooner to slow down disease progression,” Hiremath said. “And it would allow for early identification of those at increased risk of developing severe acute respiratory distress syndrome—or death. These are the patients who are ideal ventilator candidates.”

Further research into ‘immune architecture’

Madabhushi’s lab also recently published research comparing autopsy tissue scans taken from patients who died from the H1N1 virus (Swine Flu) and from COVID-19. While the results are preliminary, they do appear to reveal information about what Madabhushi called the “immune architecture” of the human body in response to the viruses.

“This is important because the computer has given us information that enriches our understanding of the mechanisms in the body against viruses,” he said. “That can play a role in how we develop vaccines, for example.”

Germán Corredor Prada, a research associate in Madabhushi’s lab who was the primary author on the paper, said computer vision and AI techniques allowed the scientists to study how certain immune cells organize in the lung tissue of some patients.

“This allowed us to find information that may not be obvious by simple visual inspection of the samples,” Corredor said. “These COVID-19-related patterns seem to be different from those of other diseases such as H1N1, a comparable viral disease.”

Eventually, when combined with other clinical work and further tests in larger sets of patients, this discovery could serve to improve the world’s understanding of these diseases and maybe others, he said.

Madabhushi established the CCIPD at Case Western Reserve in 2012. The lab now includes more than 60 researchers. Some were involved in this most recent COVID-19 work, including graduate students Hiremath and Pranjal Vaidya; research associates Corredor and Paula Toro; and research faculty Cheng Lu and Mehdi Alilou.
