Engineers have achieved unprecedented bandwidth and brightness on chip-sized nanophotonic devices.

Quantum entanglement, or what Albert Einstein once referred to as “spooky action at a distance,” occurs when two quantum particles are connected, even when they are millions of miles apart. Any observation of one particle affects the other as if they were communicating with each other. When this entanglement involves photons, interesting possibilities emerge, including entangling the photons’ frequencies, the bandwidth of which can be controlled.

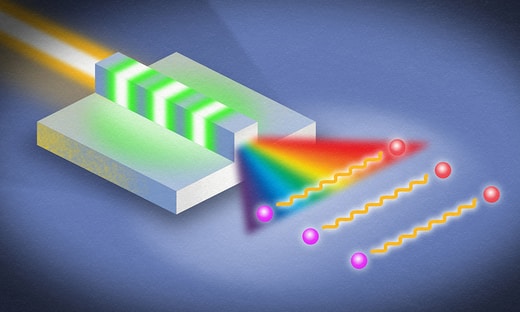

Researchers at the University of Rochester have taken advantage of this phenomenon to generate an incredibly large bandwidth by using a thin-film nanophotonic device they describe in Physical Review Letters.

The breakthrough could lead to:

- Enhanced sensitivity and resolution for experiments in metrology and sensing, including spectroscopy, nonlinear microscopy, and quantum optical coherence tomography

- Higher-dimensional encoding of information in quantum networks for information processing and communications

“This work represents a major leap forward in producing ultra-broadband quantum entanglement on a nanophotonic chip,” says Qiang Lin, professor of electrical and computer engineering. “And it demonstrates the power of nanotechnology for developing future quantum devices for communication, computing, and sensing.”

No more tradeoff between bandwidth and brightness

To date, most devices used to generate broadband entanglement of light have resorted to dividing up a bulk crystal into small sections, each with slightly varying optical properties and each generating different frequencies of the photon pairs. The frequencies are then added together to give a larger bandwidth.

“This is quite inefficient and comes at a cost of reduced brightness and purity of the photons,” says lead author Usman Javid, a Ph.D. student in Lin’s lab. In those devices, “there will always be a tradeoff between the bandwidth and the brightness of the generated photon pairs, and one has to make a choice between the two. We have completely circumvented this tradeoff with our dispersion engineering technique to get both: a record-high bandwidth at a record-high brightness.”

The thin-film lithium niobate nanophotonic device created by Lin’s lab uses a single waveguide with electrodes on both sides. Whereas a bulk device can be millimeters across, the thin-film device has a thickness of 600 nanometers—more than a million times smaller in its cross-sectional area than a bulk crystal, according to Javid. This makes the propagation of light extremely sensitive to the dimensions of the waveguide.

Indeed, even a variation of a few nanometers can cause significant changes to the phase and group velocity of the light propagating through it. As a result, the researchers’ thin-film device allows precise control over the bandwidth in which the pair-generation process is momentum-matched. “We can then solve a parameter optimization problem to find the geometry that maximizes this bandwidth,” Javid says.
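A rough way to see why geometry sets the bandwidth is the standard textbook expansion below. It is an illustration, not the paper's own derivation, and it assumes the pairs come from a down-conversion-type process in which a pump photon at frequency $\omega_p$ splits into two photons at $\omega_p/2 \pm \Omega$. The symbols $k(\omega)$ (propagation constant of the waveguide mode), $k_p$ (the same for the pump mode), $L$ (waveguide length), and $\beta_n$ are introduced here and do not appear in the article.

$$
\begin{aligned}
\Delta k(\Omega) &= k_p(\omega_p) - k\!\left(\tfrac{\omega_p}{2}+\Omega\right) - k\!\left(\tfrac{\omega_p}{2}-\Omega\right) \\
&\approx \Delta k(0) - \beta_2\,\Omega^2 - \tfrac{1}{12}\,\beta_4\,\Omega^4,
\qquad \beta_n = \left.\frac{d^n k}{d\omega^n}\right|_{\omega_p/2}
\end{aligned}
$$

The odd-order terms cancel because the two photons sit symmetrically about $\omega_p/2$, and any constant offset (for example from periodic poling, if used) is absorbed into $\Delta k(0)$. Pair generation stays efficient only while $|\Delta k(\Omega)|\,L$ remains of order $\pi$ or less, so with $\Delta k(0) = 0$ the usable detuning scales roughly as $\Omega_{\max} \sim \sqrt{\pi / (|\beta_2| L)}$. Because $\beta_2$ depends on the waveguide's width and thickness, a geometry that brings $\beta_2$ close to zero leaves only the much weaker $\Omega^4$ term to limit the bandwidth, which is plausibly the kind of quantity a geometry optimization would target.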

The device is ready to be deployed in experiments, but only in a lab setting, Javid says. To be used commercially, a more efficient and cost-effective fabrication process is needed. And although lithium niobate is an important material for light-based technologies, lithium niobate fabrication is “still in its infancy, and it will take some time to mature enough to make financial sense,” he says.

Other coauthors include Jingwei Ling and Mingxiao Li, graduate students in the Department of Electrical and Computer Engineering; Jeremy Staffa, a graduate student in the Institute of Optics; and Yang He, a postdoctoral researcher in the Department of Electrical and Computer Engineering.
