A collaboration between a former cosmologist and a computational neuroscientist at Janelia generates a new way to identify essential connections between brain cells.

After a career spent probing the mysteries of the universe, a Janelia Research Campus senior scientist is now exploring the mysteries of the human brain and developing new insights into the connections between brain cells.

Tirthabir Biswas had a successful career as a theoretical high-energy physicist when he came to Janelia on a sabbatical in 2018. Biswas still enjoyed tackling problems about the universe, but the field had lost some of its excitement, with many major questions already answered.

“Neuroscience today is a little like physics a hundred years ago when physics had so much data and they didn’t know what was going on and it was exciting,” says Biswas, who is part of the Fitzgerald Lab. “There is a lot of information in neuroscience and a lot of data, and they understand some specific big circuits, but there is still not an overarching theoretical understanding, and there is an opportunity to make a contribution.”

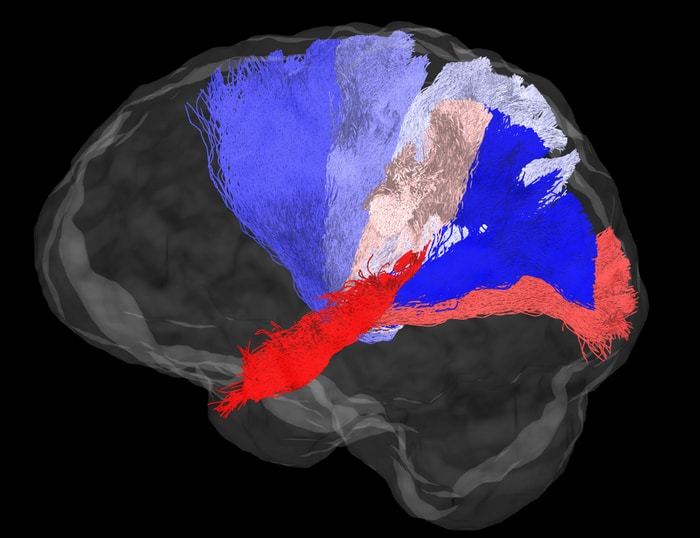

One of the biggest unanswered questions in neuroscience revolves around connections between brain cells. There are hundreds of times more connections in the human brain than there are stars in the Milky Way, but which brain cells are connected and why remains a mystery. This gap in understanding limits scientists’ ability to precisely treat mental health issues and develop more accurate artificial intelligence.

Developing a mathematical theory to better understand these connections was a problem Janelia Group Leader James Fitzgerald first posed when Biswas arrived in his lab.

While Fitzgerald was out of town for a few days, Biswas sat down with pen and paper and used his background in high-dimensional geometry to think about the problem – a different approach than that of neuroscientists, who typically rely on calculus and algebra to address mathematical problems. Within days, Biswas had a major insight into the solution and approached Fitzgerald as soon as he returned.

“It seemed this was a very difficult problem, so if I say, ‘I’ve solved the problem,’ he’ll probably think I’m crazy,” Biswas recalls. “But I decided to say it anyway.” Fitzgerald was initially skeptical, but once Biswas finished laying out his work, they both realized he was on to something important.

“He had an insight that is really fundamental to how these networks work that people hadn’t had before,” Fitzgerald says. “This insight was enabled by cross-disciplinary thinking. This insight was a flash of brilliance that he had because of how he thinks, and it just translated to this new problem he’s never worked on before.”

Biswas’s idea helped the team develop a new way to identify essential connections between brain cells, which was published on June 29 in Physical Review Research. By analyzing neural networks – mathematical models that mimic brain cells and their connections – they were able to figure out that certain connections in the brain may be more essential than others.

Specifically, they looked at how these networks transform inputs into outputs. For example, an input could be a signal detected by the eye and the output could be the resulting brain activity. They looked at which connection patterns resulted in the same input-output transformation.

As expected, there was an infinite number of possible connection patterns for each input-output combination. But they also found that certain connections appeared in every model, leading the team to suggest that these necessary connections could be present in real brains. A better understanding of which connections are more essential than others could lead to greater awareness of how real neural networks in the brain perform computations.
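The reasoning above can be illustrated with a toy calculation. The sketch below is not the team's published method: it assumes a single, purely linear model neuron, and the function name `fixed_connections`, the input patterns `X`, and the target outputs `y` are all hypothetical. When there are fewer input-output pairs than connections, infinitely many sets of connection weights reproduce the same transformation, yet some weights may still take the same value in every solution.

```python
import numpy as np

def fixed_connections(X, y, tol=1e-10):
    """Toy illustration (assumed linear neuron): output = X @ w.

    X: (P, N) array of P input patterns over N incoming connections.
    y: (P,) array of the outputs the neuron must produce.
    Returns one valid weight vector, a basis for the directions in which
    the weights can change without changing any output, and a boolean mask
    marking weights that are identical in every valid solution.
    """
    # One particular solution (the minimum-norm one) via the pseudoinverse.
    w0 = np.linalg.pinv(X) @ y

    # Null space of X: weight changes that leave every output unchanged.
    _, s, Vt = np.linalg.svd(X)
    rank = int(np.sum(s > tol))
    null_basis = Vt[rank:].T  # shape (N, N - rank)

    # A weight is pinned down if no null-space direction touches it.
    fixed = np.linalg.norm(null_basis, axis=1) < tol
    return w0, null_basis, fixed

# Hypothetical example: 3 connections, only 2 input-output pairs.
X = np.array([[1.0, 0.0, 0.0],
              [0.0, 1.0, 1.0]])
y = np.array([2.0, 1.0])

w0, null_basis, fixed = fixed_connections(X, y)

# Any w0 + c * null_basis[:, 0] gives the same outputs, so there are
# infinitely many solutions, but the first weight is the same in all of them.
print("one solution:", w0)
print("same in every solution:", fixed)
```

In this toy case the first connection is nonzero in every solution, so it plays the role of a necessary connection, while the other two can trade off against each other freely.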

The next step is for experimental neuroscientists to test this new mathematical theory to see if it can be used to make predictions about what is happening in the brain. The theory has direct applications to Janelia’s efforts to map the connectome of the fly brain and record brain activity in larval zebrafish. Theoretical principles worked out in these small animals could then be used to understand connections in the human brain, where recording such activity is not yet feasible.

“What we are trying to do is put forward some theoretical ways of understanding what really matters and use these simple brains to test those theories,” Fitzgerald says. “As they are verified in simple brains, the general theory can be used to think about how brain computation works in larger brains.”
