The team develops a simulator with 256 qubits, the largest of its kind ever created

A team of physicists from the Harvard-MIT Center for Ultracold Atoms and other universities has developed a special type of quantum supercomputer known as a programmable quantum simulator capable of operating with 256 quantum bits, or "qubits."

The system marks a major step toward building large-scale quantum machines that could be used to shed light on a host of complex quantum processes and eventually help bring about real-world breakthroughs in material science, communication technologies, finance, and many other fields, overcoming research hurdles that are beyond the capabilities of even the fastest supercomputers today. Qubits are the fundamental building blocks on which quantum supercomputers run and the source of their massive processing power.

"This moves the field into a new domain where no one has ever been to thus far," said Mikhail Lukin, the George Vasmer Leverett Professor of Physics, co-director of the Harvard Quantum Initiative, and one of the senior authors of the study published today in the journal Nature. "We are entering a completely new part of the quantum world."

According to Sepehr Ebadi, a physics student in the Graduate School of Arts and Sciences and the study's lead author, it is the combination of the system's unprecedented size and programmability that puts it at the cutting edge of the race for a quantum supercomputer, which harnesses the mysterious properties of matter at extremely small scales to greatly advance processing power. Under the right circumstances, the increase in qubits means the system can store and process exponentially more information than the classical bits on which standard computers run.

"The number of quantum states that are possible with only 256 qubits exceeds the number of atoms in the solar system," Ebadi said, explaining the system's vast size.
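The arithmetic behind that comparison can be checked in a few lines. The sketch below counts the basis states of an n-qubit register as 2^n and compares the result with a rough, order-of-magnitude figure for the number of atoms in the solar system. That figure (about 10^57) is an outside estimate used here for illustration, not a number from the study, and the names `basis_state_count` and `ATOMS_IN_SOLAR_SYSTEM_ESTIMATE` are hypothetical.

```python
# Each qubit doubles the number of basis states, so n qubits give 2**n of them.
def basis_state_count(n_qubits: int) -> int:
    """Return the number of basis states of an n-qubit register."""
    return 2 ** n_qubits

# ASSUMPTION: order-of-magnitude estimate only, not taken from the article.
ATOMS_IN_SOLAR_SYSTEM_ESTIMATE = 10 ** 57

states_2017 = basis_state_count(51)    # size of the earlier 51-qubit platform
states_now = basis_state_count(256)    # size of the new 256-qubit platform

# Python integers have arbitrary precision, so these comparisons are exact.
exceeds_estimate = states_now > ATOMS_IN_SOLAR_SYSTEM_ESTIMATE
growth_factor = states_now // states_2017   # equals 2**(256 - 51)
```

Under this estimate, the 256-qubit count is a 78-digit number, about twenty orders of magnitude larger than the atom estimate.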

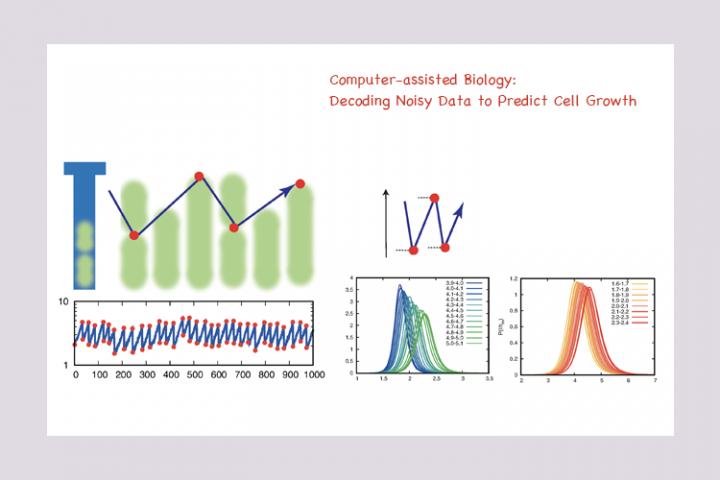

Already, the simulator has allowed researchers to observe several exotic quantum states of matter that had never before been realized experimentally, and to perform a quantum phase transition study so precise that it serves as the textbook example of how magnetism works at the quantum level.

These experiments provide powerful insights into the quantum physics underlying material properties and can help show scientists how to design new materials with exotic properties.

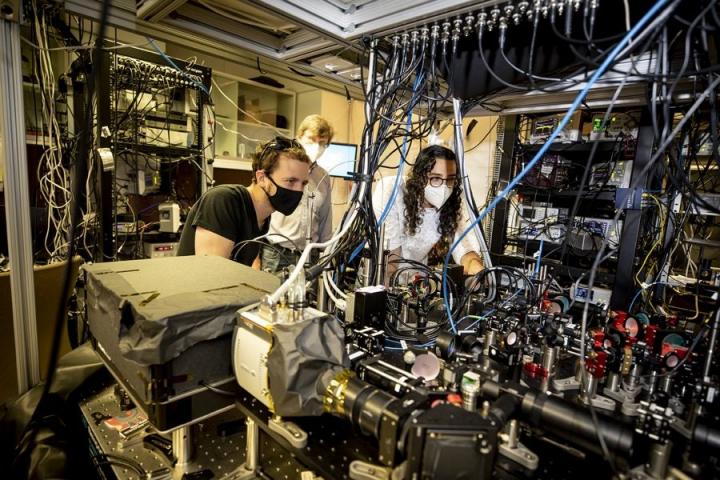

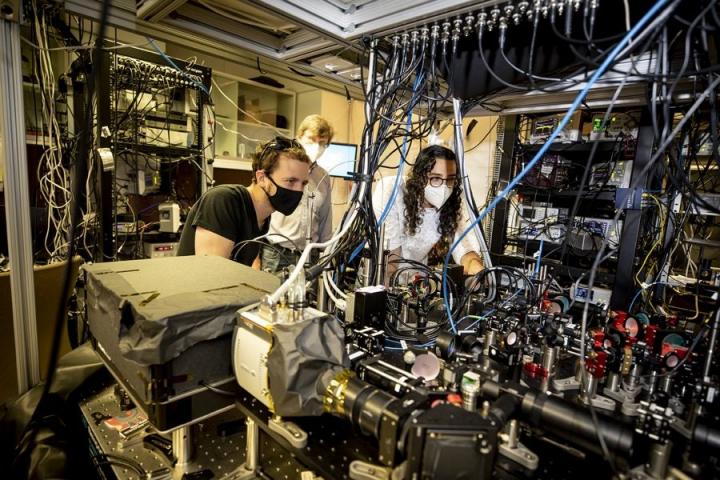

The project uses a significantly upgraded version of a platform the researchers developed in 2017, which was capable of reaching a size of 51 qubits. That older system allowed the researchers to capture ultra-cold rubidium atoms and arrange them in a specific order using a one-dimensional array of individually focused laser beams called optical tweezers.

This new system allows the atoms to be assembled in two-dimensional arrays of optical tweezers. This increases the achievable system size from 51 to 256 qubits. Using the tweezers, researchers can arrange the atoms in defect-free patterns and create programmable shapes like square, honeycomb, or triangular lattices to engineer different interactions between the qubits.

"The workhorse of this new platform is a device called the spatial light modulator, which is used to shape an optical wavefront to produce hundreds of individually focused optical tweezer beams," said Ebadi. "These devices are essentially the same as what is used inside a computer projector to display images on a screen, but we have adapted them to be a critical component of our quantum simulator."

The initial loading of the atoms into the optical tweezers is random, and the researchers must move the atoms around to arrange them into their target geometries. The researchers use a second set of moving optical tweezers to drag the atoms to their desired locations, eliminating the initial randomness. Lasers give the researchers complete control over the positioning of the atomic qubits and their coherent quantum manipulation.
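The rearrangement step can be pictured with a toy model. The sketch below is not the team's control software: it assumes a small grid of tweezer sites, a hypothetical 50 percent loading probability, and a simple greedy rule that fills each empty target site with the nearest spare atom. The function names (`load_randomly`, `plan_moves`, `apply_moves`) are invented for illustration, and a real experiment must also respect hardware constraints on how the moving tweezers travel.

```python
import random
from typing import List, Set, Tuple

Site = Tuple[int, int]  # (row, column) of a tweezer site

def load_randomly(rows: int, cols: int, fill_prob: float = 0.5,
                  seed: int = 0) -> Set[Site]:
    """Simulate random loading: each site holds an atom with probability fill_prob.

    ASSUMPTION: fill_prob = 0.5 is an illustrative value, not from the article.
    """
    rng = random.Random(seed)
    return {(r, c) for r in range(rows) for c in range(cols)
            if rng.random() < fill_prob}

def plan_moves(loaded: Set[Site], targets: Set[Site]) -> List[Tuple[Site, Site]]:
    """Greedily assign the nearest spare atom to each empty target site."""
    if len(loaded) < len(targets):
        raise ValueError("not enough atoms loaded to fill the target pattern")
    spare = loaded - targets          # atoms sitting outside the target pattern
    moves = []
    for dst in sorted(targets - loaded):   # target sites that are still empty
        # Nearest spare atom by Manhattan distance; ties broken by site order.
        src = min(spare, key=lambda s: (abs(s[0] - dst[0]) + abs(s[1] - dst[1]), s))
        spare.remove(src)
        moves.append((src, dst))
    return moves

def apply_moves(loaded: Set[Site], moves: List[Tuple[Site, Site]]) -> Set[Site]:
    """Return the occupied sites after dragging each atom from src to dst."""
    occupied = set(loaded)
    for src, dst in moves:
        occupied.remove(src)
        occupied.add(dst)
    return occupied

# Example: fill a 2x2 block in the middle of a 4x4 grid.
example_loaded = {(0, 0), (0, 3), (1, 1), (2, 0), (3, 3)}
example_targets = {(1, 1), (1, 2), (2, 1), (2, 2)}
example_moves = plan_moves(example_loaded, example_targets)
example_final = apply_moves(example_loaded, example_moves)
```

In this example one target site is already occupied, so three atoms are moved and every target site ends up filled. Atoms left outside the pattern are simply ignored here.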

Other senior authors of the study include Harvard Professors Subir Sachdev and Markus Greiner, who worked on the project along with Massachusetts Institute of Technology Professor Vladan Vuletić, and scientists from Stanford, the University of California, Berkeley, the University of Innsbruck in Austria, the Austrian Academy of Sciences, and QuEra Computing Inc. in Boston.

"Our work is part of a really intense, high-visibility global race to build bigger and better quantum computers," said Tout Wang, a research associate in physics at Harvard and one of the paper's authors. "The overall effort [beyond our own] has top academic research institutions involved and major private-sector investment from Google, IBM, Amazon, and many others."

The researchers are currently working to refine the system by improving laser control over the qubits and making the system more programmable. They are also actively exploring how the system can be used for new applications, ranging from probing exotic forms of quantum matter to solving challenging real-world problems that can be naturally encoded on the qubits.

"This work enables a vast number of new scientific directions," Ebadi said. "We are nowhere near the limits of what can be done with these systems."
